Google says hackers are abusing Gemini AI for all attack stages

Source: Bleeping Computer

“The PRC‑based threat actor fabricated a scenario, in one case trialing Hexstrike MCP tooling, and directing the model to analyse Remote Code Execution (RCE), WAF‑bypass techniques and SQL‑injection test results against specific US‑based targets.” – Google Threat Intelligence Group (GTIG)

AI‑enhanced malicious activity

The Google Threat Intelligence Group (GTIG) reported that adversaries are using Gemini to:

- Automate vulnerability analysis and produce targeted testing plans.

- Fix code, conduct research and obtain technical advice for intrusions.

- Accelerate social‑engineering campaigns and build tailored malicious tools, using the model for debugging, code generation and research into exploitation techniques.

- Extend existing malware families (e.g., CoinBait phishing kit, HonestCue downloader/launcher) with new AI‑driven capabilities.

Notable malware examples

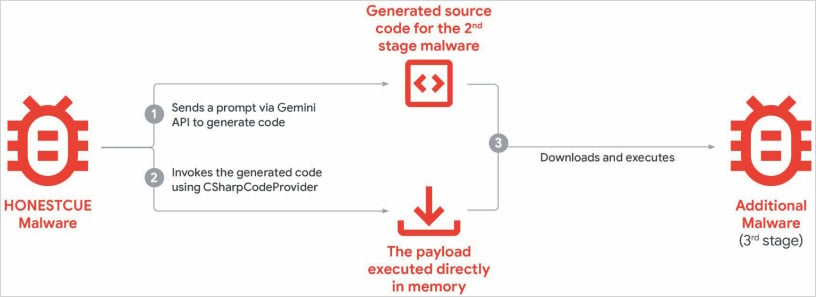

HonestCue

- Description: Proof‑of‑concept framework observed in late 2025 that calls the Gemini API to generate C# code for a second‑stage payload, which is then compiled and executed in memory.

CoinBait

- Description: A React SPA‑wrapped phishing kit masquerading as a cryptocurrency exchange. Development artifacts indicate heavy use of AI code‑generation tools.

- Indicator: Source‑code comments prefixed with "Analytics:", which can help defenders track data‑exfiltration steps.

- Platform used: Lovable AI (Lovable Supabase client, lovable.app).
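As a rough illustration of how a defender might hunt for the CoinBait indicators described above, the following Python sketch scans web source files for the "Analytics:" prefix and for references to lovable.app. The function names, the file extensions and the matching rules are assumptions made for this example and are not taken from the GTIG report. Both strings also occur in legitimate code, so any hit is only a lead for manual review.

```python
import re
from pathlib import Path

# Indicator strings come from the description above; the exact matching
# rules are an assumption for illustration, not GTIG's detection logic.
ANALYTICS_PREFIX = re.compile(r"Analytics:")
LOVABLE_REF = re.compile(r"lovable\.app", re.IGNORECASE)


def scan_text(text):
    """Return (line_number, indicator_name, stripped_line) for each match."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if ANALYTICS_PREFIX.search(line):
            hits.append((lineno, "analytics-prefix", line.strip()))
        if LOVABLE_REF.search(line):
            hits.append((lineno, "lovable-reference", line.strip()))
    return hits


def scan_directory(root, suffixes=(".js", ".jsx", ".ts", ".tsx", ".html")):
    """Scan files under root (hypothetical helper) and map each path to its hits."""
    results = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="replace")
            hits = scan_text(text)
            if hits:
                results[str(path)] = hits
    return results


if __name__ == "__main__":
    sample = '// Analytics: wallet form submitted\nconst x = 1;\n'
    for lineno, name, line in scan_text(sample):
        print(lineno, name, line)
```

Calling `scan_directory` on a folder containing a saved copy of a suspicious page would list each file with the matching lines, which an analyst could then review by hand.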

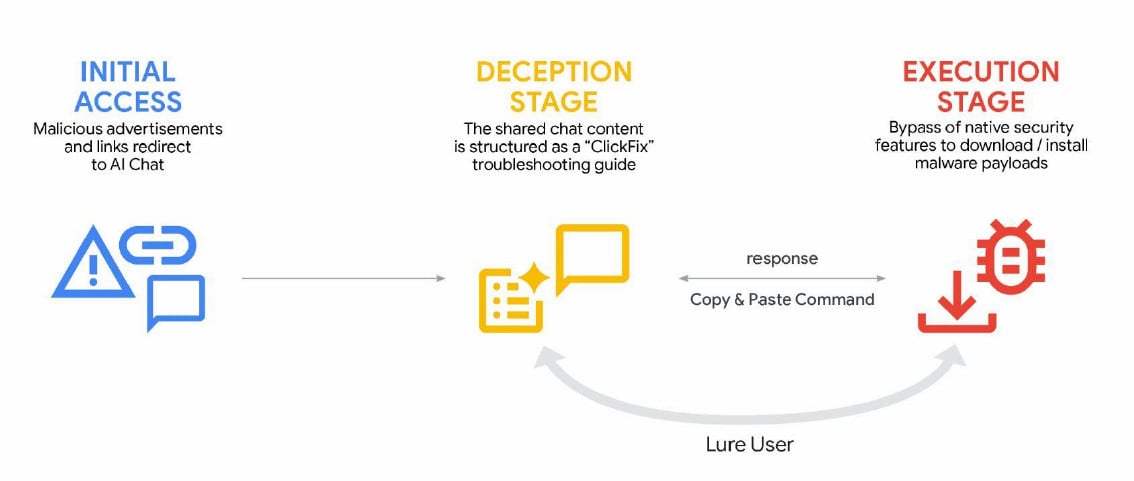

ClickFix campaigns

- Technique: Generative‑AI services are abused to deliver the AMOS info‑stealing macOS malware via malicious ads that appear in search results for troubleshooting queries.

Model extraction & knowledge distillation

GTIG also observed large‑scale attempts to extract Gemini’s behaviour and distill it into competing models:

- Scale: ~100 000 prompts aimed at reproducing Gemini’s reasoning across many non‑English tasks.

- Impact: Intellectual‑property theft, reduced cost and time for adversaries to build comparable AI services, and a threat to the AI‑as‑a‑service business model.

Google’s response

- Disabled abusive accounts and related infrastructure.

- Hardened Gemini’s classifiers to make abuse more difficult.

- Continues to embed robust security measures and safety guardrails, with regular testing to improve model security.

All images are used under fair‑use for illustrative purposes.
