The Great AI Convergence: PyTorch vs. TensorFlow in 2026

Source: Dev.to

In the rapidly evolving world of artificial intelligence, two titans continue to dominate the landscape: PyTorch and TensorFlow. For years developers have debated which framework is superior, but as we move through 2025 the conversation has shifted. It’s no longer just about “which is better,” but rather “which is right for your specific workflow.”

Whether you are a researcher pushing the boundaries of generative AI or an engineer deploying models to millions of edge devices, understanding the nuances of these frameworks is essential.

1. The Core Philosophy – Flexibility vs. Structure

| Aspect | PyTorch (Dynamic) | TensorFlow (Static/Hybrid) |
|---|---|---|
| Origin | Developed by Meta | Developed by Google |
| Computation Graph | Define‑by‑run: the graph is built on the fly as the code executes. This feels like native Python; you can use standard loops, conditionals, and debuggers (e.g., pdb). | Define‑and‑run: originally a static graph compiled before execution. TensorFlow 2.x made Eager Execution the default for PyTorch‑like flexibility, while tf.function still traces Python code into static graphs for optimization. |
| Optimization Focus | Emphasizes ease of experimentation and debugging. | Emphasizes aggressive hardware‑level optimizations (e.g., XLA, TPU support). |
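To make "define‑by‑run" concrete, here is a minimal, framework‑free sketch of reverse‑mode automatic differentiation in plain Python. It is a toy written for illustration, not PyTorch's actual autograd engine; the Value class and the function f are hypothetical. The point is that the graph is recorded while ordinary Python executes, so data‑dependent if/for logic needs no special graph operators.

```python
# Toy reverse-mode autodiff (hypothetical, for illustration only; not PyTorch's engine).


class Value:
    """A scalar that records how it was produced, so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents      # nodes this value was computed from
        self._backward_fn = None     # pushes self.grad into the parents

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other))

        def backward_fn():
            self.grad += out.grad
            other.grad += out.grad

        out._backward_fn = backward_fn
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other))

        def backward_fn():
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward_fn = backward_fn
        return out

    def backward(self):
        # Topologically order the graph that was recorded while Python ran.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            if node._backward_fn is not None:
                node._backward_fn()


def f(x):
    # Ordinary Python control flow: how many multiplications get recorded
    # depends on the runtime value of x.
    y = x
    steps = 3 if x.data > 0 else 1
    for _ in range(steps):
        y = y * x
    return y + 1.0


x = Value(2.0)
out = f(x)               # graph recorded during this call: x**4 + 1
out.backward()
print(out.data, x.grad)  # 17.0 32.0
```

Calling f with a negative input records a different, shorter graph on that run, which is exactly the flexibility a graph compiled ahead of time has to work harder to express.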

2. Developer Experience & Debugging

- PyTorch

  - Currently holds the crown for smooth Developer Experience (DX).

  - Integrates seamlessly with the Python ecosystem; debugging is as simple as inserting print() statements or breakpoints inside your training loop.

  - Clear stack traces make it easy to locate issues.

- TensorFlow

  - The gap has narrowed thanks to Keras, its high‑level API that enables rapid model building with a “Lego‑brick” style of stacking layers.

  - When you need to dive under the hood (custom loss functions, complex gradient calculations), error messages can still be cryptic compared with PyTorch’s straightforward traces.
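The debugging advantage comes from eager execution: each training step is ordinary Python, so you can print, assert, or stop at any intermediate value. The sketch below shows that pattern without any framework, fitting a hypothetical toy line y = 2x + 1 with hand‑written gradients; in an eager PyTorch loop the same print() or breakpoint() calls would sit in the same places.

```python
import math

# Hypothetical toy data: points on the line y = 2x + 1.
XS = [0.0, 1.0, 2.0, 3.0, 4.0]
YS = [2.0 * x + 1.0 for x in XS]


def train(lr=0.05, epochs=500, log_every=100):
    w, b = 0.0, 0.0
    n = len(XS)
    for epoch in range(epochs):
        # Forward pass: mean squared error of the current line.
        preds = [w * x + b for x in XS]
        loss = sum((p - y) * (p - y) for p, y in zip(preds, YS)) / n

        # Each step runs eagerly, so a plain check stops on the exact step
        # where training goes wrong, with a normal Python traceback.
        if not math.isfinite(loss):
            raise FloatingPointError(f"loss diverged at epoch {epoch}: {loss}")

        # Hand-derived MSE gradients with respect to w and b.
        grad_w = sum(2.0 * (p - y) * x for p, y, x in zip(preds, YS, XS)) / n
        grad_b = sum(2.0 * (p - y) for p, y in zip(preds, YS)) / n
        w -= lr * grad_w
        b -= lr * grad_b

        if epoch % log_every == 0:
            print(f"epoch {epoch}: loss={loss:.6f} w={w:.4f} b={b:.4f}")
        # breakpoint()  # uncomment to open pdb at this step
    return w, b


w, b = train()
```

Raising the learning rate (say to 1.0) makes the check fire partway through training, pointing at the offending epoch rather than at a compiled graph.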

3. Production & Deployment – The Enterprise Edge

| Feature | PyTorch | TensorFlow |
|---|---|---|
| Enterprise‑grade tooling | TorchServe for serving, TorchScript for model serialization, ONNX support for interoperability. | TensorFlow Extended (TFX) for end‑to‑end pipelines, TensorFlow Lite for mobile/IoT, TensorFlow Serving for scalable model serving with versioning and rolling updates. |
| Maturity for large‑scale deployments | Rapidly improving; suitable for many production scenarios. | Long‑standing, battle‑tested in massive, global infrastructures. |
| Ecosystem “batteries‑included” | Growing ecosystem, but still catching up to TensorFlow’s breadth. | Comprehensive suite covering data ingestion, training, validation, serving, and monitoring. |
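TensorFlow Serving's versioning rests on a simple on‑disk convention: a model base path contains numbered subdirectories (for example 1/, 2/), each holding a SavedModel, and under its default policy the server serves the highest‑numbered version as new ones appear. The plain‑Python sketch below mimics only that selection rule; resolve_version and its pinned argument are hypothetical helpers, not part of TensorFlow Serving's API (the real server is configured through its model config).

```python
import os
import tempfile


def resolve_version(base_path, pinned=None):
    """Return (version, path) a 'serve the latest version' rule would pick.

    Hypothetical helper: only subdirectories named with integers count as
    versions; anything else (e.g. a half-written 'tmp' dir) is ignored.
    """
    versions = sorted(
        int(name)
        for name in os.listdir(base_path)
        if name.isdigit() and os.path.isdir(os.path.join(base_path, name))
    )
    if not versions:
        raise FileNotFoundError(f"no numbered versions under {base_path}")
    # Pinning an older version is how a rollback would be expressed here.
    version = pinned if pinned is not None else versions[-1]
    if version not in versions:
        raise ValueError(f"version {version} not found; available: {versions}")
    return version, os.path.join(base_path, str(version))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as base:
        for name in ("1", "2", "10", "tmp"):
            os.makedirs(os.path.join(base, name))
        print(resolve_version(base)[0])            # 10 (numeric, not lexical, max)
        print(resolve_version(base, pinned=2)[0])  # 2 (rolled back)
```

Comparing versions as integers matters: a string sort would rank "2" above "10" and silently serve the wrong model.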

4. Performance – 2025 Benchmarks

- PyTorch 2.0+

  - Introduced torch.compile(), whose default backend (TorchInductor) generates Triton kernels for GPUs.

  - Reported speed‑ups are often in the 30 %–60 % range, from adding a single line of code.

- TensorFlow

  - Uses XLA (Accelerated Linear Algebra) to fuse operations and reduce memory overhead.

  - Particularly efficient on Google TPUs and high‑throughput inference workloads.

General observation:

- PyTorch tends to be slightly faster for prototyping and small‑to‑medium scale training.

- TensorFlow often edges out in large‑scale, high‑throughput inference scenarios.
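Operator fusion, the main trick behind XLA and behind the kernels torch.compile() generates, can be illustrated without a compiler. The plain‑Python sketch below applies a hypothetical element‑wise pipeline relu(x * 2 + 1) in two ways: op by op, materializing a full intermediate list after every step, and as one fused pass over the data. The buffer count is a rough stand‑in for memory traffic, not a benchmark of either framework.

```python
# Hypothetical element-wise pipeline: relu(x * 2 + 1).
OPS = [
    lambda v: v * 2.0,
    lambda v: v + 1.0,
    lambda v: max(v, 0.0),  # ReLU
]


def run_unfused(xs, ops):
    """Apply each op as its own pass, materializing a new list every time."""
    buffers = 0
    for op in ops:
        xs = [op(v) for v in xs]
        buffers += 1
    return xs, buffers


def run_fused(xs, ops):
    """Compose the ops and make one pass; only the output list is allocated."""
    def composed(v):
        for op in ops:
            v = op(v)
        return v

    return [composed(v) for v in xs], 1


data = [-2.0, -0.5, 0.0, 1.5, 3.0]
print(run_unfused(data, OPS))  # ([0.0, 0.0, 1.0, 4.0, 7.0], 3)
print(run_fused(data, OPS))    # ([0.0, 0.0, 1.0, 4.0, 7.0], 1)
```

Both versions compute the same values; on an accelerator, skipping the intermediate buffers saves round trips to device memory, which is where much of the fused kernel's speed comes from.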

TL;DR

- Choose PyTorch if you prioritize rapid experimentation, intuitive debugging, and a Python‑first feel.

- Choose TensorFlow if you need a mature, enterprise‑ready ecosystem with robust tooling for large‑scale production, especially when targeting mobile/IoT or TPU hardware.

Both frameworks have converged dramatically in flexibility and performance, so the “right” choice now hinges on the specifics of your workflow, team expertise, and deployment targets.

End‑to‑End ML Lifecycle Tools

- TensorFlow Extended (TFX) – Platform for managing end‑to‑end pipelines.

- TensorFlow Lite – Gold standard for deploying models on mobile (iOS/Android) and IoT devices.

- TensorFlow Serving – Highly mature tooling for deploying models at scale with built‑in versioning and rolling updates.

PyTorch has made massive strides with TorchServe, TorchScript, and support for the ONNX (Open Neural Network Exchange) format to bridge the gap. However, for a company that needs to deploy thousands of models across a global infrastructure, TensorFlow’s maturity is hard to beat.

5. Performance: The 2025 Benchmarks (Re‑examined)

- PyTorch 2.0+ introduced torch.compile(), which generates optimized Triton kernels through its default TorchInductor backend; reported speed‑ups are often 30 %–60 %, with a single line of code.

- TensorFlow leverages XLA to fuse operations and reduce memory overhead, making it exceptionally efficient on Google’s TPUs.
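Headline percentages are easier to act on once converted into step times. Assuming a speed‑up of s means throughput is multiplied by (1 + s), which is an interpretation rather than something the benchmarks above spell out, a step that took T ms now takes T / (1 + s) ms. A 30 % speed‑up therefore cuts step time by about 23 %, not 30 %. With a hypothetical 100 ms baseline:

```python
def step_time_after(baseline_ms, speedup):
    """Step time once throughput rises by `speedup` (0.30 means 30 % faster)."""
    return baseline_ms / (1.0 + speedup)


for s in (0.30, 0.60):
    print(f"{s:.0%} speed-up: {step_time_after(100.0, s):.1f} ms per step")
# 30% speed-up: 76.9 ms per step
# 60% speed-up: 62.5 ms per step
```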

Takeaways

- PyTorch is slightly faster for prototyping and small‑to‑medium‑scale training.

- TensorFlow often edges out in high‑throughput inference for large‑scale production workloads.

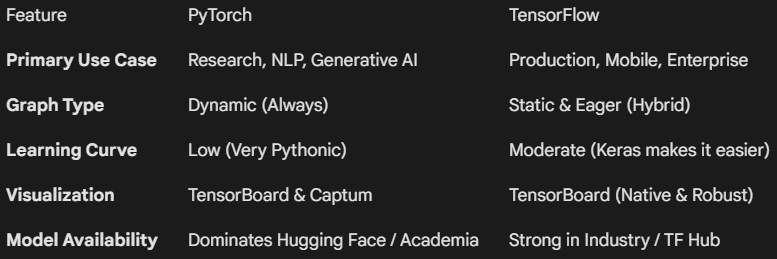

Comparison at a Glance

6. Ecosystem and Community

- PyTorch – The darling of the academic world. By some estimates, roughly 90 % of new AI papers on arXiv use PyTorch, making it the go‑to framework for cutting‑edge models such as GPT‑style language models and Stable Diffusion.

- TensorFlow – A massive corporate footprint. Deeply integrated into Google Cloud Platform (GCP) and preferred by large‑scale industries such as retail, finance, and healthcare, where stability and long‑term support are prioritized over experimental features.

Final Verdict: Which One Should You Choose?

The “Framework Wars” have reached a peaceful stalemate—both tools are excellent, but they serve different masters.

- Choose PyTorch if: you are a student, researcher, or developer at a startup; you want to iterate fast, experiment with custom architectures, and have access to the latest open‑source models on Hugging Face.

- Choose TensorFlow if: you are working in a large‑scale production environment, need to deploy models to mobile/web, or are heavily invested in the Google Cloud ecosystem.

Pro Tip

In 2026 the most valuable AI engineers are “bilingual.” Since the underlying concepts (tensors, back‑propagation, optimizers) are identical, mastering one framework makes learning the other much easier.
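As one concrete instance of "identical concepts": PyTorch's torch.optim.SGD and Keras's SGD optimizer both implement momentum, but their documentation writes the update differently. PyTorch keeps a buffer buf = momentum * buf + grad and steps p -= lr * buf; Keras keeps velocity = momentum * velocity - lr * grad and steps p += velocity. The plain‑Python sketch below, using a hypothetical quadratic loss and no framework, shows that the two bookkeeping styles trace the same parameter path when the learning rate is constant.

```python
# Hypothetical toy problem: minimize (p - 3)^2, whose gradient is 2 * (p - 3).
def grad(p):
    return 2.0 * (p - 3.0)


def sgd_momentum_pytorch_style(p, lr, momentum, steps):
    """Buffer accumulates raw gradients; lr is applied when stepping."""
    buf, path = 0.0, []
    for _ in range(steps):
        buf = momentum * buf + grad(p)
        p = p - lr * buf
        path.append(p)
    return path


def sgd_momentum_keras_style(p, lr, momentum, steps):
    """Velocity already includes lr; the parameter simply adds it."""
    velocity, path = 0.0, []
    for _ in range(steps):
        velocity = momentum * velocity - lr * grad(p)
        p = p + velocity
        path.append(p)
    return path


torch_like = sgd_momentum_pytorch_style(0.0, lr=0.1, momentum=0.9, steps=300)
keras_like = sgd_momentum_keras_style(0.0, lr=0.1, momentum=0.9, steps=300)
print(torch_like[-1], keras_like[-1])  # both very close to 3.0
```

Because the Keras velocity equals -lr times the PyTorch buffer at every step, the paths coincide only while lr is constant; under a learning‑rate schedule the two conventions can differ slightly, which is worth knowing when porting a recipe between frameworks.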