LLM research on Hacker News is drying up

Source: Hacker News

Overview

I thought I was seeing fewer arXiv papers on the front page of Hacker News (HN) these days, and I wanted to check if that was real.

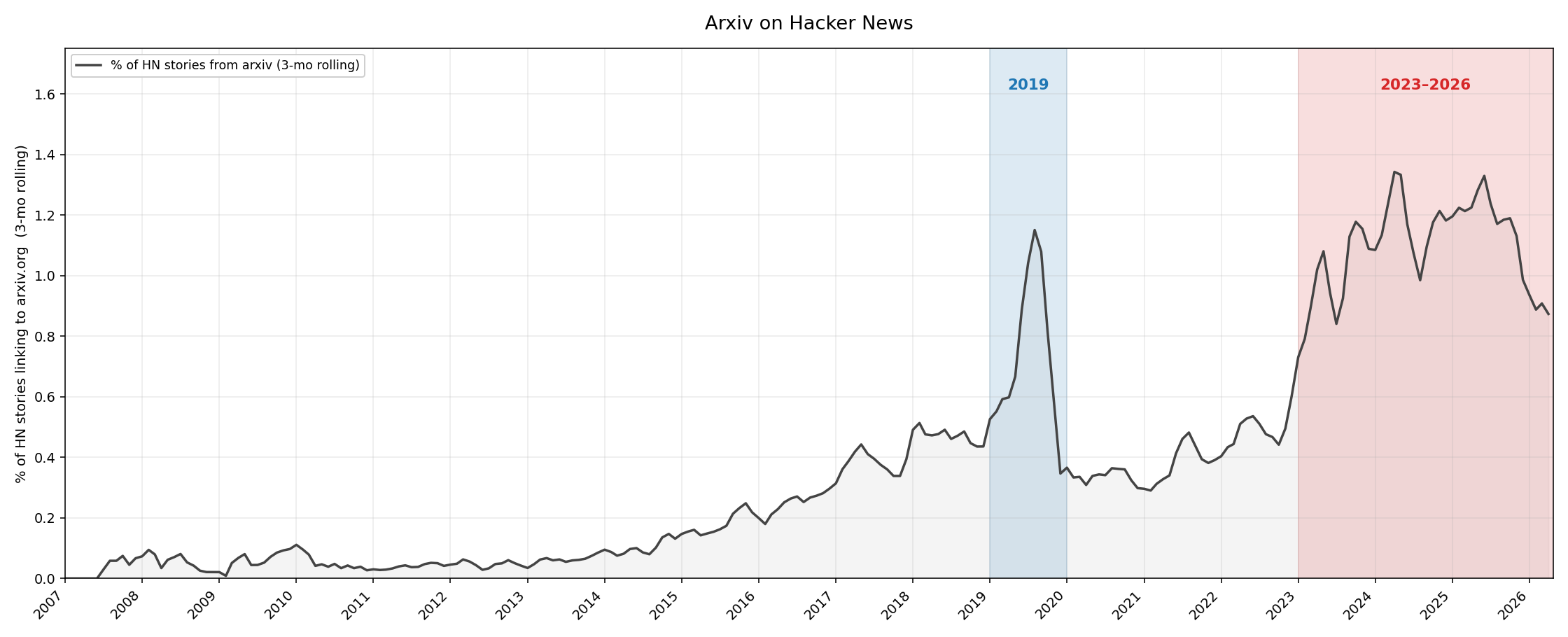

So I asked Claude to run a quick analysis: track the share of arXiv stories on HN over time. It queried the BigQuery HN dataset, bucketed the stories by month, and plotted the series:

Percentage of HN stories linking to arXiv – the trend confirms my hunch: arXiv posts have been decreasing rapidly in the last few months. Interestingly, there was another peak around 2019, which prompted further investigation.

Topic distribution of arXiv stories

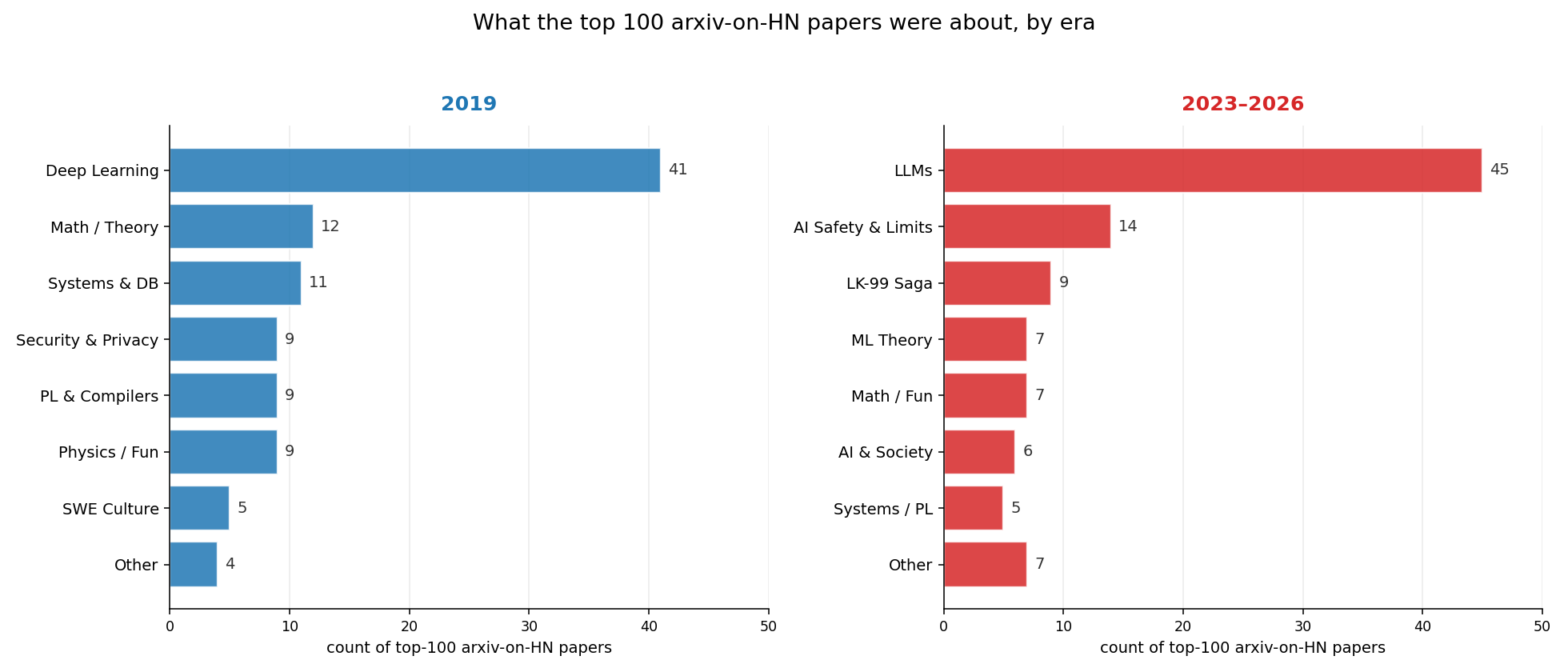

I asked Claude to pull the top 100 papers by upvotes from 2019 and group them by topic. It was the deep‑learning peak: 41 % of the top 100 were about deep learning. Running the same query for 2023‑2026 showed that 59 % of the top 100 upvoted papers were about LLMs or AI.

The resulting chart:

Distribution of topics of arXiv stories

2019 papers that aged well

Claude identified the 2019 papers that still held up among the top 100:

- MuZero — Mastering Atari, Go, Chess and Shogi by Planning with a Learned Model (161 pts) – DeepMind’s successor to AlphaZero

- EfficientNet — Rethinking Model Scaling for Convolutional Neural Networks (119 pts) – Compound scaling, set the new CV SOTA

- XLNet — Generalized Autoregressive Pretraining for Language Understanding (79 pts) – Briefly dethroned BERT

- PyTorch: An Imperative Style, High-Performance Deep Learning Library (113 pts) – The NeurIPS paper formalizing PyTorch’s design

- On the Measure of Intelligence (80 pts) – Chollet’s ARC / “human‑like intelligence” manifesto

2023‑2026 papers predicted to hold up

Since it’s too early to know which recent papers will endure, I asked Claude to guess:

- DeepSeek‑R1 — Incentivizing Reasoning Capability in LLMs via RL (1,351 pts) – First open recipe for o1‑style reasoning via pure RL on verifiable rewards

- Generative Agents — Interactive Simulacra of Human Behavior (391 pts) – The canonical “Smallville” paper, template for LLM agent architectures

- The Era of 1‑bit LLMs — BitNet b1.58, ternary parameters for cost‑effective computing (1,040 pts) – First credible case for low‑bit inference as the default

- Differential Transformer (562 pts) – Attention with a noise‑cancelling term, clean architectural contribution with a real theoretical story

- LK‑99 cluster — room‑temperature superconductor preprints (2,408 + 1,690 pts) – Landmark meta‑science, not physics: open‑science‑at‑wire‑speed and the canonical case of crowdsourced replication

That was fun. Thanks, Claude.

Citation

BibTeX citation:

@online{castillo2026,

author = {Castillo, Dylan},

title = {LLM Research on {Hacker} {News} Is Drying Up},

date = {2026-04-24},

url = {https://dylancastillo.co/til/llm-research-on-hacker-news-is-dying.html},

langid = {en}

}For attribution, please cite this work as:

Castillo, Dylan. 2026. “LLM Research on Hacker News Is Drying Up.” April 24.