Google announces Gemini 3.1 Pro, says it's better at complex problem-solving

Source: Ars Technica

Overview

Google has released a new version of its flagship AI model, Gemini 3.1 Pro, now available in preview for developers and consumers. The update follows the November release of Gemini 3 and promises enhanced problem‑solving and reasoning capabilities. Google also announced improvements to its Deep Think tool, which now runs on Gemini 3.1 Pro.

Benchmark Results

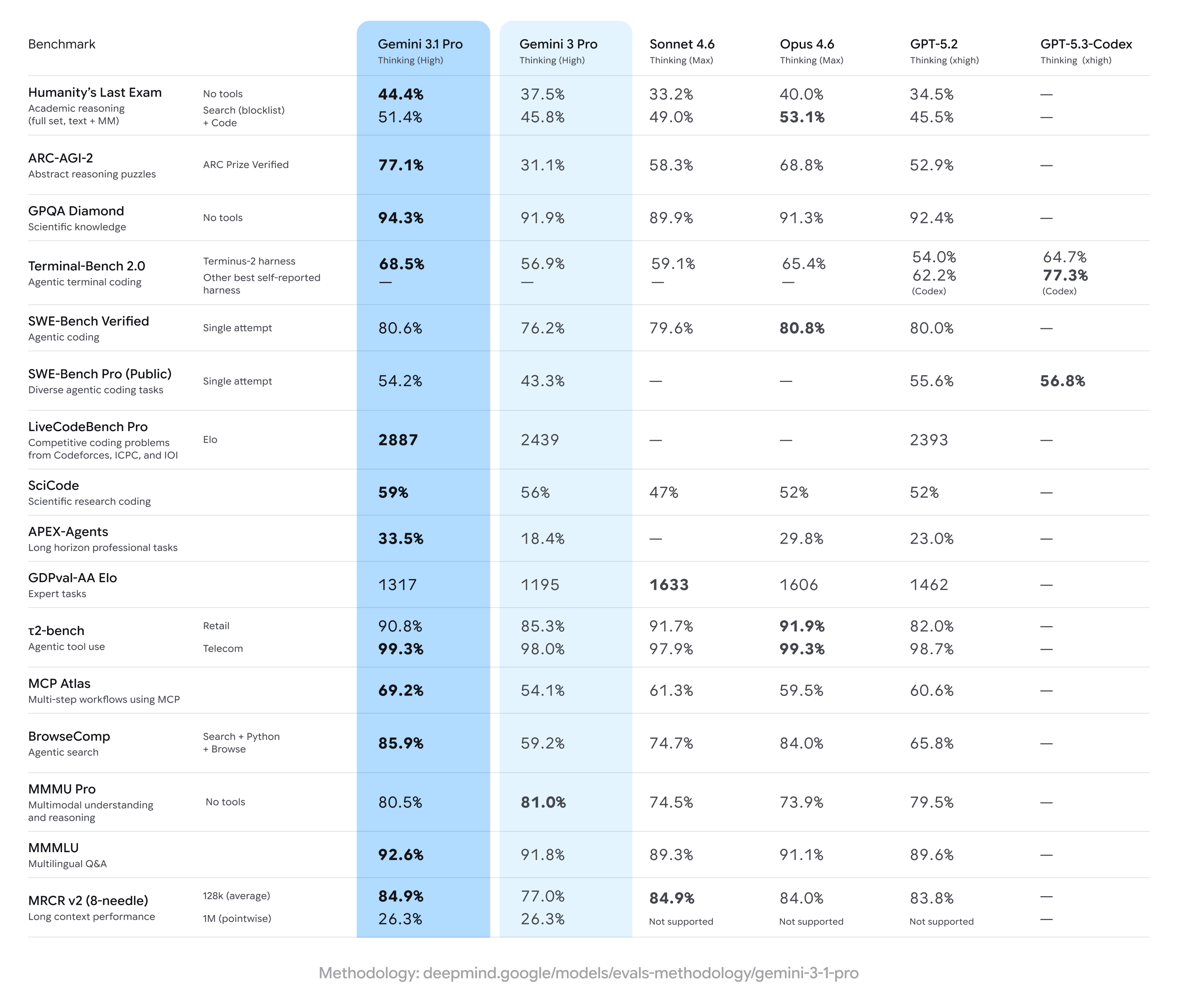

On Humanity’s Last Exam, a benchmark that tests advanced domain‑specific knowledge, Gemini 3.1 Pro achieved a record score of 44.4 %, surpassing Gemini 3 (37.5 %) and OpenAI’s GPT 5.2 (34.5 %).

Credit: Google

The model also showed a substantial improvement on the ARC‑AGI‑2 benchmark, which consists of novel logic problems that cannot simply be trained into a model ahead of time. Gemini 3 scored 31.1 %, while Gemini 3.1 Pro more than doubled that result to 77.1 %.
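To put the two generation-over-generation jumps side by side, the quoted scores can be turned into absolute and relative gains. The snippet below is only a small arithmetic sketch using the figures reported above; the `scores` layout and the `gain` helper are illustrative names, not anything published by Google.

```python
# Scores quoted in the article, in percent.
scores = {
    "Humanity's Last Exam": {"Gemini 3": 37.5, "Gemini 3.1 Pro": 44.4},
    "ARC-AGI-2": {"Gemini 3": 31.1, "Gemini 3.1 Pro": 77.1},
}


def gain(old, new):
    """Return (absolute gain in percentage points, ratio of new to old)."""
    return new - old, new / old


# Compare Gemini 3.1 Pro against Gemini 3 on each benchmark.
results = {
    name: gain(s["Gemini 3"], s["Gemini 3.1 Pro"]) for name, s in scores.items()
}

for name, (points, ratio) in results.items():
    print(f"{name}: +{points:.1f} points, {ratio:.2f}x the Gemini 3 score")
```

On these numbers the ARC‑AGI‑2 gain is far larger, both in percentage points and as a ratio, than the gain on Humanity’s Last Exam.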

Arena Leaderboard

Google often highlights its models’ positions on the Arena leaderboard (formerly LM Arena). This time, Gemini 3.1 Pro does not top the list:

- Text: Claude Opus 4.6 leads with a score of 1504, four points ahead of Gemini 3.1 Pro.

- Code: Claude Opus 4.6, Opus 4.5, and GPT 5.2 High all rank slightly above Gemini 3.1 Pro.

The Arena leaderboard is based on user votes, which can favor outputs that look correct even when they are not.
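For a rough sense of how small a four-point gap is, the sketch below converts a rating difference into an expected head-to-head win rate. It assumes Arena scores behave like Elo ratings on the conventional 400-point logistic scale; the article does not state this, so both the `expected_win_rate` helper and the scale are assumptions made for illustration.

```python
def expected_win_rate(rating_gap, scale=400.0):
    """Elo-style expected win rate for the higher-rated model.

    Assumes a base-10 logistic curve with a 400-point scale, which is
    the usual Elo convention and an assumption here, not a figure from
    the article.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


# Claude Opus 4.6's lead over Gemini 3.1 Pro in the Text category, per the article.
gap = 4
p = expected_win_rate(gap)
print(f"Expected win rate for the leader: {p:.1%}")
```

Under that assumption, a four-point lead corresponds to the leader being preferred only slightly more than half the time, which is close to a coin flip.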