You are going to get priced out of the best AI coding tools

Source: Hacker News

Introduction

Andy Warhol famously said:

“What’s great about this country is that the richest consumers buy essentially the same things as the poorest. You can be watching TV and see Coca‑Cola, and you know that the President drinks Coke, Liz Taylor drinks Coke, and just think, you can drink Coke, too.”

There was a time when everyone used GitHub Copilot: $10 / month, free for students. I used it, Andrej Karpathy used it, and high‑schoolers learning to code used it too.

Now the cheapest usable tier of Claude Code costs $100 / month. Below I outline a handful of short arguments for why the old state of affairs was temporary and why the best AI coding tools will become far more expensive.

Pricing Trends

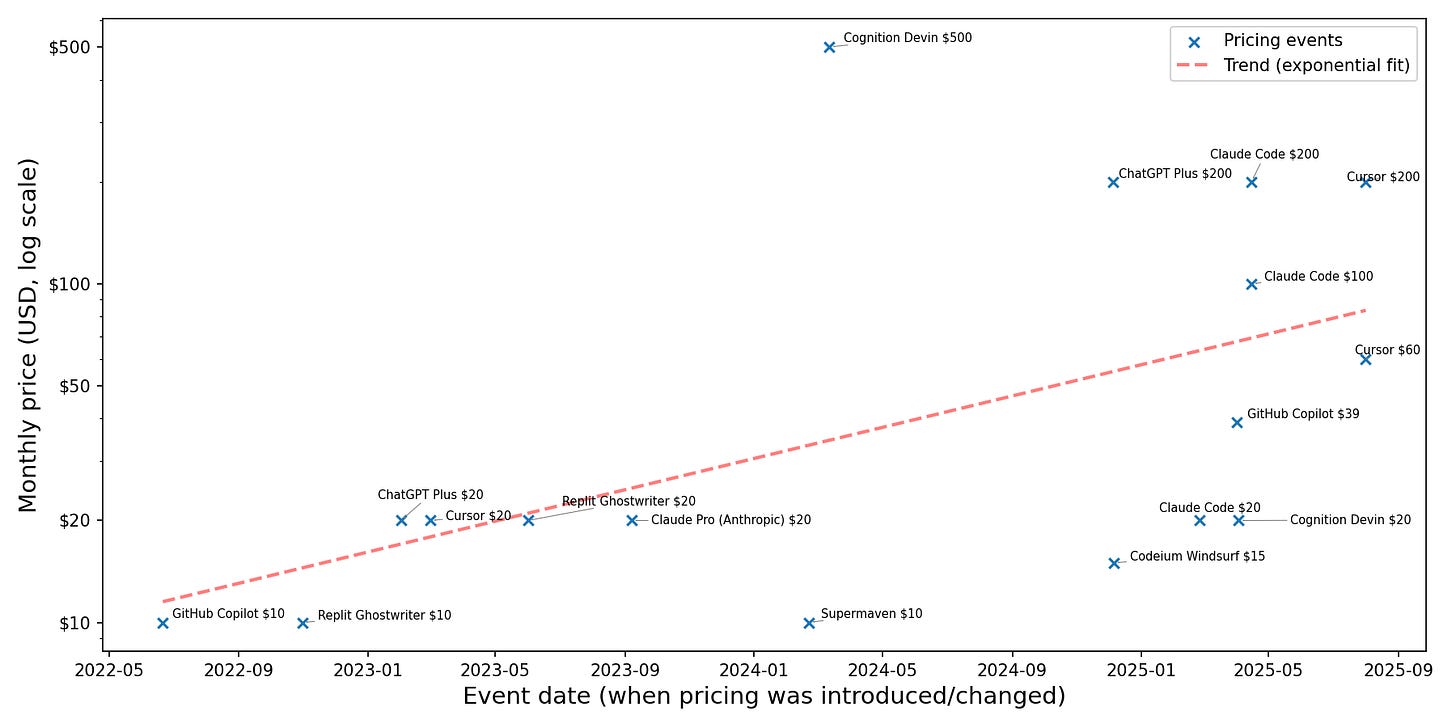

I plotted the tiered offerings of AI coding tools, and the result suggests an exponential trend in price. The plot has two caveats:

- The data is biased toward products I looked up.

- The higher‑ and lower‑pricing regimes appear to follow disjoint trends, so a straight‑line fit is imperfect.

Nevertheless, the upward trajectory is clear.
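To make the "exponential trend" claim concrete, here is a minimal sketch of how such a trend could be fitted: a least-squares line through log(price) against time, from which a doubling time follows. It assumes the plot's x-axis is time (for example, years since the first product launched). The function names and the data points are placeholders invented for illustration. They are not the data behind the plot above.

```python
import math

def fit_exponential(times, prices):
    """Least-squares fit of log(price) = a + b * t. Returns (a, b)."""
    logs = [math.log(p) for p in prices]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(logs) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, logs))
    b = sxy / sxx            # growth rate per unit of time
    a = y_mean - b * t_mean  # log of the fitted price at t = 0
    return a, b

def doubling_time(b):
    """Time needed for the fitted price to double, given growth rate b."""
    return math.log(2) / b

# Synthetic placeholder data (NOT the real data): a price that starts
# at 10 and doubles every time unit, so the fit should recover exactly that.
times = [0.0, 1.0, 2.0, 3.0]
prices = [10.0 * 2 ** t for t in times]

a, b = fit_exponential(times, prices)
print(f"fitted starting price: {math.exp(a):.2f}")
print(f"doubling time: {doubling_time(b):.2f} time units")
```

If the higher and lower price tiers really follow disjoint trends, fitting each regime separately with the same function would be a fairer summary than a single line.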

Furthermore, OpenAI reportedly discussed with investors charging $20 k / month for PhD‑level research agents (see The Information article). The report dates from March and no newer updates have appeared, so treat the claim with caution.

Why AI Coding Agents Remain Valuable

Large language models (LLMs) are unusual in that they started cheap and, when they can perform a task at all, often do it far more cheaply than humans (source). Measured by the number of lines of code produced, LLM coding agents deliver far more value than they cost (LessWrong post).

This creates an opportunity: a better product can use more compute, charge more, and generate higher margins.

Potential Revenue Drivers

- Continuous assistance – Users may pay extra for frontier LLMs that continuously run, comment, and fill in their work, delivering faster results.

- Improved information retrieval – ChatGPT often fails on challenging queries. A faster, research‑grade alternative (e.g., “Deep Research”) could command higher prices and stimulate demand (tweet).

- Parallel sampling – Running several model instances in parallel and selecting the best output improves metrics (e.g., Pass@K vs. Pass@1). The DeepSeek‑R1 paper reports a jump from 70 % (single query) to 86 % (64 queries with majority vote) on a math benchmark (arXiv).
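The parallel-sampling point bundles two related ideas that are worth separating: majority voting (submit the most common answer among K samples) and Pass@K (at least one of K samples is correct). The sketch below illustrates both, under two assumptions. For majority voting, answers can be compared as plain strings. For Pass@K, the code uses the standard combinatorial estimator computed from n samples of which c are correct. The function names and sample answers are hypothetical and are not taken from the DeepSeek‑R1 paper.

```python
from collections import Counter
from math import comb

def majority_vote(answers):
    """Return the most frequent answer among the sampled outputs."""
    return Counter(answers).most_common(1)[0][0]

def pass_at_k(n, c, k):
    """Estimate the chance that at least one of k samples is correct,
    given n total samples of which c were correct."""
    if n - c < k:
        # Fewer than k incorrect samples exist, so any k include a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical sampled answers to one math problem.
samples = ["42", "41", "42", "17", "42"]
voted = majority_vote(samples)
print("majority answer:", voted)

# With 3 correct answers out of 5 samples:
print("pass@1:", pass_at_k(5, 3, 1))
print("pass@3:", pass_at_k(5, 3, 3))
```

Either way, the cost scales roughly linearly with the number of samples, which is why this route to better results is also a route to higher prices.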

Industry Perspective

AI‑industry insider Nathan Lambert (reporting from The Curve) notes:

“Within 2 years a lot of academic AI research engineering will be automated with the top end of tools … I also expect academics to be fully priced out from these tools. … Labs will be spending $200 k+ per year per employee on AI tools (inference cost), but most consumers will be at tiers of $20 k or less due to compute scarcity.”

Counter‑Arguments: What Could Keep Costs Down?

- Competition – Rival labs or open‑source projects may push prices lower or develop cheaper alternatives.

- Subsidization – Labs have incentives to broaden adoption among high‑value users, potentially subsidizing costs for broader audiences.

- Hardware & algorithmic gains – Improvements in hardware supply and algorithmic efficiency could outpace demand growth.

- Diminishing returns on scaling inference – If RL‑based inference yields similar performance to pre‑training scaling (Pass@K ≈ Pass@1, i.e. drawing K samples barely beats drawing one), the economic incentive to increase compute may fade.

While none of these scenarios appear highly likely, they merit further research.