What Business Owners Thought AI Would Be, Why It Didn’t Work, And Why the Canonical Intelligence Layer (CIL) Changes Everything

Source: Dev.to

For most business leaders, the AI story began with a simple expectation:

“I want to ask my company a question and get a correct answer in seconds.”

- Not a document.

- Not a dashboard.

- Not a spreadsheet.

- An answer.

What followed instead was one of the biggest expectation gaps in modern enterprise technology.

Phase One: The AI Dream

When AI entered the mainstream, business owners imagined something close to a digital brain for their organization:

- Ask: “What was the ROI of our last Polpharma project?”

- Ask: “Which client segments are becoming unprofitable?”

- Ask: “Where are we exposed to regulatory risk right now?”

And receive:

- Correct

- Context‑aware

- Authorized

- Explainable

answers — instantly.

In short, they imagined organizational intelligence, not a chatbot.

This imagined system had a name long before AI was fashionable:

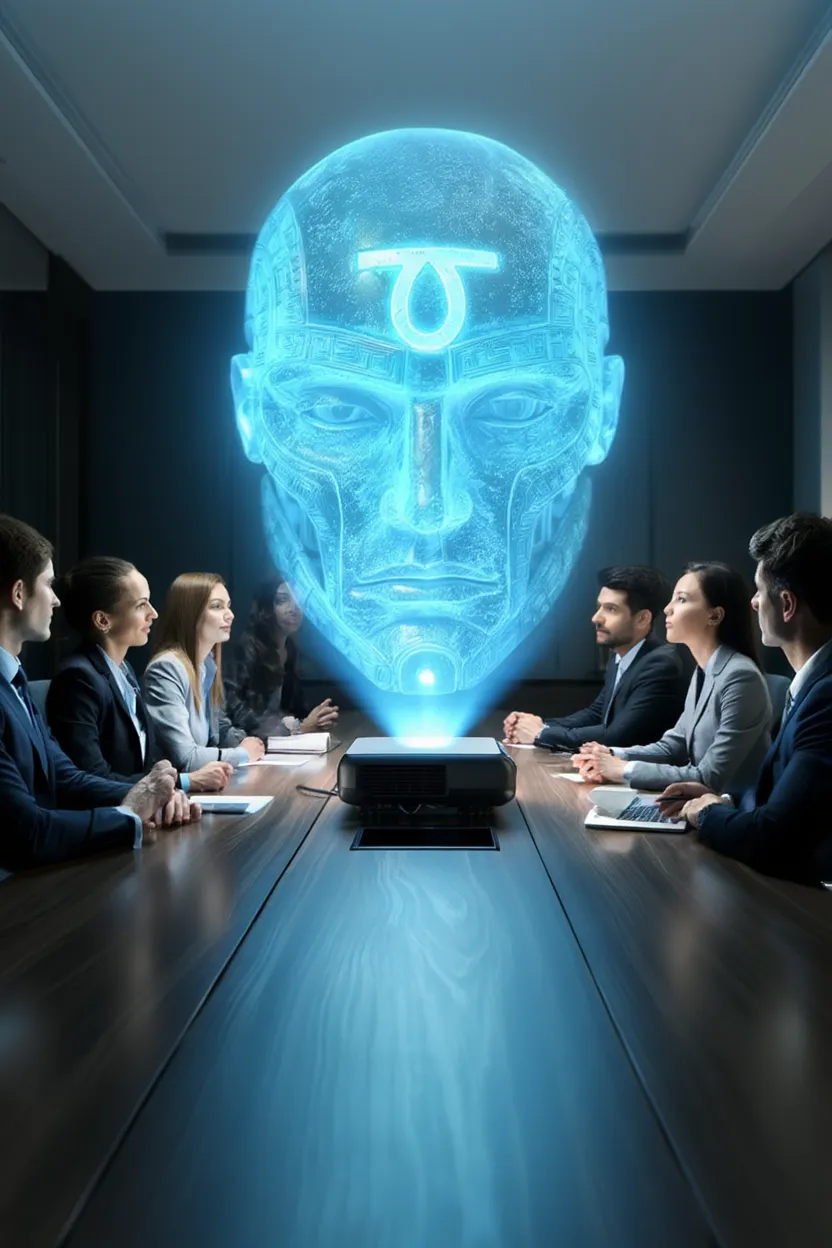

A Canonical Intelligence Layer (CIL) – a single, trusted interface to the company’s real knowledge.

Phase Two: The First Disappointment — “Let’s Add a Chatbot”

The first approach most companies tried was simple:

“Let’s put an AI chat interface on top of our data.”

They connected:

- Documents

- PDFs

- Emails

- CRM exports

- Dashboards

and asked the model to “answer questions”.

What they got

- Fluent responses

- Confident explanations

- Well‑written summaries

What they didn’t get

- Correctness guarantees

- Authorization control

- Accountability

- Consistency over time

The system could talk about the company, but it did not know the company.

Why it failed

- Language models optimize for coherence, not truth.

- They do not understand ownership, permissions, or authority.

- They cannot distinguish “available text” from “allowed knowledge”.

This wasn’t intelligence. It was narration.

Phase Three: The Second Disappointment — “Let’s Train Our Own Model”

After realizing third‑party AI couldn’t be trusted, many companies escalated:

“We’ll train our own LLM on internal data.”

They invested in:

- Fine‑tuning

- Embeddings

- Private clouds

- Vector databases

- Security wrappers

The result?

A more fluent, more company‑specific, but still unreliable system.

Why this also failed

- Training does not create authority.

- More data does not create governance.

- Fine‑tuning does not create accountability.

- Models still hallucinate — just with internal vocabulary.

The model learned how the company sounds, not how the company works.

Core mistake: trying to solve a knowledge‑architecture problem with a language‑optimization tool.

The Fundamental Misunderstanding

Business owners were never asking for better language. They were asking for:

- Decision‑grade answers

- Verifiable truth

- Organizational memory

- Controlled access

- Auditability

In other words: they wanted intelligence, not generation.

Language models are powerful interfaces — but they are not intelligence systems.

Enter the Canonical Intelligence Layer (CIL)

A CIL is not a model. It is an architecture.

What a CIL actually is

A Canonical Intelligence Layer is a system that:

- Holds canonical, governed company knowledge

- Understands who is allowed to know what

- Resolves questions against verified sources

- Enforces authorization before answering

- Produces answers with provenance

- Logs every decision for accountability
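
The properties listed above can be made concrete with a small sketch. This is a minimal illustration under stated assumptions, not TauGuard's implementation: the names `Fact`, `Answer`, `CanonicalLayer`, and `resolve` are hypothetical, access control is reduced to simple role membership, and the sample key, value, and source are made up for the demo.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Fact:
    """One governed record (hypothetical shape)."""
    key: str
    value: str
    source: str               # provenance: the system of record
    allowed_roles: frozenset  # who is allowed to know this


@dataclass(frozen=True)
class Answer:
    granted: bool
    value: Optional[str] = None
    source: Optional[str] = None


@dataclass
class CanonicalLayer:
    facts: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def register(self, fact: Fact) -> None:
        self.facts[fact.key] = fact

    def resolve(self, user: str, role: str, key: str) -> Answer:
        fact = self.facts.get(key)
        # Authorization is decided before any value leaves the layer.
        # Unknown keys and forbidden keys look the same to the caller.
        granted = fact is not None and role in fact.allowed_roles
        answer = Answer(True, fact.value, fact.source) if granted else Answer(False)
        # Every decision is logged, whether it was granted or refused.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user, "role": role, "key": key, "granted": granted,
        })
        return answer


# Illustrative data only; the key, value, and source are invented.
cil = CanonicalLayer()
cil.register(Fact("project_x.roi", "12.5%", "finance_ledger", frozenset({"finance"})))
print(cil.resolve("alice", "finance", "project_x.roi"))
print(cil.resolve("bob", "intern", "project_x.roi"))
print(len(cil.audit_log), "audit entries")
```

The answer is looked up, never generated, and it carries its source with it. A real system would need far richer policy, versioning, and storage than this sketch shows.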

In a CIL:

- Knowledge is structured

- Truth is defined

- Access is enforced

- Answers are assembled, not invented

Language models, if used at all, sit at the edge — translating verified outputs into human language.
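
One way to picture a model "at the edge" is as an optional phrasing step that is never allowed to alter the verified content. The sketch below is an assumption-laden illustration: `VerifiedAnswer`, `render`, and the `phraser` callback are hypothetical names, and the substring check is a deliberately crude stand-in for real output validation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class VerifiedAnswer:
    """Output of the governed layer (hypothetical shape)."""
    question: str
    value: str
    source: str


def template_phrasing(answer: VerifiedAnswer) -> str:
    # Deterministic fallback that needs no model at all.
    return f"{answer.question}: {answer.value} (source: {answer.source})"


def render(
    answer: VerifiedAnswer,
    phraser: Optional[Callable[[VerifiedAnswer], str]] = None,
) -> str:
    """Turn a verified answer into prose; the phraser may only reword it."""
    if phraser is None:
        return template_phrasing(answer)
    text = phraser(answer)
    # Accept the rewording only if the verified value and its source
    # survive verbatim; otherwise fall back to the template.
    if answer.value in text and answer.source in text:
        return text
    return template_phrasing(answer)


# Illustrative data only; a real phraser would wrap a language model call.
verified = VerifiedAnswer("ROI of project X", "12.5%", "finance_ledger")
faithful = lambda a: f"Per {a.source}, the figure is {a.value}."
unfaithful = lambda a: "The ROI was roughly 20%."
print(render(verified, faithful))
print(render(verified, unfaithful))
```

The design choice is that the model can change wording but not facts: if its output drops the verified value or the source, the system discards it and uses the plain template.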

Why This Finally Works

Because CIL aligns with how companies actually operate:

- Companies don’t run on text — they run on systems.

- They don’t trust fluency — they trust correctness, authority, and auditability.

- They optimize for risk reduction, not creativity.

- They want defensible answers, not “impressive” ones.

A CIL turns AI from a **confident storyteller** into a **governed enterprise intelligence system**.

The Real Shift: From AI as a Brain to AI as Infrastructure

The future of enterprise AI is not:

- bigger models

- more parameters

- more training data

It is:

- knowledge architecture

- governance runtimes

- controlled intelligence layers

- CIL‑style systems

This is why many AI projects felt powerful — but failed in production.

They were trying to install a Ferrari engine into a go‑kart and then make it “safe” by adding another engine.

What enterprises actually needed was a new vehicle design.

Final Thought

Business owners were not naïve. Their intuition was correct.

AI should be able to:

- answer company questions

- surface real knowledge

- operate in seconds

- reduce cognitive load

- increase decision quality

The mistake was assuming that language models alone could do that. They cannot.

A TauGuard Canonical Intelligence Layer (CIL) can.

And that’s the difference between:

- AI that sounds smart

- AI that earns trust