Two different tricks for fast LLM inference

Source: Hacker News

Fast Mode Showdown: Anthropic vs. OpenAI

Anthropic and OpenAI have both announced “fast mode,” a way to interact with their best coding models at significantly higher speeds.

These two versions of fast mode are very different. Anthropic’s offering provides up to 2.5× the tokens per second (≈ 170 t/s, up from Opus 4.6’s 65 t/s). OpenAI’s fast mode delivers more than 1 000 t/s (up from GPT‑5.3‑Codex’s 65 t/s, i.e., ≈ 15×). Consequently, OpenAI’s fast mode is roughly six times faster than Anthropic’s [1].
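The ratios quoted above can be checked with a few lines of arithmetic. This is only a sketch using the tokens-per-second figures from this post; the constant and function names are mine, chosen for illustration.

```python
# Tokens-per-second figures as quoted in this post.
OPUS_BASE_TPS = 65
OPUS_FAST_TPS = 170
CODEX_BASE_TPS = 65
CODEX_FAST_TPS = 1000


def speedup(fast_tps: float, base_tps: float) -> float:
    """Return how many times faster fast_tps is than base_tps."""
    return fast_tps / base_tps


anthropic_gain = speedup(OPUS_FAST_TPS, OPUS_BASE_TPS)   # about 2.6
openai_gain = speedup(CODEX_FAST_TPS, CODEX_BASE_TPS)    # about 15.4
head_to_head = speedup(CODEX_FAST_TPS, OPUS_FAST_TPS)    # about 5.9

print(f"Anthropic fast mode: {anthropic_gain:.1f}x")
print(f"OpenAI fast mode:    {openai_gain:.1f}x")
print(f"OpenAI vs Anthropic: {head_to_head:.1f}x")
```

So "2.5×", "15×" and "six times" are all rounded versions of what the raw numbers give.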

Key distinction: Anthropic serves the actual Opus 4.6 model, whereas OpenAI’s fast mode runs the smaller GPT‑5.3‑Codex‑Spark model—not the full‑size GPT‑5.3‑Codex. Spark is faster but noticeably less capable; it can get confused and mishandle tool calls that the vanilla model would never botch.

Why the differences?

The labs haven’t disclosed the exact mechanics of their fast modes, but a plausible explanation is:

- Anthropic: low‑batch‑size inference.

- OpenAI: special, massive Cerebras chips.

Below is a deeper dive into each approach.

How Anthropic’s fast mode works

The core trade‑off in AI inference economics is batching, because the main bottleneck is memory. GPUs are fast, but moving data onto a GPU is not. Every inference request must copy the entire prompt onto the GPU before computation can start [2]. Batching multiple users together raises overall throughput, at the cost of making each user wait for the batch to fill.

Analogy – a bus system

Zero batching is like a bus that departs the moment a passenger boards. The passenger’s commute is swift, but overall throughput suffers because the bus runs nearly empty.

Anthropic’s fast mode is essentially a “bus pass” that guarantees the bus leaves immediately once you board. It costs about six times more because you’re paying for the capacity that could have been shared with other passengers, but you enjoy zero waiting time [3].

Edit: A reader pointed out that the “waiting for the bus” cost applies only to the first token, so it doesn’t affect streaming latency (only latency per turn or tool call). Thus, the performance impact of batch size is mainly that smaller batches require fewer FLOPs and finish faster. In the analogy, think of it as “lighter buses drive faster.”

I can’t be 100 % certain of this explanation. Anthropic might have access to ultra‑fast compute or a novel algorithmic trick. However, new compute or algorithmic tricks would likely require changes to the model (as with OpenAI’s Spark), and Anthropic is serving the actual Opus 4.6. A low‑batch‑size regime, by contrast, needs no model changes and can explain the observed “six‑times‑more‑expensive for 2.5×‑faster” ratio.
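To make the “lighter buses drive faster” idea concrete, here is a toy model of a decoding step. It is a sketch under loud assumptions: step time is a fixed cost plus a per-sequence cost, hardware costs the same per second at any batch size, and every constant (`weight_time_ms`, `per_seq_time_ms`, the two batch sizes) is hypothetical, picked only so that the ratios land on the figures quoted in this post. Nothing here is a measurement of anyone’s real serving stack, and the absolute tokens-per-second values it produces are not meaningful.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyDecoder:
    """Toy model of one decoding step. All constants are hypothetical."""

    weight_time_ms: float   # fixed cost per step (e.g. reading model weights)
    per_seq_time_ms: float  # extra cost per sequence in the batch

    def step_time_ms(self, batch_size: int) -> float:
        return self.weight_time_ms + self.per_seq_time_ms * batch_size

    def per_user_tps(self, batch_size: int) -> float:
        # Every user in the batch receives one token per step.
        return 1000.0 / self.step_time_ms(batch_size)

    def total_tps(self, batch_size: int) -> float:
        return batch_size * self.per_user_tps(batch_size)

    def relative_cost_per_token(self, batch_size: int) -> float:
        # Assumes the hardware costs the same per second at any batch size,
        # so cost per token is proportional to 1 / total throughput.
        return 1.0 / self.total_tps(batch_size)


# Hypothetical constants, picked so the ratios match the post's figures.
gpu = ToyDecoder(weight_time_ms=10.0, per_seq_time_ms=0.3)
NORMAL_BATCH, FAST_BATCH = 60, 4

speed_gain = gpu.per_user_tps(FAST_BATCH) / gpu.per_user_tps(NORMAL_BATCH)
cost_gain = (gpu.relative_cost_per_token(FAST_BATCH)
             / gpu.relative_cost_per_token(NORMAL_BATCH))

print(f"per-user speed: {speed_gain:.1f}x faster")
print(f"cost per token: {cost_gain:.1f}x higher")
```

In this model the cost ratio multiplied by the speed ratio always equals the batch-size ratio, whatever constants you pick. Under these assumptions, “6× the price for 2.5× the speed” would correspond to a batch roughly 15× smaller. It also shows why per-user throughput can rise while overall GPU throughput falls.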

How OpenAI’s fast mode works

OpenAI’s fast mode is fundamentally different. The fact that they introduced a new, less capable model (Spark) signals that the speed gain isn’t just a batch‑size tweak. Their announcement blog post explicitly states the backing hardware: Cerebras [4].

Cerebras partnership

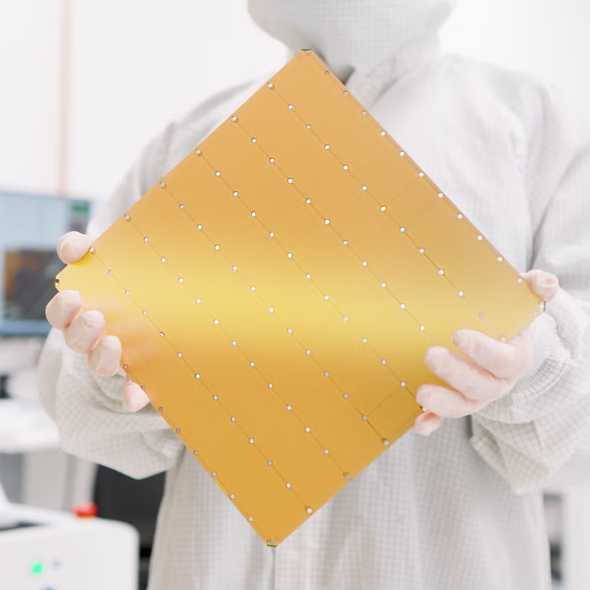

OpenAI announced its partnership with Cerebras in January 2026, about a month before launching fast mode. Cerebras builds “ultra‑low‑latency compute” by fabricating giant chips. While an NVIDIA H100 occupies just over a square inch, a Cerebras wafer‑scale engine spans ≈ 70 in².

These chips have massive on‑chip SRAM, allowing the entire model to reside in on‑chip memory. Typical GPU SRAM is only tens of megabytes, forcing frequent weight streaming from slower DRAM during inference [5]. With enough SRAM, the weights never leave the chip, which plausibly accounts for the observed ≈ 15× speedup.

The latest Cerebras wafer‑scale engine offers 44 GB of SRAM [6]. This is sufficient for a small model (~20 B parameters at fp16, ~40 B at int8), but far too small for the full GPT‑5.3‑Codex. Hence, OpenAI introduced GPT‑5.3‑Codex‑Spark, a distilled version of the larger model [7].
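The sizing above is just parameters times bytes per parameter. A minimal sketch, assuming the 44 GB figure quoted here and counting weights only (KV‑cache and activations also need room, which this ignores); the helper names are mine:

```python
SRAM_BYTES = 44e9  # 44 GB of on-chip SRAM, the figure quoted in this post

BYTES_PER_PARAM = {"fp16": 2, "int8": 1}


def weight_bytes(params: float, dtype: str) -> float:
    """Bytes needed to store the weights alone."""
    return params * BYTES_PER_PARAM[dtype]


def fits_on_chip(params: float, dtype: str, sram_bytes: float = SRAM_BYTES) -> bool:
    """True if the weights alone fit in the given amount of SRAM."""
    return weight_bytes(params, dtype) <= sram_bytes


def max_params(dtype: str, sram_bytes: float = SRAM_BYTES) -> float:
    """Largest parameter count whose weights fit, ignoring everything else."""
    return sram_bytes / BYTES_PER_PARAM[dtype]


for params, dtype in [(20e9, "fp16"), (40e9, "int8"), (40e9, "fp16")]:
    gb = weight_bytes(params, dtype) / 1e9
    verdict = "fits" if fits_on_chip(params, dtype) else "does not fit"
    print(f"{params / 1e9:.0f}B params at {dtype}: {gb:.0f} GB, {verdict}")
```

The hard ceiling is 22 B parameters at fp16 or 44 B at int8, so the ~20 B and ~40 B estimates leave a few gigabytes of headroom.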

OpenAI’s version is technically impressive

The two labs have taken very different engineering paths to achieve fast inference:

| Aspect | Anthropic | OpenAI |
|---|---|---|
| Speed gain | ≈ 2.5× tokens/s (≈ 170 t/s) | ≈ 15× tokens/s (≈ 1 000 t/s) |
| Model used | Full Opus 4.6 (no distillation) | Distilled GPT‑5.3‑Codex‑Spark |
| Primary technique (presumed) | Low‑batch‑size inference | Cerebras wafer‑scale engine (massive on‑chip memory) |
| Cost | ~6× higher per token | Not covered in this post |
| Trade‑off | Higher price; lower overall GPU throughput | Reduced model capability |

Both approaches have merit. Anthropic’s method preserves model quality and buys per‑user speed with smaller batches, at a higher price. OpenAI’s hardware‑centric strategy raises per‑user speed far more dramatically, at the expense of model size and capability.


As for why the two fast modes were announced within days of each other, my guess is that it went something like this:

- OpenAI partners with Cerebras in mid‑January, obviously to work on putting an OpenAI model on a fast Cerebras chip.

- Anthropic has no similar play available, but they know OpenAI will announce some kind of blazing‑fast inference in February, and they want to have something in the news cycle to compete with that.

- Anthropic therefore hustles to put together the kind of fast inference they can provide: simply lowering the batch size on their existing inference stack.

- Anthropic (probably) waits until a few days before OpenAI are done with their much more complex Cerebras implementation to announce it, so it looks like OpenAI copied them.

Obviously OpenAI’s achievement here is more technically impressive. Getting a model running on Cerebras chips is not trivial because the hardware is so unusual. Training a 20 B‑ or 40 B‑parameter distil of GPT‑5.3‑Codex that is still “kind‑of‑good‑enough” is also non‑trivial.

But I commend Anthropic for finding a sneaky way to get ahead of the announcement that will be largely opaque to non‑technical people. It reminds me of OpenAI’s sneaky mid‑2025 introduction of the Responses API to help them conceal their reasoning tokens [8].

Is fast AI inference the next big thing?

Seeing the two major labs put out this feature might make you think that fast AI inference is the new major goal they’re chasing. I don’t think it is. If my theory above is right, Anthropic doesn’t care that much about fast inference; they just didn’t want to appear behind OpenAI. And OpenAI is mainly exploring the capabilities of its new Cerebras partnership.

It’s still largely an open question what kind of models can fit on these giant chips, how useful those models will be, and whether the economics will ever make sense.

I personally don’t find “fast, less‑capable inference” particularly useful. I’ve been playing around with it in Codex and I don’t like it. The usefulness of AI agents is dominated by how few mistakes they make, not by their raw speed. Buying 6× the speed at the cost of 20 % more mistakes is a bad bargain, because most of the user’s time is spent handling mistakes instead of waiting for the model [9].
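Here is a back-of-the-envelope version of that bargain. It is a sketch with entirely hypothetical numbers (10 minutes of model time per task, 5 mistakes per task, 10 minutes to handle each one); only the “6× speed” and “20 % more mistakes” come from the paragraph above.

```python
def task_minutes(model_minutes: float, speedup: float,
                 mistakes: float, mistake_factor: float,
                 minutes_per_mistake: float) -> float:
    """Total wall-clock time for one task: waiting plus handling mistakes."""
    waiting = model_minutes / speedup
    fixing = mistakes * mistake_factor * minutes_per_mistake
    return waiting + fixing


# Hypothetical workload: 10 min of model time, 5 mistakes at 10 min each.
baseline = task_minutes(10, speedup=1, mistakes=5,
                        mistake_factor=1.0, minutes_per_mistake=10)
fast = task_minutes(10, speedup=6, mistakes=5,
                    mistake_factor=1.2, minutes_per_mistake=10)

print(f"baseline model: {baseline:.1f} min per task")
print(f"fast model:     {fast:.1f} min per task")
```

With these numbers the fast model saves about 8 minutes of waiting but adds 10 minutes of mistake handling, so the task takes longer overall. The conclusion flips if waiting dominates the workload, which is why the split between waiting and fixing matters more than the raw speedup.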

However, it’s certainly possible that fast, less‑capable inference becomes a core lower‑level primitive in AI systems. Claude Code already uses Haiku for some operations [10]. Maybe OpenAI will end up using Spark in a similar way.

A few technical side‑notes

- The data copied onto the GPU is either the KV‑cache for previous tokens, or a large tensor of intermediate activations if inference is being pipelined through multiple GPUs. I write a lot more about this in “Why DeepSeek is cheap at scale but expensive to run locally”, which explains why DeepSeek can be offered at such cheap prices (massive batches allow an economy of scale on giant, expensive GPUs, but individual consumers can’t access that at all) [11].

- Is it a contradiction that low‑batch‑size means low throughput, but this “fast‑pass” system gives users much greater throughput? No. The overall throughput of the GPU is much lower when some users are using “fast mode”, but those users’ per‑user throughput is much higher [12].

- Remember, GPUs are fast, but copying data onto them is not. Each “copy these weights to GPU” step is a meaningful part of the overall inference time [9].

- The “fast” model could also be a smaller distil of whatever more powerful base model GPT‑5.3‑Codex was itself distilled from. I don’t know how AI labs do it exactly, and they keep it very secret. More on that here [13].

- On a related note, it’s interesting that Cursor’s hype dropped away basically at the same time they released their own “much faster, a little less‑capable” agent model [14]. Of course, much of this is due to Claude Code sucking up all the oxygen in the room, but having a very fast model certainly didn’t help.


Footnotes

- [1] OpenAI’s fast mode (≈ 1 000 t/s) is roughly six times faster than Anthropic’s (≈ 170 t/s).

- [2] Each inference request must copy the entire prompt onto the GPU before computation can begin.

- [3] The “six‑times‑cost” reflects paying for the capacity that could have been shared with other users in a batch.

- [4] OpenAI’s blog post on the Cerebras partnership.

- [5] GPU SRAM is typically measured in tens of megabytes; streaming weights from DRAM adds latency.

- [6] Cerebras Wafer‑Scale Engine (WSE) SRAM capacity: 44 GB.

- [7] Spark is a distilled version of GPT‑5.3‑Codex, designed to fit within Cerebras’ on‑chip memory constraints.

- [8] See Sean Goedecke’s discussion of the Responses API.

- [10] Haiku issue comment.

- [11] See the DeepSeek article referenced above.

- [12] Explanation of per‑user throughput versus overall GPU throughput.

- [13] Discussion of model distillation practices.

- [14] Cursor’s fast, less‑capable agent model rollout.