NVIDIA Launches Nemotron 3 Nano Omni Model, Unifying Vision, Audio and Language for up to 9x More Efficient AI Agents

Source: NVIDIA AI Blog

NVIDIA Nemotron 3 Nano Omni – A Unified Multimodal Model

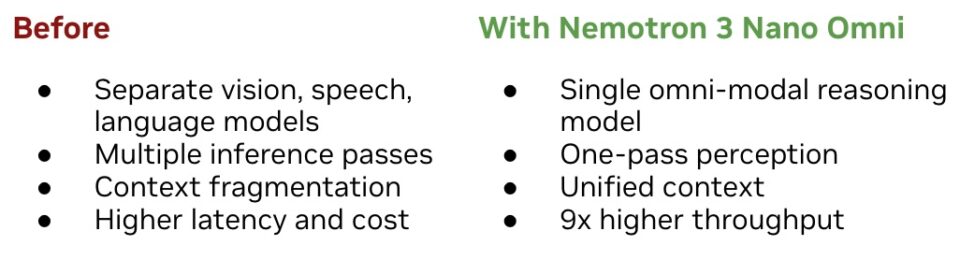

AI agents today often rely on separate models for vision, speech, and language, losing time and context as data hops between them.

Nemotron 3 Nano Omni (announced today) is an open, multimodal model that unifies video, audio, image, and text processing in a single system. This enables agents to:

- Respond faster and more intelligently

- Perform advanced reasoning across modalities

- Reduce latency and cost while maintaining high accuracy

Why It Matters

- Efficiency frontier: Sets a new benchmark for open multimodal models with leading accuracy at low cost.

- Leaderboard leader: Tops six leaderboards for complex document intelligence, video, and audio understanding – see the full list in the NVIDIA blog post.

- Production‑ready: Offers enterprises and developers a clear path to deploy efficient, accurate multimodal AI agents with full control over the stack.

Early Adopters

| Company / Organization | Use Case / Note |
|---|---|
| Aible | Nemotron 3 Nano Omni AI Agent |
| Applied Scientific Intelligence (ASI) | Scientific AI literature agent |
| Eka Care | Multimodal healthcare agent for India-scale patient care |
| Foxconn | Not specified |
| H Company | Holotron 3 |
| Palantir | Not specified |
| Pyler | Scaling trustworthy video safety |
| Dell Technologies | Not specified |
| DocuSign | Not specified |
| Infosys | Not specified |
| K-Dense | Multimodal agentic science |
| Lila | Not specified |
| Oracle | Not specified |
| Zefr | Evaluating Nemotron 3 Nano Omni for cognition AI |

Customer Quote

“To build useful agents, you can’t wait seconds for a model to interpret a screen,” said Gautier Cloix, CEO of H Company.

“By building on Nemotron 3 Nano Omni, our agents can rapidly interpret full‑HD screen recordings — something that wasn’t practical before. This isn’t just a speed boost: it’s a fundamental shift in how our agents perceive and interact with digital environments in real time.”

Nemotron 3 Nano Omni provides a single, efficient foundation for next‑generation multimodal AI agents, delivering speed, accuracy, and flexibility across video, audio, image, and text.

Nemotron 3 Nano Omni Enables Faster, Leaner Multimodal Agents

Modern AI agents often need to process vision, speech, and language together—for example, a customer‑support bot that watches a screen recording, listens to call audio, and checks data logs, or a finance assistant that parses PDFs, spreadsheets, charts, and voice notes.

Most current systems use separate models for each modality, which leads to:

- Higher latency from multiple inference passes

- Fragmented context across modalities

- Higher cost and cumulative inaccuracies
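The latency and accuracy penalties listed above can be made concrete with a little arithmetic. The sketch below is a simplified model, not a measurement: it assumes the modality-specific stages run one after another, that each hand-off adds a fixed overhead, and that stage errors are independent. The function names and all numbers are hypothetical and chosen only for illustration.

```python
from math import prod


def chained_latency_ms(stage_latencies_ms, handoff_ms):
    """Total latency when each modality runs in its own model, back to back.

    Assumes one fixed hand-off cost between consecutive stages.
    """
    handoffs = max(len(stage_latencies_ms) - 1, 0)
    return sum(stage_latencies_ms) + handoffs * handoff_ms


def chained_accuracy(stage_accuracies):
    """End-to-end accuracy if every stage must be correct and errors are independent."""
    return prod(stage_accuracies)


# Hypothetical figures for a speech -> vision -> language pipeline.
stages_ms = [120.0, 200.0, 150.0]
print(chained_latency_ms(stages_ms, handoff_ms=30.0))   # 530.0
print(round(chained_accuracy([0.95, 0.95, 0.95]), 3))   # 0.857
```

Under these assumptions, three stages that are each 95% accurate yield roughly 86% end-to-end accuracy, and the hand-offs alone add 60 ms. A single model removes the hand-off term and the compounding, although its own latency and accuracy still have to be measured.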

Why Nemotron 3 Nano Omni is different

- Hybrid Mixture‑of‑Experts (MoE) architecture – 30 B‑parameter base with an additional 3 B‑parameter expert layer that jointly encodes vision and audio.

- Single perception model – eliminates the need for distinct vision, speech, and language back‑ends.

- 9× higher throughput than other open‑source omni‑models at comparable interactivity levels, according to the Hugging Face blog.

The result: lower inference cost, better scalability, and no sacrifice in responsiveness or quality.
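For readers unfamiliar with Mixture-of-Experts, the sketch below shows the general idea of top-k expert routing: a gate scores the experts for each input, and only the best-scoring few are evaluated. This is a generic, textbook-style illustration in plain Python. It is not Nemotron 3 Nano Omni's actual router, whose details are not described here, and every name in it (`route_top_k`, `moe_forward`, the toy experts) is hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    # Renormalize the gate scores over the selected experts only.
    weights = softmax([gate_scores[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x, experts, gate_scores, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))


# Toy experts: each is a scalar function standing in for a feed-forward block.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
gate_scores = [0.1, 2.0, 2.0, -1.0]  # hypothetical gate output for one token

print(route_top_k(gate_scores, k=2))           # [(1, 0.5), (2, 0.5)]
print(moe_forward(3.0, experts, gate_scores))  # 7.5
```

Because only `k` of the experts are evaluated per input, compute per token scales with the active parameters and not with the total parameter count, which is the usual reason MoE models are cheaper to serve.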

How it fits into agentic workflows

Nemotron 3 Nano Omni can be paired with:

| Companion model | Typical role |
|---|---|
| Nemotron 3 Super | High-frequency, low-latency execution |
| Nemotron 3 Ultra | Complex planning and reasoning |
| Proprietary cloud models | Specialized downstream tasks |

Together they power sub‑agents for:

- Computer‑use agents – Perception loop for UI navigation, on‑screen reasoning, and state tracking.

  - Example: H Company’s computer‑use agent (1920 × 1080 native resolution) achieved a large jump on the OSWorld benchmark by leveraging high‑resolution visual reasoning.

- Document intelligence – Unified understanding of PDFs, charts, tables, screenshots, and mixed‑media inputs, essential for enterprise analysis and compliance.

- Audio‑video understanding – Maintains a single reasoning stream that ties together spoken words, visual cues, and documented information for customer‑service, research, and monitoring pipelines.
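The division of labor described above, with Omni handling perception while companion models handle execution and planning, can be sketched as a small task router. This is a minimal sketch under stated assumptions: the task kinds, the `Task` type, and the model labels are hypothetical placeholders and not real model identifiers or an NVIDIA API.

```python
from dataclasses import dataclass

# Roles follow the pairing described in the text.
# The keys and model labels are hypothetical placeholders.
ROUTES = {
    "perception": "nemotron-3-nano-omni",  # screens, documents, audio, video
    "execution": "nemotron-3-super",       # high-frequency, low-latency steps
    "planning": "nemotron-3-ultra",        # complex planning and reasoning
}


@dataclass
class Task:
    kind: str
    payload: str


def pick_model(task: Task) -> str:
    """Return the model label registered for this task kind."""
    try:
        return ROUTES[task.kind]
    except KeyError:
        raise ValueError(f"no model registered for task kind {task.kind!r}") from None


# One hypothetical turn of a computer-use agent loop.
steps = [
    Task("perception", "screen_recording.mp4"),
    Task("planning", "decide the next UI action"),
    Task("execution", "click the Submit button"),
]
for step in steps:
    print(step.kind, "->", pick_model(step))
```

A real agent framework would replace the string labels with client objects and add retries and fallbacks. The point here is only that perception requests go to a single multimodal model instead of fanning out to separate vision and speech back-ends.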


Open and Customizable, Deployable Anywhere

Nemotron 3 Nano Omni is released with open weights, datasets, and training techniques—giving organizations full transparency and control over how the model is customized and deployed.

Developers can use tools like NVIDIA NeMo for customization, evaluation, and optimization for domain‑specific use cases. Because the Nemotron family of models is open, organizations can deploy them in environments that meet regulatory, sovereignty, or data‑localization requirements.

The Nemotron 3 family—including Nano, Super, and Ultra models—has seen over 50 million downloads in the past year. Omni extends the family’s capabilities into multimodal and agentic domains.

The model can be accessed through a broad ecosystem of NVIDIA Cloud Partners, inference platforms, and cloud service providers.

Deployment Options

Nemotron 3 Nano Omni’s open, lightweight architecture supports consistent deployment across:

- Local systems – e.g., NVIDIA Jetson hardware

- Workstations – e.g., NVIDIA DGX Spark and DGX Station

- Data‑center and cloud environments

Learn More

- NVIDIA technical blog – tutorials, cookbooks, and deployment guides

Stay up‑to‑date on agentic AI, NVIDIA Nemotron, and more by:

- Subscribing to NVIDIA news

- Joining the NVIDIA developer community

- Following NVIDIA AI on social media

Explore, customize, and deploy Nemotron 3 Nano Omni wherever your workloads demand the highest flexibility and control.