Memory makers are set to earn $551 billion from the AI boom, twice as much as contract chip manufacturers — forecasts suggest that 2026 revenue will skyrocket thanks to data center demand

Source: Tom’s Hardware

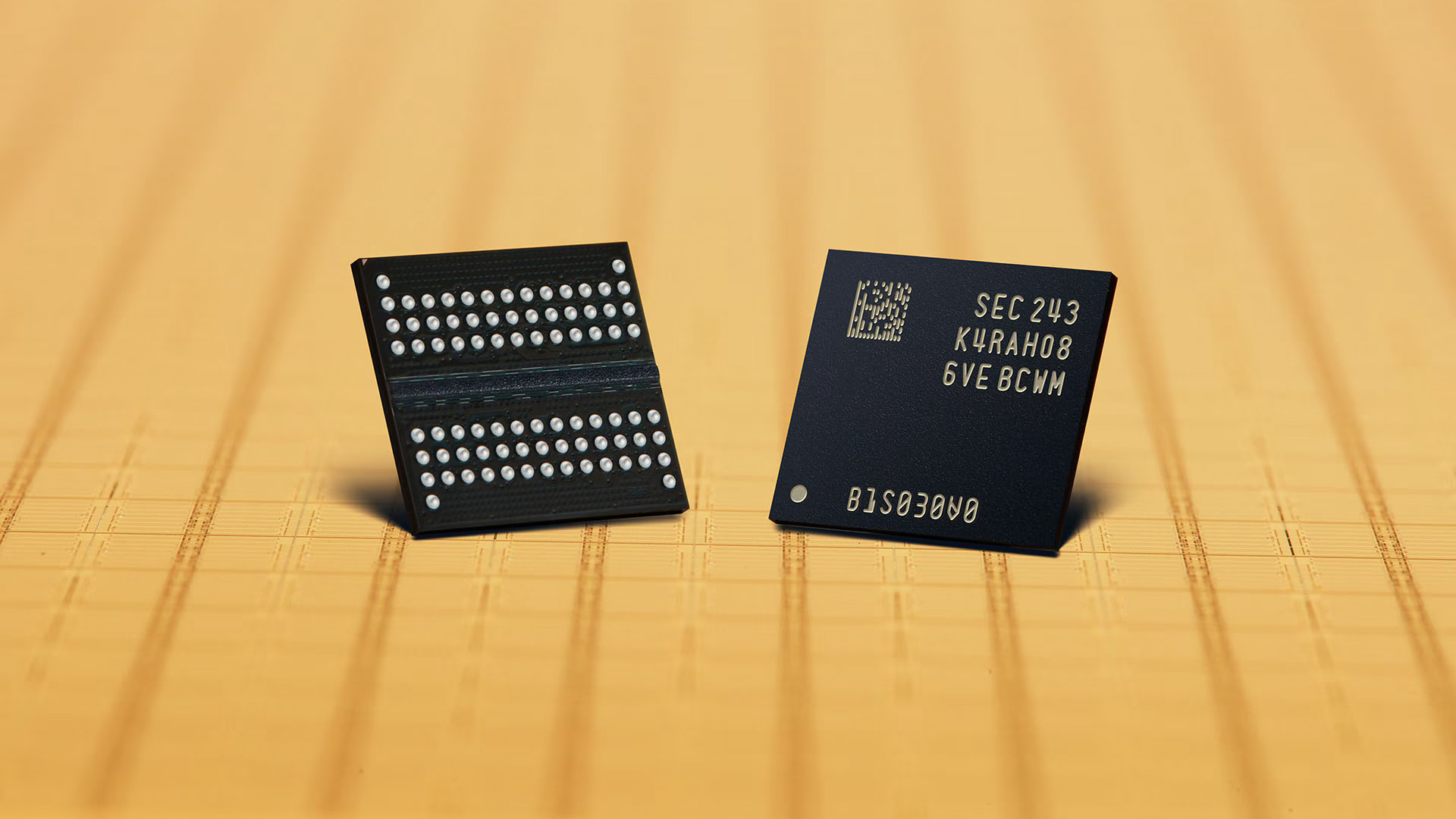

Image credit: Samsung

The artificial intelligence supercycle is reshaping the semiconductor and electronics industries, as the scale of the AI infrastructure buildout strains the entire supply chain. While developers of AI accelerators such as Nvidia are cashing in on the boom, memory makers stand to earn the most, according to TrendForce estimates. The gap reflects differences in business models, expansion strategies, and commodity-market dynamics.

Demand outstrips supply

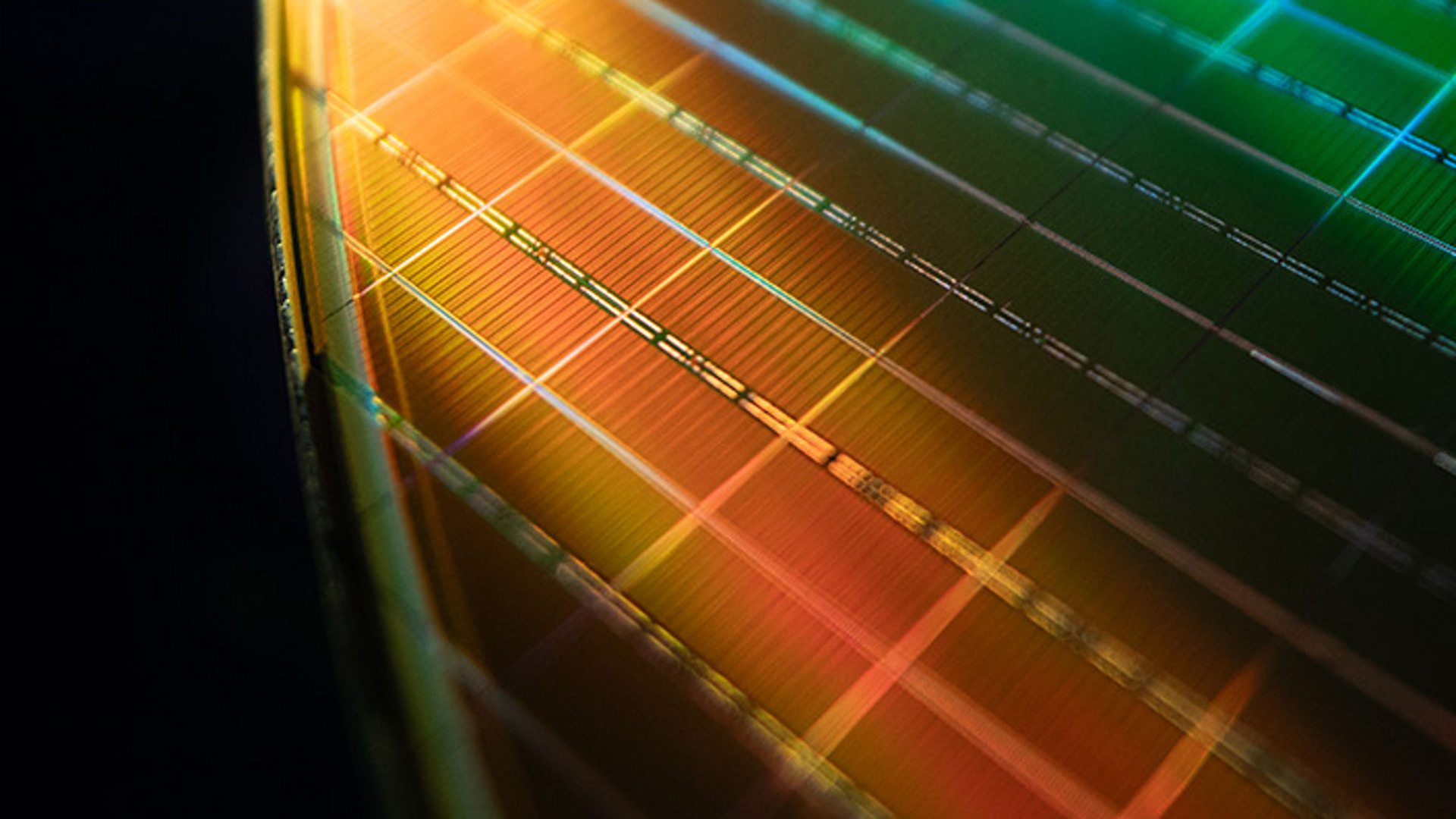

Image credit: Micron

The spot price of a 16 Gb DDR5 chip on DRAMeXchange averaged $38, with a daily high of $53 and a daily low of $25. A year earlier, the same chip averaged $4.75 (low $3.70, high $6.60), which means the average price has risen roughly eightfold. 3D NAND prices have spiked similarly in recent quarters.
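The size of the jump is easier to judge as a ratio. The short Python sketch below divides each quoted spot-price figure by its year-earlier counterpart; the figures are the DRAMeXchange numbers cited above, while the dictionary and function names are illustrative only.

```python
# Spot prices (USD) for a 16 Gb DDR5 chip, as quoted in the text.
current = {"average": 38.00, "high": 53.00, "low": 25.00}
year_earlier = {"average": 4.75, "high": 6.60, "low": 3.70}


def price_multiples(now: dict[str, float], before: dict[str, float]) -> dict[str, float]:
    """Return how many times higher each current price is than a year earlier."""
    return {key: now[key] / before[key] for key in now}


multiples = price_multiples(current, year_earlier)
for key, ratio in multiples.items():
    print(f"{key:>7}: {ratio:.1f}x")
```

Run as-is, it reports about 8.0x for both the average and the daily high and about 6.8x for the daily low.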

Fundamental differences

TrendForce labels the AI megatrend a “supercycle,” highlighting its ubiquity across industries and its potential longevity.

Memory makers have seen two earlier periods of back-to-back annual revenue growth:

- 2017-2018: hyperscalers expanded their data centers, and memory revenue grew 62% in 2017 and 27% in 2018

- 2020-2021: pandemic-driven PC purchases

In both cases, capacity expansions were followed by sharp revenue drops in the subsequent years (2019, 2022).

Foundries are far more capital-intensive and rely on fabs that take longer to build. Unlike memory makers, they saw a year-over-year revenue decline only in 2023.

Today, leading AI developers need powerful clusters for training, driving demand for cutting‑edge hardware with expensive HBM3E memory and ample storage. Inference workloads also require robust CPUs, accelerators, memory, and storage, keeping demand high. Cloud service providers (CSPs) are relatively price‑insensitive, allowing 3D NAND and DRAM suppliers to raise average selling prices more aggressively than in past cycles.

Foundry vs. commodity

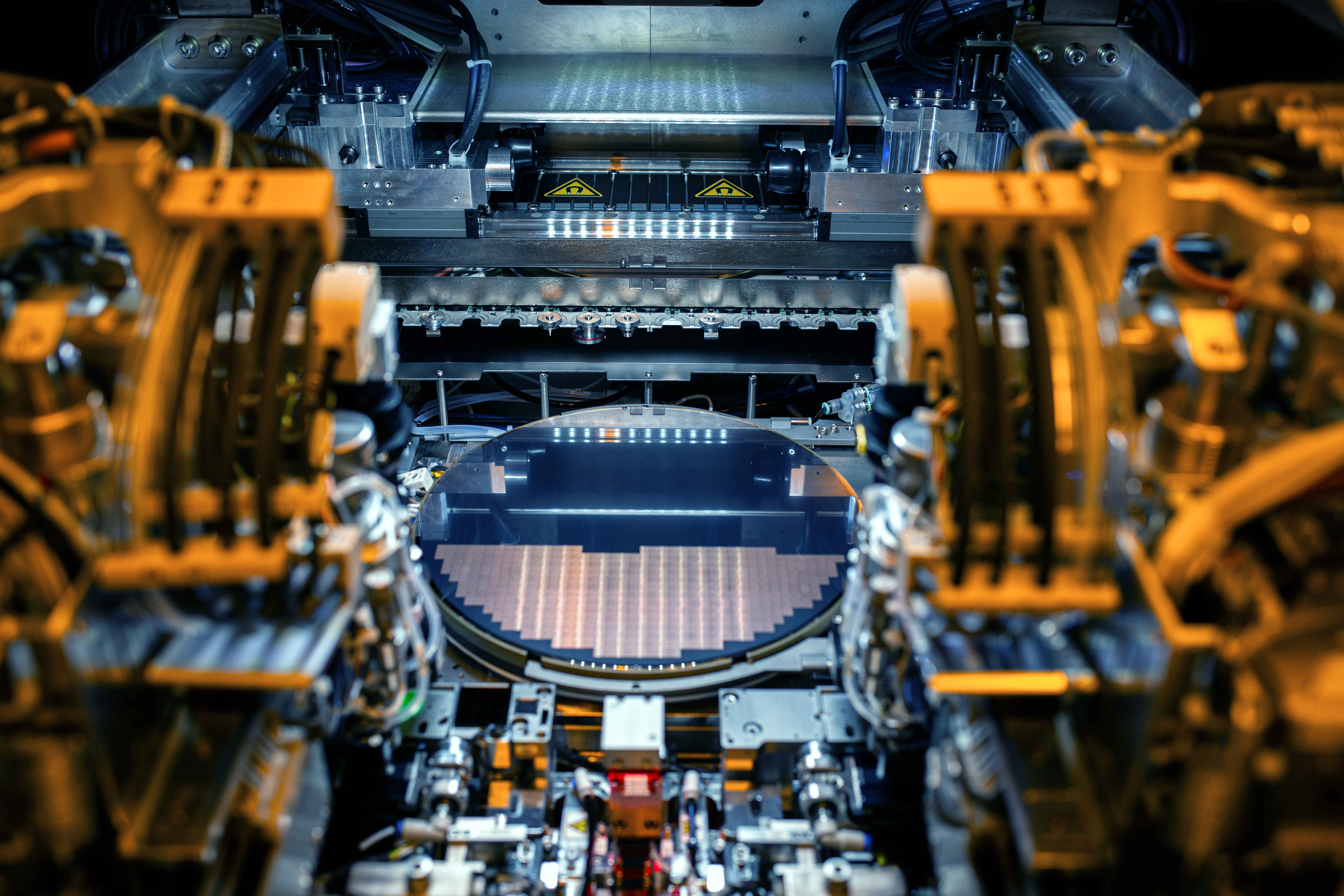

Image credit: Intel

3D NAND and DRAM behave as commodities, with prices reacting quickly to supply constraints, demand spikes, or buyer sentiment. While large PC makers lock in prices semi‑annually, a significant portion of memory is sold on the spot market.

TrendForce estimates that memory revenue grew 80% in 2024 and 46% in 2025 following the 2022-2023 downturn, and projects a 134% surge in 2026.

Foundries, by contrast, sell under long-term agreements that smooth out price fluctuations, so their revenue grows more slowly. TrendForce estimates that foundry revenue rose 19% year over year in 2024 and 25% in 2025, and expects another 25% increase in 2026.
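Compounding the yearly rates shows how far the two trajectories diverge. The Python sketch below indexes each segment's 2023 revenue to 1.0 and applies the TrendForce growth rates quoted above. It compares growth multiples only, not absolute revenue, because the 2023 baselines are not given here; the function and variable names are illustrative.

```python
import operator
from itertools import accumulate

# Year-over-year growth rates quoted in the text (TrendForce), as fractions.
memory_growth = {2024: 0.80, 2025: 0.46, 2026: 1.34}
foundry_growth = {2024: 0.19, 2025: 0.25, 2026: 0.25}


def revenue_index(growth: dict[int, float]) -> dict[int, float]:
    """Compound yearly growth rates into a revenue index where 2023 = 1.0."""
    factors = [1.0 + rate for rate in growth.values()]
    # Running product of (1 + rate): cumulative growth up to each year.
    return dict(zip(growth.keys(), accumulate(factors, operator.mul)))


memory = revenue_index(memory_growth)
foundry = revenue_index(foundry_growth)
for year in memory:
    print(f"{year}: memory {memory[year]:.2f}x, foundry {foundry[year]:.2f}x")
```

On these rates, memory revenue would end 2026 at roughly 6.1 times its 2023 level, against roughly 1.9 times for foundries.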

Consequently, boosted by the AI supercycle and free from long-term pricing constraints, memory vendors are expected to earn about $551 billion this year, more than twice as much as contract chipmakers.

The biggest question

HBM4 memory devices use four times more silicon than typical DRAM ICs, which strains memory makers' limited wafer capacity and has prompted price increases. The key question is how much of the current rise in 3D NAND and DRAM prices reflects genuine supply shortages and how much reflects typical commodity behavior, in which customers buy more as prices rise in anticipation of further increases, a dynamic that could push prices even higher.
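One way to see why silicon-hungry HBM tightens supply is a toy wafer-allocation model. The Python sketch below assumes, as a hypothetical simplification, that the four-to-one silicon ratio applies per bit and that a fixed pool of DRAM wafers is split between HBM and conventional DRAM. The `relative_bit_output` function and the wafer shares are illustrative, not TrendForce data.

```python
def relative_bit_output(hbm_wafer_share: float, hbm_silicon_ratio: float = 4.0) -> float:
    """Total bit output relative to a pool making only conventional DRAM.

    Hypothetical model: a conventional DRAM wafer yields 1 unit of bits,
    while an HBM wafer yields 1 / hbm_silicon_ratio units.
    """
    if not 0.0 <= hbm_wafer_share <= 1.0:
        raise ValueError("hbm_wafer_share must be between 0 and 1")
    conventional_bits = 1.0 - hbm_wafer_share
    hbm_bits = hbm_wafer_share / hbm_silicon_ratio
    return conventional_bits + hbm_bits


# Illustrative wafer shares, not industry figures.
for share in (0.0, 0.1, 0.2, 0.3):
    print(f"HBM wafer share {share:.0%}: {relative_bit_output(share):.3f} of baseline bits")
```

Under these assumptions, moving 20% of wafers to HBM cuts total bit output to 85% of the baseline: each shifted wafer removes a full unit of conventional bits but adds back only a quarter unit of HBM bits.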