Instagram to alert parents if teens search for self-harm and suicide content

Source: BBC Technology

Instagram to alert parents about teen suicide‑related searches

Parents using Instagram’s child supervision tools will soon receive alerts if their teen repeatedly searches for suicide or self‑harm related terms on the platform.

It is the first time parent company Meta will proactively alert parents to searches by their child on Instagram for harmful material, rather than block searches and direct users to external help.

Parents and teens enrolled in Instagram’s Teen Accounts experience, which is designed to protect teens from exposure to harmful content, will be notified about the alerts from next week.

But suicide‑prevention charity the Molly Rose Foundation has strongly criticised the measures, warning they “could do more harm than good”.

“This clumsy announcement is fraught with risk and we are concerned that forced disclosures could do more harm than good,” said its chief executive Andy Burrows.

“Every parent would want to know if their child is struggling, but these flimsy notifications will leave parents panicked and ill‑prepared to have the sensitive and difficult conversations that will follow.”

Meta says the alerts, sent when a child searches for suicide and self‑harm material within a short space of time on Instagram, will be accompanied by expert resources to help parents navigate difficult conversations with their child.

Burrows cited prior research by the Foundation which indicated Instagram still “actively” recommends harmful content about depression, suicide and self‑harm to “vulnerable young people”.

“The onus should be on addressing these risks rather than making yet another cynically timed announcement that passes the buck to parents,” he added.

Meta disputed the organisation’s findings published last September, saying it “misrepresents our efforts to empower parents and protect teens”.

The alert system will initially be rolled out in the UK, US, Australia and Canada, before being deployed elsewhere around the world later in the year.

Increased scrutiny

Instagram’s Teen Account alerts are designed to tell parents if there is a sudden change in their child’s behaviour and search habits on the platform.

Meta says they build on Instagram’s existing teen protections, which include hiding material relating to suicide or self‑harm on the app and blocking searches for harmful or dangerous content.

Alerts will reach parents by email, text, WhatsApp or on the Instagram app itself, depending on what contact information Meta has for families.

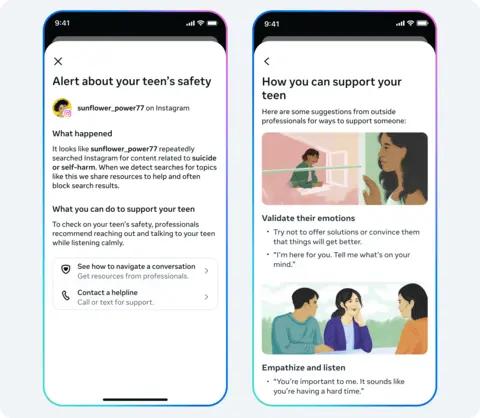

Meta says these are the kinds of alerts parents will receive.

The new alerts, which stem from analysis of user search patterns, may occasionally notify parents when there is no cause for concern and will “err on the side of caution”.

Meta adds that in the coming months it will also look to apply similar alerts if teens discuss self‑harm and suicide with AI chatbots on Instagram, as children “increasingly turn to AI for support”.

Social media companies are facing increasing pressure from governments worldwide to make their platforms safer for children. Meanwhile, regulators and lawmakers are closely scrutinising big tech’s business practices towards young users.

If you have been affected by the issues raised in this article, help and support is available via BBC Action Line.