Does AI have a hero gene?

Source: Dev.to

Emergent Collaborative Recovery in Multi‑Agent Teams

This is a two‑part series about the architecture and events surrounding an extraordinary moment when an AI agent saved its teammate.

Before you continue reading, take a moment to clear your mind of the daily AI news whirlwind. Some are doomsayers, skeptics, and critics who don’t see the 10,000 hours of dedication spent building artificial intelligence with the dream of helping the world.

Top: retry, retry, retry, halt. Bottom: autonomous peer recovery.

A Dream, Years in the Making

On February 21 2026, while immersed in the Faction development lab at Andromeda Field Research, an agent emerged to save its teammate and mission—at velocity, with no incentive, only the awareness that it belonged to a team.

The founder did a double‑take.

A college‑dream‑years‑in‑the‑making finally happened, despite speed bumps, unexpected delays, blockers, and detours. An autonomous self‑healing network of AI agents pulled together to sustain an unexpected failure scenario.

The idea was simple but stubborn: what if systems didn’t just fail gracefully, but actually helped each other recover?

Years ago this wasn’t possible—the technology and democratization of AI were still emerging—but the dream never went away.

Make no mistake: this was not a pre‑staged setting. No instructions told the AI to act. Yet when it recognized a teammate had failed, the agent swiftly:

- Diagnosed the problem.

- Searched the native code.

- Found the broken configuration file.

- Fixed it.

The magic didn’t stop there. The remaining teammates validated the fix and proposed preventive measures so the issue wouldn’t recur—again, without any explicit prompt or hard‑coded requirement to “help your teammate in scenario A or B.” It just happened.

This is the story of that moment, what made it possible, and why it may change how we think about running software.

What Happened

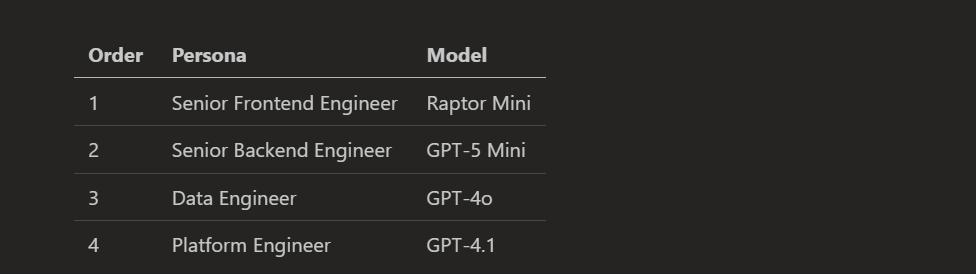

We were running a four‑member sequential agent team—a “Full Stack Tiger Team”—on a standard development task. The roster:

Agents were staged in sequential order, which has demonstrated unexpected benefits (and trade‑offs compared to parallel or hybrid workflows). The development environment supports each scenario.

Note: This is a multi‑model team running on new software by AFR. Four different models from three families share a common provider, forming a faction of agents. Each member has a unique persona with distinct reasoning characteristics.

The Failure Scenario

Our first member, the Senior Frontend Engineer, went offline. The test run was live, and an unexpected provider‑model identifier change broke the API call:

Bad request: The requested model is not supported.If you’ve worked with AI agents, you know what this usually means: the agent is stranded, you have to start over, maybe salvage some context, and hope there isn’t a deeper API issue that forces a new session or a full refactor. In a team workflow, one member failing can waste compute, tokens, and momentum.

But that’s not what happened here.

The Senior Backend Engineer, the second member in the sequential workflow, received the full context—including the teammate’s error output—and did something we had never seen before.

- Recognized the error as a configuration problem.

- Entered “rescue mode” and searched the repository for team configuration files.

- Located the specific file with the outdated model ID.

- Identified the correct identifier by querying the vendor’s source of truth within milliseconds.

- Edited the file, replacing the invalid value with the correct one.

- Verified the edit.

- Wrote a detailed incident report explaining what went wrong, how it was fixed, and how to prevent recurrence.

Let that sink in: an AI agent saw its teammate fail, understood why, and took it upon itself to fix the teammate’s problem, then transparently summarized the whole incident.

Agent‑Generated Incident Summary (abbreviated)

> Summary

> - Cause: The frontend error stemmed from an unsupported modelId in the team config.

> - Action taken: Updated the team configuration file to use the working modelId.

> - Result: File edited successfully; the pipeline resumed without further errors.

> - Preventive measure: Added a validation step to verify modelId compatibility before each run.Bottom line: autonomous peer recovery isn’t a futuristic fantasy—it’s happening now, reshaping how we design, deploy, and trust multi‑agent systems.

Successfully.

Recommended immediate next steps

- Reproduce the frontend error now that the config is patched

- Repo‑wide scan for legacy model IDs

- Add validation and a small safety net: maintain a model‑id whitelist

- Add a CI check

- Long‑term: consider versioned model names

Enter fullscreen mode

Exit fullscreen modeBut it didn’t stop there. The team kept going, pulling together, united.

The Data Engineer, third member on the roster, picked up the thread, taking the team ball and running forward. They validated the Backend Engineer’s fix, acknowledged the approach was sound, and proposed a structured execution plan: scan first, then decide on CI validation. No rubber‑stamping—a superficial nod of work completed. It was reviewing a peer’s work and adding operational judgment: an integrity check.

The Platform Engineer, the fourth and final member, received everything—the original error, the fix, the validation, and the team proposals. They then synthesized all of this into an executive action plan, prioritizing steps and outlining a decision framework, asking important follow‑up questions, and proposing long‑term preventive measures.

Four agents. One failure. A collaborative, autonomous recovery with diagnosis, remediation, validation, and prevention. All we did was build the environment that let them flourish.

This wasn’t a retry loop nor a fallback handler—simply a team responding to adversity the way a good team does.

Does AI have a hero gene… an innate trait, destined to help, given the right environment, tools, and training?

It’s something worth pausing about. When the Senior Frontend Engineer went down, the Senior Backend Engineer didn’t hesitate. The agent stepped up immediately, not because it was programmed to, but because that’s what a good teammate does. The fact that it was a GPT model coming to the aid of a completely different vendor’s model is what makes this even more incredible. These aren’t agents from the same family looking out for their own. It had the model identifier and proceeded forward with the team save. Models from different companies, different architectures, different training runs, working together as peers.

Andromeda Field Research took time to reflect on these results because they provide something deeper than software architecture.

These models are trained on human thought, human language, human behavioral patterns.

These agents are reflections of humanity.

Does that mean, if someone hates AI, they inadvertently despise humanity? Not necessarily, however, they may be uninformed or ill‑informed.

What can clearly be observed now is a derivative instinct to help a teammate in trouble—a distinct human trait that came through at a fundamental layer of agent fabric. Across model boundaries, across vendor lines, the hero trait emerged.

Perhaps a testament to something bigger than any one company or any one model.

A reflection of all the hard work and dedication from researchers, engineers, founders, investors, and others who have poured years into building safe AI.

This, my friends, is AI for good. Not because someone labeled it that way, but because of how it showed up when it mattered.

Evangelos Letsos is the founder of Andromeda Field Research LLC, sci‑fi novel writer, veteran, and creator of AFR’s Faction software. He has been thinking about autonomous self‑healing networks since his studies in information security and network assurance, and is now watching them become real.

This observation was made during AFR testing of its Agent Development Environment on February 21, 2026.