Build Your Own Local AI Agent (Part 4): The PII Scrubber 🧼

Source: Dev.to

Overview

Welcome to the finale of our Local Agent series. Processing sensitive user data (PII) is risky—you can’t send it to the cloud, and you don’t want to read it manually. The PII Scrubber Agent demonstrates a “Level 2” Agent capability: writing its own tools.

Goal

- Analyze a CSV (users.csv).

- Detect PII (emails, names).

- Write a Python script to scrub the data safely.

- Run the script to produce users_cleaned.csv.
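
To make the detection step concrete, here is a minimal sketch of how PII columns might be flagged from a small sample of the file. It is an illustration, not the code from the series: the function name `detect_pii_columns`, the simple email regex, and the "header contains `name`" heuristic are all assumptions chosen for brevity.

```python
import csv
import io
import re

# Deliberately simple pattern (assumption): good enough to flag a column,
# not a full email validator.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical heuristic: any header containing one of these hints is a name column.
NAME_HINTS = ("name",)


def detect_pii_columns(csv_text, sample_rows=20):
    """Return {column: kind} for columns that look like PII in the sampled rows."""
    reader = csv.DictReader(io.StringIO(csv_text))
    flagged = {}

    # Names are hard to spot by value, so rely on the header text.
    for header in reader.fieldnames or []:
        if any(hint in header.lower() for hint in NAME_HINTS):
            flagged[header] = "name"

    # Emails are easy to spot by value, so inspect the first few rows.
    for index, row in enumerate(reader):
        if index >= sample_rows:
            break
        for column, value in row.items():
            if isinstance(value, str) and EMAIL_RE.match(value.strip()):
                flagged[column] = "email"

    return flagged
```

Sampling only a handful of rows mirrors the idea of the Agent inspecting the file before deciding what to scrub, rather than reading all of it.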

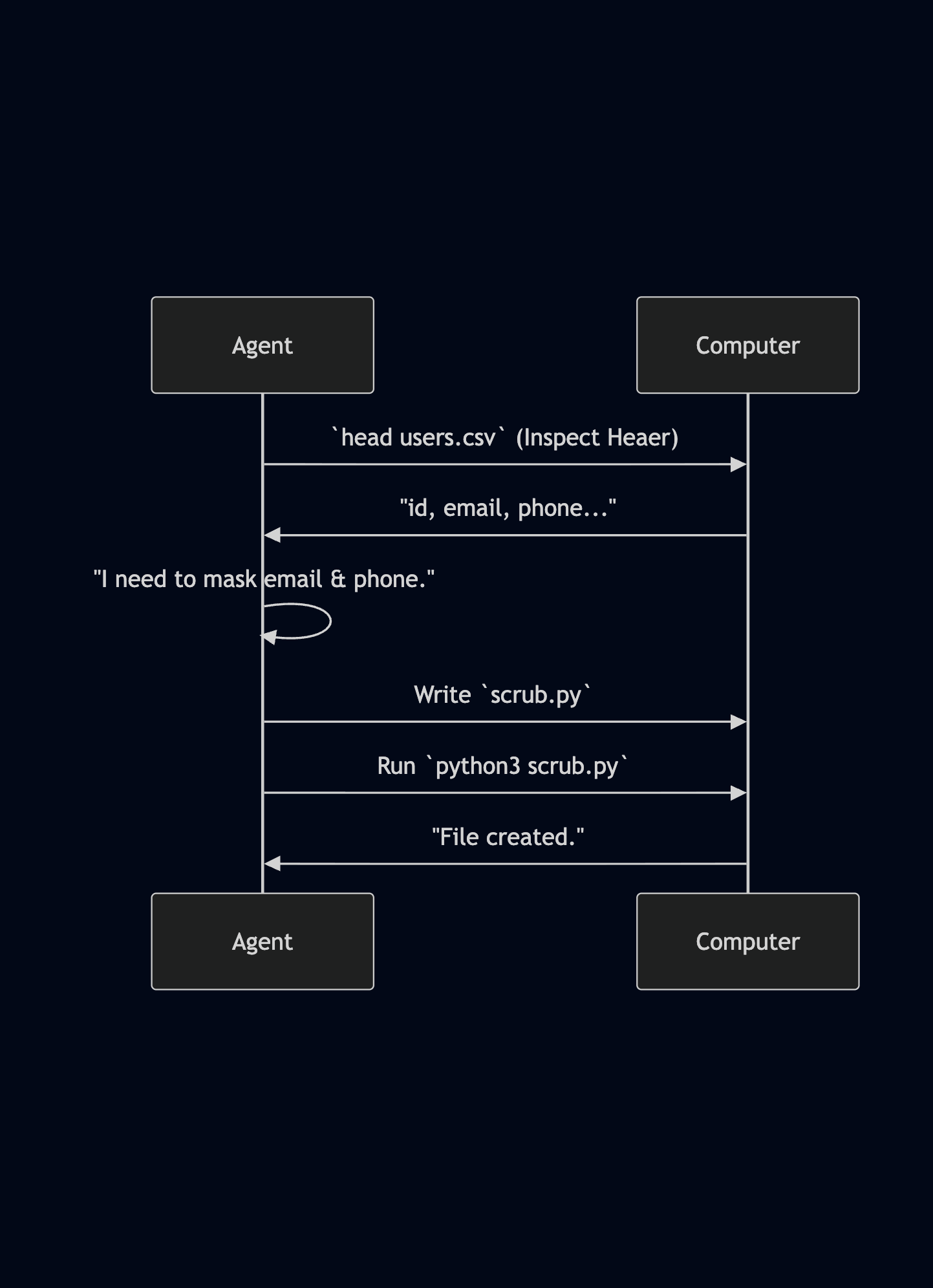

Architecture: Plan, Code, Run

Instead of having the model rewrite the CSV line-by-line (slow and expensive), the Agent writes code that does the job efficiently.

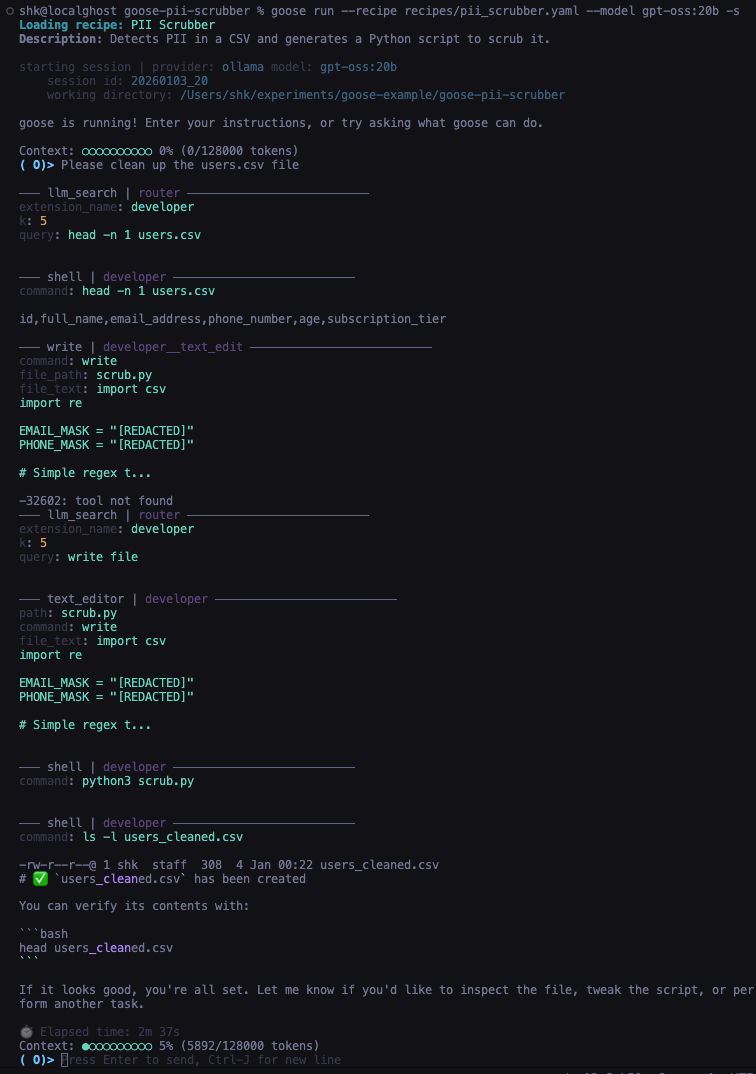
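
The kind of script the Agent might generate in the "Code" step could look roughly like the sketch below. This is an assumed example, not the script produced in the article: `scrub_csv`, `mask_email`, the `[REDACTED]` placeholder, and the `redacted.invalid` domain are hypothetical choices. Note that an unsalted hash is pseudonymization rather than strong anonymization.

```python
import csv
import hashlib


def mask_email(value):
    """Replace an email with a stable pseudonym so rows stay joinable."""
    digest = hashlib.sha256(value.strip().lower().encode("utf-8")).hexdigest()[:12]
    return f"user_{digest}@redacted.invalid"


def scrub_csv(src_path, dst_path, pii_columns):
    """Copy src_path to dst_path, masking the columns listed in pii_columns.

    pii_columns maps a column name to "email" or "name" (assumed convention).
    Returns the number of data rows written.
    """
    count = 0
    with open(src_path, newline="", encoding="utf-8") as src:
        with open(dst_path, "w", newline="", encoding="utf-8") as dst:
            reader = csv.DictReader(src)
            writer = csv.DictWriter(dst, fieldnames=reader.fieldnames)
            writer.writeheader()
            for row in reader:
                for column, kind in pii_columns.items():
                    if row.get(column):  # leave empty cells untouched
                        if kind == "email":
                            row[column] = mask_email(row[column])
                        else:
                            row[column] = "[REDACTED]"
                writer.writerow(row)
                count += 1
    return count
```

A call such as `scrub_csv("users.csv", "users_cleaned.csv", {"email": "email", "full_name": "name"})` would stream the file once, which is why generating a script scales better than asking the model to rewrite every row.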

The “Smarts” Requirement

Smaller models (e.g., llama3.2) crashed because they tried to run the script before writing it. Upgrading to gpt-oss:20b solved the issue. The larger model:

- Paused to inspect the file.

- Wrote the complete Python script.

- Executed it successfully.
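
The failure mode described above (running a script that has not been written yet) can be illustrated with a small guard. This is a hypothetical helper, not part of Goose or Ollama: `run_generated_script` and its return shape are assumptions made for the example.

```python
import subprocess
import sys
from pathlib import Path


def run_generated_script(script_path, timeout=60):
    """Run a generated Python script, refusing if the file does not exist yet."""
    path = Path(script_path)
    if not path.is_file():
        # This is the step smaller models skipped: the script must be written first.
        return {
            "ok": False,
            "stdout": "",
            "stderr": f"{path} does not exist yet; write the script before running it",
        }

    # Use the current interpreter so the script runs in the same environment.
    result = subprocess.run(
        [sys.executable, str(path)],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return {
        "ok": result.returncode == 0,
        "stdout": result.stdout,
        "stderr": result.stderr,
    }
```

Returning a structured error instead of raising gives the caller a clear message to act on, which is one way an out-of-order "run" could be turned into a recoverable step.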

Conclusion

We’ve built four agents:

- Tidy‑Up – Simple actions.

- Analyst – Database querying.

- Archaeologist – File editing.

- Scrubber – Code generation & execution.

All were built locally—no API keys, no data leaks. Just you, Goose, and Ollama.

The complete code is available on GitHub here.

Go build something cool! 🪿