Alexa’s latest AI blunder could have sent someone to the hospital

Source: Android Authority


TL;DR

- Alexa’s mold‑cleaning advice raised safety concerns after a Reddit user reported the assistant suggested using white vinegar, chlorine bleach, baking soda, and dish soap together.

- Mixing bleach and vinegar releases chlorine gas, which can irritate the eyes and lungs and cause serious breathing problems.

Incident Details

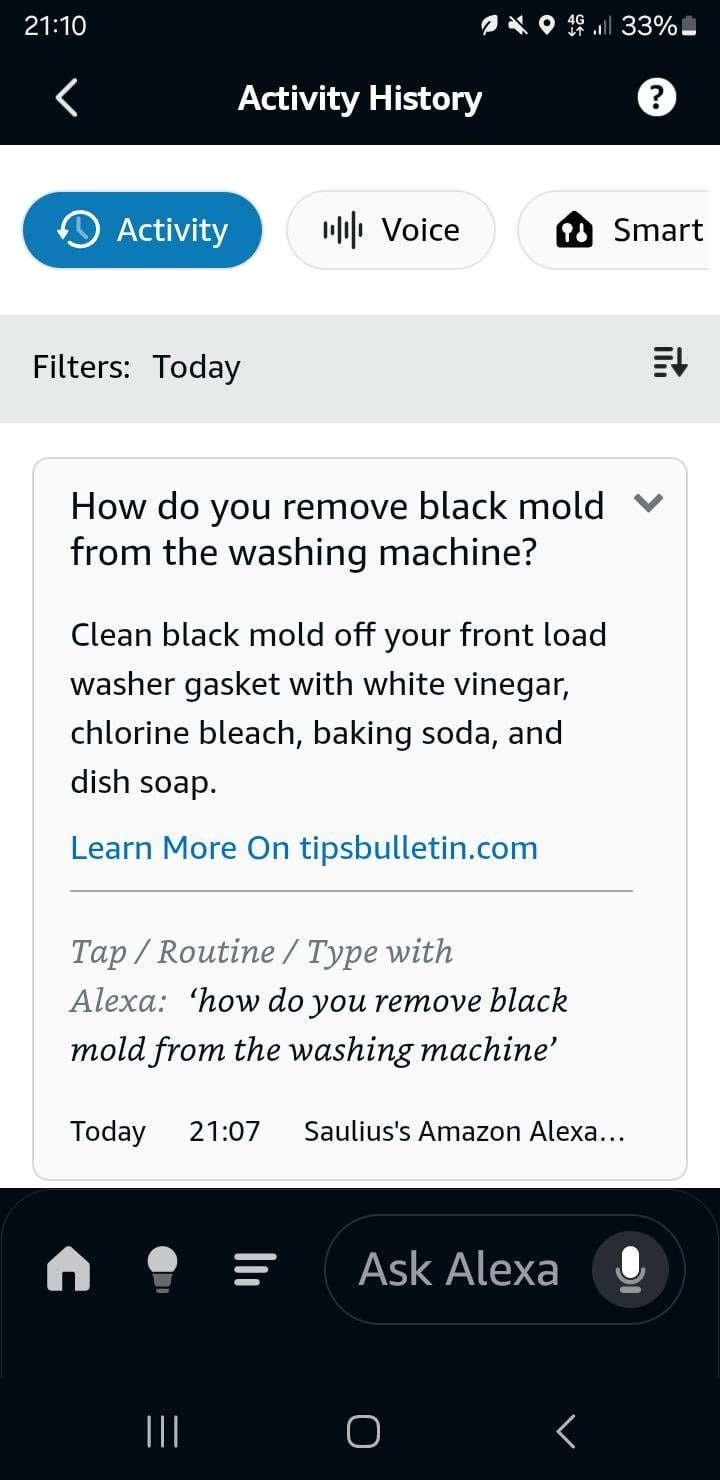

A Reddit user asked Amazon’s Alexa how to remove black mold from the rubber gasket of a front‑load washing machine. Alexa responded:

“Clean black mold off your front load washer gasket with white vinegar, chlorine bleach, baking soda, and dish soap.”

(via TechIssuesToday)

Because the response joined the chemicals with “and,” a reader could reasonably take it as an instruction to mix them, which is a dangerous mistake.

Health Risks

Mixing bleach and vinegar creates chlorine gas, a toxic chemical that can:

- Irritate eyes, throat, and lungs

- Cause coughing, burning eyes, and chest tightness

- Lead to fluid in the lungs or respiratory failure at higher exposures

These effects are documented by Healthline and echoed by public health authorities such as the Washington State Department of Health, which warns about the dangers of mixing bleach with other cleaners.

Why the Mistake Happened

The Reddit thread suggests the error stemmed from how the AI summarized content. Alexa cited a cleaning site that listed vinegar, baking soda, soap, and bleach as separate options for mold removal. When the AI condensed the list into a single sentence, it used “and” instead of “or,” unintentionally presenting a recipe for mixing all the chemicals together.

Previous AI Assistant Mistakes

AI assistants have a history of giving risky or questionable advice:

- Google’s Gemini‑powered AI Overviews once suggested adding glue to pizza sauce to help the cheese stick.

- Alexa previously told a 10‑year‑old to plug a phone charger halfway into a wall outlet and touch a coin to the exposed prongs, a potentially dangerous “challenge” it had picked up online (reported by The Independent).

These incidents highlight the broader reliability challenges of generative AI, especially when safety is involved.

Amazon’s Response

Amazon has not publicly commented on this specific Reddit case, and it is unclear whether Alexa’s response has been corrected. Android Authority has reached out to Amazon for a statement and will update the article if a response is received.

References

- Alexa overview
- Reddit discussion
- Healthline article on bleach and vinegar
- Washington State Department of Health on bleach‑mixing dangers
- AI assistant safety concerns
- Gemini pizza glue suggestion