Why I Built a Personal AI Assistant and Kept It Small

Source: Dev.to

Overview

I like the idea of a personal AI assistant, but most of them feel heavy. When you try to use them, there’s often too much going on: huge system prompts, excessive token overhead, frameworks that are hard to trust, and many layers between what you ask for and what actually happens.

That is why I built Atombot – a small personal AI assistant inspired by OpenClaw and nanobot. I wasn’t trying to build a complex agent platform; I wanted something simpler, something I could understand, change, and actually use.

Privacy also matters. A personal assistant handles my personal data, and I don’t want that data to leave my machine, so local LLM support was important to me. Local models can run the heavier assistant frameworks, but they often struggle with the large contexts those frameworks produce: system prompts, instructions, tool definitions, and extra logic all add overhead to every request. That overhead is tolerable with API‑based LLMs, but it is a much worse fit for local models. I wanted something lighter so local setups would feel realistic instead of frustrating.
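To make the overhead argument concrete, here is a rough back-of-the-envelope sketch in Python. The `PromptBudget` class and all token counts are hypothetical numbers picked for illustration; they are not measurements of Atombot or of any particular framework.

```python
from dataclasses import dataclass


@dataclass
class PromptBudget:
    """Fixed per-request token costs of an assistant (hypothetical values)."""
    system_prompt: int
    tool_definitions: int
    memory: int

    def overhead(self) -> int:
        # Tokens spent before the user has typed anything.
        return self.system_prompt + self.tool_definitions + self.memory

    def remaining(self, context_window: int) -> int:
        # Tokens left for the conversation and the model's reply.
        return max(context_window - self.overhead(), 0)

    def overhead_ratio(self, context_window: int) -> float:
        return self.overhead() / context_window


# Assumed budgets: a "heavy" framework versus a "light" assistant.
heavy = PromptBudget(system_prompt=4000, tool_definitions=2500, memory=500)
light = PromptBudget(system_prompt=500, tool_definitions=800, memory=200)

# Assumed window sizes: a small local setup versus a large hosted model.
for window in (8_192, 128_000):
    for name, budget in (("heavy", heavy), ("light", light)):
        print(
            f"{window:>7} window, {name}: "
            f"{budget.remaining(window)} tokens left "
            f"({budget.overhead_ratio(window):.0%} overhead)"
        )
```

Under these assumed numbers, the heavy budget leaves only a small slice of an 8K window for the actual conversation, while the same overhead is a minor cost in a 128K window. That is the gap I mean between local and API‑based setups.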

Core Features

- Local LLM support with simple onboarding

- Small, understandable codebase that’s easy to modify

- Persistent memory for long‑term context

- Reminders and scheduled tasks (one‑time or recurring)

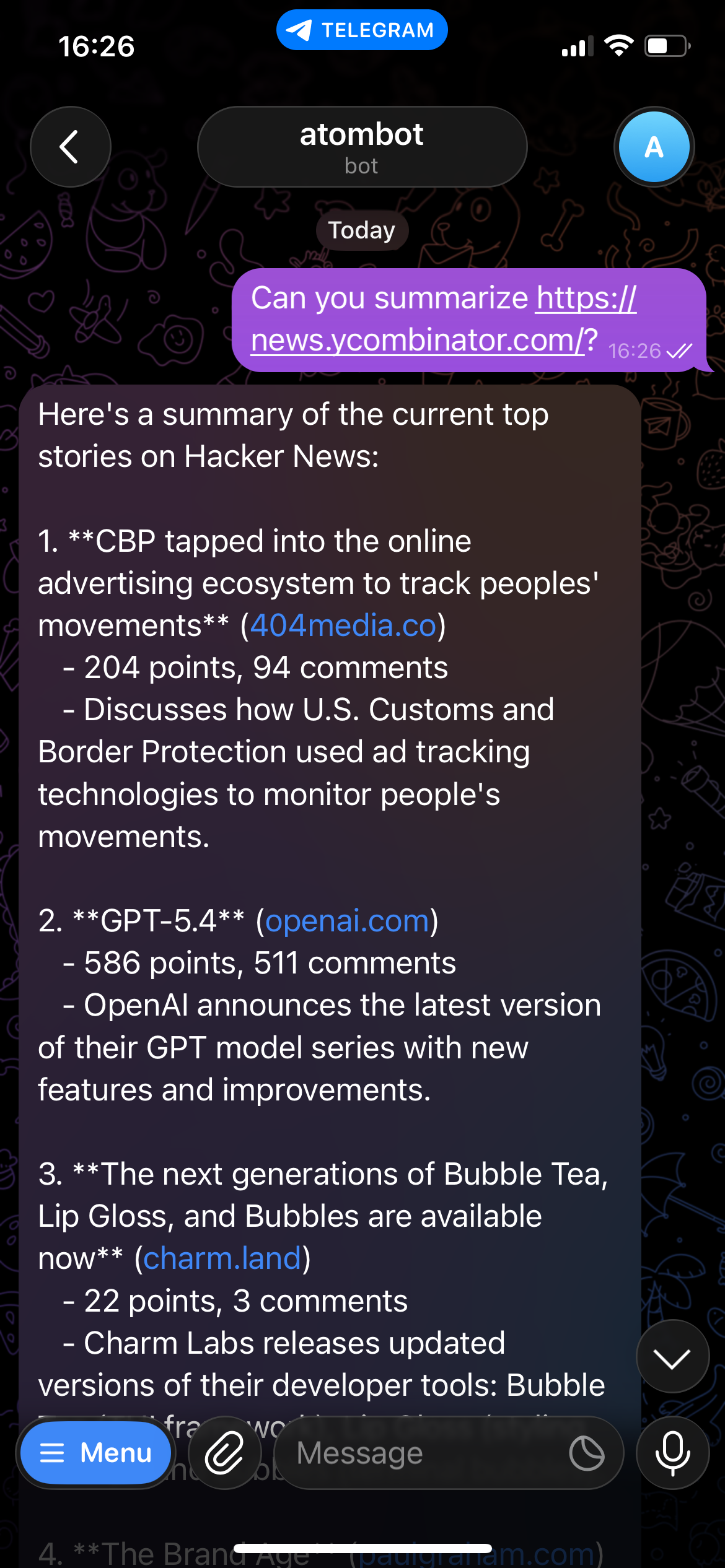

- Telegram integration so I can chat with it outside the terminal
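As an illustration of the one‑time versus recurring distinction, here is a minimal Python sketch of how such reminders could be modeled. This is not Atombot’s actual implementation; `Reminder` and `fire_due` are hypothetical names, and the real code in the repository may work differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Reminder:
    message: str
    due: datetime
    interval: Optional[timedelta] = None  # None means a one-time reminder

    def __post_init__(self) -> None:
        # A non-positive interval would never advance past "now".
        if self.interval is not None and self.interval <= timedelta(0):
            raise ValueError("interval must be positive")


def fire_due(
    reminders: list[Reminder], now: datetime
) -> tuple[list[str], list[Reminder]]:
    """Return the messages due at `now` and the reminders still pending."""
    fired: list[str] = []
    pending: list[Reminder] = []
    for r in reminders:
        if r.due > now:
            pending.append(r)  # not due yet, keep as-is
            continue
        fired.append(r.message)
        if r.interval is not None:
            # Recurring: move to the first occurrence strictly after `now`.
            next_due = r.due
            while next_due <= now:
                next_due += r.interval
            pending.append(Reminder(r.message, next_due, r.interval))
        # One-time reminders are dropped once they have fired.
    return fired, pending
```

A caller would run `fire_due` on a timer, send each fired message (for example to a chat), and store the returned pending list for the next tick.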

Example Use Cases

- Exploring the web and summarizing content

- Managing reminders

Atombot can explore websites, summarize what it finds, and handle one‑time or recurring reminders. These are just two examples; you can find more in the repository.

Getting Started

If you want to try it, check out the project on GitHub:

https://github.com/daegwang/atombot

Note: This post was originally published on my site – link.