Why fake AI videos of UK urban decline are taking over social media

Source: BBC Technology

Overview

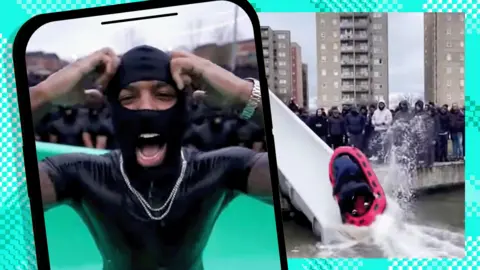

An AI‑generated video shows a crowd of young – mostly Black – men, wearing balaclavas and padded jackets, slipping down a water slide into a dirty swimming pool littered with debris. The caption describes the scene as a taxpayer‑funded water park in Croydon.

It is one of a wave of deepfakes that depict absurd scenes of urban decline, often set in the same south‑London neighbourhood. Dozens of copy‑cat accounts have begun producing similar content, collectively racking up millions of views on TikTok and Instagram Reels.

These fake videos are part of a broader trend in which online influencers portray Western cities—London, Manchester, San Francisco, New York—as overrun by immigrants and crime. The phenomenon has been dubbed “decline porn.” While some of the material is clearly satirical, the narratives are frequently exaggerated or fabricated, fuelling anger and racist backlash among viewers who take them at face value.

The BBC tracked down the originator of the Croydon AI videos for the new podcast Top Comment, which investigates the stories behind our social‑media feeds. What we found was a new brand of online faker who thrives on engagement and shrugs off responsibility for how the content can be used to push divisive political narratives.

The creator: RadialB

The videos are posted by an account that goes by RadialB. In a phone interview, he:

- Refused to give his real name, but said he is in his 20s and from the north‑west of England.

- Said he has never been to Croydon.

- Described the AI‑generated water park, zoo and aquarium as “just part of the progression of things getting more and more funny or absurd.”

- Acknowledged that several videos “blew up” because they were graphic, showing people flying off slides.

“If people saw it and they immediately knew it was fake, then they would just scroll. The selling point of generative‑AI models is that they look real,” RadialB told me.

He explains that the “roadmen” (a slang term for urban youth often associated with drug dealing) featured in his clips are “cultural archetypes” he uses for comedic effect. One post portraying roadmen in Parliament reportedly earned eight million views in a day.

When asked about the racist comments his videos sometimes provoke, he replied:

“I don’t deny it, but comments get filtered.”

He notes that TikTok, Instagram and X have policies under which racist abuse is removed. RadialB says he does not intend to target any specific race or ethnicity; he simply uses the prompt “roadmen wearing puffer jackets, tracksuits and balaclavas” because, in his view, that creates the “funniest” characters.

Racism, politics and the “AI‑generated” label

Although RadialB claims no political agenda, many of his videos depict absurd “taxpayer‑funded” facilities and include quips such as:

“English politics is a bit of a parasitic cesspit”

“We should replace them all with roadmen”

Some clips carry small on‑screen labels stating they are “AI‑generated” or contain “synthetic media,” in line with platform policies. Nevertheless, several commenters told us they were genuinely convinced the posts were real.

RadialB admits his videos provoke political reactions:

“I could put stuff up and there would be 50‑year‑olds and 60‑year‑olds in the comments raging and saying all this political stuff.”

He adds that many of those comments are likely ironic.

Community response

The wave of AI‑generated “slop” videos has drawn criticism for reinforcing unfair racial stereotypes of Croydon. One Black TikTok user from the area, C.Tino, posted a response:

“These videos are making people think this is real life. It’s becoming out of hand now.”

He argued that the trend falsely portrays Croydon as a “ghetto.”

BBC’s investigation

The BBC’s Top Comment podcast explores the origins of these deepfakes, the motivations of creators like RadialB, and the broader impact of synthetic media on public discourse.

Distort reality

RadialB says he was able to start making this content because of the “huge jump” in the quality and availability of AI tools. It “hugely lowers the barrier for entry” for anyone who wants to make “fake stuff,” he says.

He notes that many of the accounts resharing his posts are likely doing it for views and clicks – trying to monetise the content on platforms such as Facebook. Users as far away as Israel and Brazil said they shared the videos because they “got engagement” or wanted to “join in on the trend.”

Several Arabic‑language accounts based in the Middle East have also shared multiple videos about London’s decline, including footage of Croydon.

The BBC also found a number of TikTok profiles that present themselves as British news outlets but share only AI‑generated videos about London or other negative content about cities in the UK and the US.

The deepfakes fit into an existing trend of videos portraying European and American cities as falling into urban decay because of crime and immigration. Sometimes they show real examples of phone‑snatching, homelessness, graffiti, or drug problems, but they omit any wider context. Increasingly, however, they use AI to distort reality.

The Kurt Caz case

South African YouTuber Kurt Caz has built an audience of more than four million subscribers by posting travel videos with sensational titles such as “Attacked by thieves in Barcelona!” and “Threatened in the UK’s worst town!”

After posting a recent video called “Avoid this place in London,” he was accused of using AI to doctor the thumbnail in order to bolster his portrayal of the UK capital as “one of the most messed‑up cities” he has ever visited.

Image caption: Arabic text was added to shop signs and a balaclava placed on a cyclist in this YouTube thumbnail.

In the video itself, the signs on Croydon’s North End are in English, the cyclist has no balaclava, and Caz is giving him a thumbs‑up after a friendly chat.

On X, Kurt Caz dismissed criticism of the thumbnail as “clickbait,” saying: “If you’re going to do a hit piece on me, do it properly.”

High‑profile amplification

These ideas of UK and European decline have also been taken up by high‑profile, influential figures, including Elon Musk (owner of X, Tesla, and SpaceX), who spoke at far‑right activist Tommy Robinson’s Unite the Kingdom rally last year.

“What I see happening is a destruction of Britain. Initially a slow erosion, but a rapidly increasing erosion of Britain with massive uncontrolled migration,” Musk said.

He regularly posts about the topic on his X profile, which has more than 230 million followers.

Image caption: Elon Musk has promoted ideas of British decline.

While there are legitimate debates to be had about immigration and crime, a lot of this content goes beyond what the available evidence supports.

In January, pollster YouGov released data suggesting a majority of Britons now believe London is unsafe. However, only a third of respondents in the capital agreed, and 81% said their own local area was safe.

RadialB’s stance

RadialB says his intention was not to become a “decline porn” influencer; instead, he claims he just wants to make people laugh with an “artform” that games recommendation systems. He appears to wash his hands of responsibility for how his content may be used or copied.

His TikTok account was banned for sharing content detected as graphic or inappropriate, but he has since set up a new account sharing the same kinds of videos—showing “roadmen” at grubby “infinity pools” and “taxpayer‑funded buffets.”