The Untold Issues with the AI Job-Takeover Theory (Chapter 2)

Source: Dev.to

TL;DR:

The fact that AI will improve does not automatically mean it will replace most workers. Progress matters, but so do limits, timelines, economics, and diminishing returns.

The Theory

There’s an ongoing theory that AI will take most jobs—and definitely all software‑development jobs. I think this is highly exaggerated for a few reasons. In this post I want to look at how AI evolves, where it might take us, and what that means.

The Worst AI You’ll Ever Use

“Today’s AI is the worst AI you’ll ever use.”

If you’ve been interacting with LLMs over the past year, this statement feels obvious. It’s true of almost any piece of technology, not just computer science. We have the worst cars, planes, boats, TVs, medicines, and gym equipment we’ll ever own (maybe not zippers—those have been roughly the same since invention, crazy, I know).

But none of these things have the capability of taking our jobs, you might argue. The point is that simply saying something is getting better doesn’t tell us anything about where it will take us or how long it will take to get there.

AGI? ASI? WTFAI?

There’s a logical leap embedded in the “AI is getting better → it will replace everyone” statement. The real question is:

How far can it go?

AGI vs. ASI

- AGI (Artificial General Intelligence) – a system that can perform any intellectual task a human can.

- ASI (Artificial Super‑Intelligence) – an intelligence that surpasses the best human minds across all domains.

On X (Twitter) you’ll see claims like “we’re reaching AGI 5 times a day.” The real question isn’t when, but if we’ll ever get there.

The first big issue: defining AGI

- There’s no universally accepted metric for human intelligence.

- IQ measures specific cognitive abilities, not “intelligence” per se.

- Animal intelligence is usually compared to human abilities at a given age—highly subjective.

Because of this, there’s no cinematic moment where Sam Altman looks at a screen and declares, “AGI is born!”

Even if we define AGI as roughly matching the average human's cognitive abilities, we must ask: can we actually build it? No one knows whether LLMs or current machine-learning paradigms can achieve that.

Exercise: Think about everyone you know and how smart (or not) they are. By that definition, an AGI would be dumber than half the people you know at many tasks—hardly impressive.

It All Happened So Fast… Too Fast!

A lot of conjecture surrounds where we’re heading. No one knows for sure, but one thing is certain: it feels like AI is moving at break‑neck speed.

Recent breakthroughs

- The past 5 years (and especially the last year) have produced very impressive improvements.

- Models seem to get better by the minute—most of the time.

A “younger” appearance

Just as a celebrity’s facelift makes them look younger than they are, AI looks younger than its true origins.

- Early roots: Alan Turing’s Imitation Game (1950).

- First chatbot: ELIZA (built between 1964 and 1966).

So why now?

Why the recent acceleration?

- Attention Is All You Need (Vaswani et al., 2017) introduced the Transformer architecture, unlocking massive parallelism.

- GPUs (thanks to companies like NVIDIA) provided the hardware to exploit that parallelism.

- The combination created a perfect storm—plus a lot of eager gamers waiting for better graphics.

Money talks

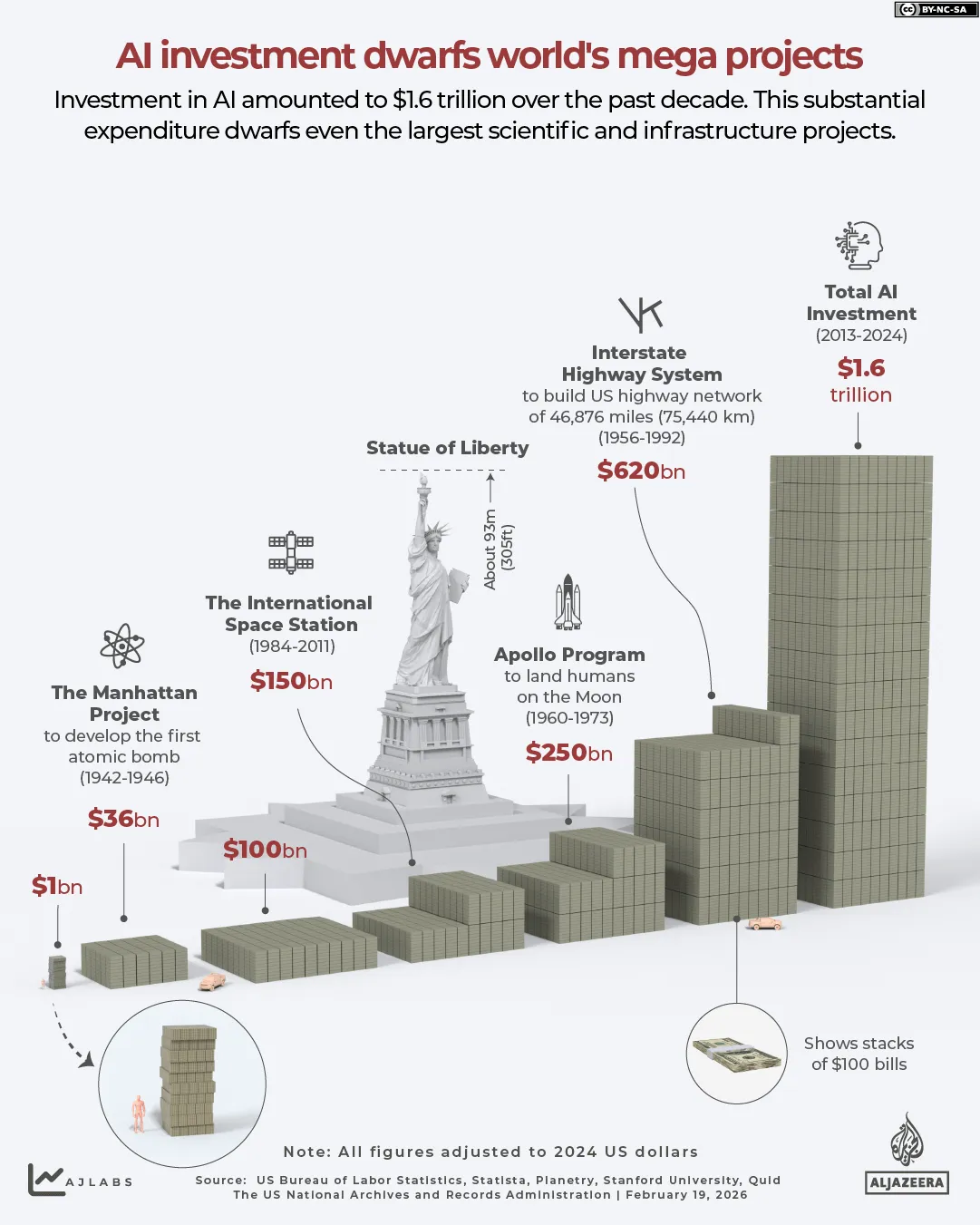

The hype attracted investors, leading to massive AI funding:

| Project | Approx. cost (share of contemporary US GDP) |
|---|---|
| Apollo program (1960s) | ~0.4 % of US GDP |
| Manhattan Project (1940s) | ~0.4 % of US GDP |
| Meta AI spend (2026) | $135 B (≈0.5 % of 2026 US GDP) |
| Overall AI industry (2026) | Dwarfs historical mega-projects |

Diminishing returns

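Economics teaches us about the law of diminishing returns: past a certain point, each additional unit of investment yields a smaller gain than the one before. It's loosely akin to the Pareto Principle (80/20 rule), where the first 20% of the effort delivers roughly 80% of the result.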

Think about the hype around each new iPhone or TV model. Year‑to‑year improvements rarely justify the excitement.
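To make the idea concrete, here is a minimal sketch of what diminishing returns look like in numbers. The logarithmic curve, the `capability` function, and the investment units are all assumptions picked for illustration; nothing here is measured from real AI spending or benchmarks.

```python
import math


def capability(investment: float) -> float:
    """Hypothetical concave returns curve (an assumption, not real data):
    output grows with the logarithm of the input."""
    return math.log1p(investment)


def marginal_gains(levels):
    """Extra capability gained from each successive step in investment."""
    values = [capability(x) for x in levels]
    return [later - earlier for earlier, later in zip(values, values[1:])]


# Equal-sized steps of investment, in arbitrary made-up units.
levels = [0, 10, 20, 30, 40, 50]
gains = marginal_gains(levels)

for start, gain in zip(levels, gains):
    print(f"{start:>2} -> {start + 10:>2}: +{gain:.3f}")
```

Every step costs the same 10 units, yet each one buys less than the step before it. Whether AI progress follows a curve like this is exactly the open question; the sketch only shows what the claim means.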

If we were to predict that AI will continue to improve at the same pace, we would need to assume one or both of the following:

- This massive level of investment will continue or grow at the same pace.

- A major breakthrough will happen in the field.

This is not an argument that this won’t unfold; I just want to highlight the challenges ahead from a realistic perspective. It’s unlikely we’ll see AI make the same leap forward in the next 5 years that it did in the past 5 years.

State of the Art

You can’t think in “forever” terms—“it will just keep getting better.” Whenever you start to consider infinity, everything becomes possible (e.g., the infinite monkey theorem). Regarding job displacement, we need to think in decades, not centuries. What will state‑of‑the‑art AI look like in 10 years?
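To put rough numbers on the infinite monkey theorem, here is a small sketch of how likely a random typist is to hit a target text within a finite number of attempts. The 26-letter alphabet, the target lengths, and the attempt counts are assumptions chosen for illustration, and `chance_of_success` is a hypothetical helper, not a model of how AI works.

```python
import math


def chance_of_success(alphabet_size: int, target_length: int, attempts: int) -> float:
    """Probability that at least one of `attempts` independent random strings
    matches a specific target of `target_length` characters."""
    p_single = (1 / alphabet_size) ** target_length
    if p_single >= 1:
        return 1.0
    # 1 - (1 - p)^n, computed with log1p/expm1 so tiny probabilities
    # are not lost to floating-point rounding.
    return -math.expm1(attempts * math.log1p(-p_single))


# A 6-letter word, one million random attempts.
short_target = chance_of_success(26, 6, 1_000_000)

# A 20-letter phrase, one trillion random attempts.
long_target = chance_of_success(26, 20, 10**12)

print(f"6 letters, 1e6 attempts:   {short_target:.6f}")
print(f"20 letters, 1e12 attempts: {long_target:.3e}")
```

With unlimited attempts the probability approaches 1, which is why "forever" makes everything possible. With any realistic budget it stays tiny, which is why the time horizon matters more than the direction of travel.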

Right now, AI is really good at pattern matching and information gathering/lookup. It's essentially a huge, smart database; it can be dangerous, and it is causing pain right now. That is worth discussing, but saying it will eliminate most jobs is extremely pessimistic (or optimistic, depending on your side).

If your job is mostly pattern recognition and information gathering, you’ve probably already been impacted. That isn’t the end of the world—similar disruptions have happened before on a large scale. But that’s a topic for another time.

AI still needs to improve a lot in other areas of cognition, such as logic, creativity, and visual/spatial reasoning, not to mention its lack of reliability, which stems from its architecture.

So, what do you think? How far can it get in your lifetime?

Keep coding. Until next time.