The Nervous System: Designing Distributed Signaling with Redis and RabbitMQ

Source: Dev.to

The Split‑Brain Signaling Crisis

In the lifecycle of every successful real‑time application, there is a specific day when the architecture breaks – usually when you deploy your second signaling server.

Day 1 – Single process

With one Python process (or one server) WebRTC signaling is trivial.

You keep a simple in‑memory dictionary mapping user_id → websocket_connection.

When User A wants to call User B, your code looks up User B in the dictionary and pushes the SDP offer down the socket. It is fast, atomic, and simple.
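A minimal sketch of that Day 1 design, assuming a websocket object that exposes an async `send_json` method (as Starlette/FastAPI sockets do). The names `connections` and `send_offer` are illustrative, not part of any library:

```python
# In-memory registry: user_id -> websocket-like object (single process only).
connections = {}

async def send_offer(to_user: str, sdp_offer: str) -> bool:
    """Push an SDP offer to a locally connected user; False if not connected here."""
    ws = connections.get(to_user)
    if ws is None:
        return False  # on a single server this really does mean "offline"
    await ws.send_json({"type": "offer", "sdp": sdp_offer})
    return True
```

The whole routing table is one dictionary lookup, which is exactly why it stops working once a second process has a dictionary of its own.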

Day 100 – Scale‑out

You add a load balancer in front of three signaling nodes to handle 50 000 concurrent connections. Suddenly the system enters a state of Split‑Brain.

- User A connects to Node 1.

- User B connects to Node 3.

When User A sends an offer to User B, Node 1 checks its local memory, sees no connection for User B, and drops the message – or worse, returns a “User Offline” error while User B is actively waiting on another server. The users are isolated in their respective process silos, unable to negotiate media.
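The failure can be reproduced in a few lines. This is a toy simulation, not real signaling code: `SignalingNode` and `RecordingSocket` are hypothetical stand-ins for a server process and a WebSocket.

```python
import asyncio

class SignalingNode:
    """One signaling process with its own private connection table."""
    def __init__(self, name: str):
        self.name = name
        self.local = {}  # user_id -> websocket-like object, visible to this node only

    async def route(self, to_user: str, payload: dict) -> str:
        ws = self.local.get(to_user)
        if ws is None:
            return "user_offline"  # wrong whenever the user is on another node
        await ws.send_json(payload)
        return "delivered"

class RecordingSocket:
    """Stand-in for a websocket that just records what it was asked to send."""
    def __init__(self):
        self.sent = []

    async def send_json(self, payload: dict) -> None:
        self.sent.append(payload)

async def demo() -> tuple:
    node1, node3 = SignalingNode("node-1"), SignalingNode("node-3")
    node1.local["user-a"] = RecordingSocket()
    node3.local["user-b"] = RecordingSocket()
    offer = {"type": "offer", "sdp": "v=0"}
    # A's offer arrives at node 1, which has never heard of B.
    via_node1 = await node1.route("user-b", offer)
    # The same offer would have worked had it reached node 3.
    via_node3 = await node3.route("user-b", offer)
    return via_node1, via_node3

if __name__ == "__main__":
    print(asyncio.run(demo()))  # ('user_offline', 'delivered')
```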

This is the fundamental distributed‑state problem in WebRTC.

Unlike standard HTTP REST APIs (stateless, backed by a shared DB), signaling is stateful and ephemeral. Writing every SDP packet to Postgres would destroy call‑setup latency. You need a nervous system – a high‑speed, distributed message bus that bridges isolated processes.

The Two Paradigms: Speed vs. Memory

When architecting this layer, engineers usually gravitate toward two dominant technologies:

| Paradigm | Technology | Philosophy |
|---|---|---|
| Ephemeral | Redis Pub/Sub | “If you aren’t listening right now, you don’t need to know.” |
| Durable | RabbitMQ | “I will hold this message until you confirm you have processed it.” |

In a production WebRTC system you often need both, applied to different classes of traffic.

Redis Pub/Sub – The Velocity Layer

Redis is a popular choice for WebRTC signaling because of one metric: latency.

- Pub/Sub model – a publisher sends a message to a channel; Redis instantly forwards it to all active subscribers.

- No storage, no queueing, no look‑back.

Internals & Performance

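PUBLISH walks the list of subscribers for the channel and writes the payload to each client's output buffer; nothing touches disk and nothing waits for an acknowledgment. Its cost is O(N+M), where N is the number of subscribers on the channel and M is the number of pattern subscriptions, so with one subscriber per channel it is effectively constant. This is why a single Redis instance can sustain very high message rates (figures in the millions per second are commonly quoted) with sub-millisecond latency.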

For WebRTC, this speed is critical during the ICE candidate exchange. A typical client may generate 10‑20 candidates in a burst; they must travel from Client A → Server → Client B immediately. Adding 50 ms of queuing latency to each candidate delays the Time‑to‑First‑Media (TTFM), leaving users staring at a black screen.

The “At‑Most‑Once” Trade‑off

The cost of this speed is an at‑most‑once delivery guarantee. If a signaling node crashes or restarts, it disconnects from Redis; any messages sent to its subscribers during that downtime are lost forever.

- For ICE candidates this is often acceptable – WebRTC is robust; lost candidates simply cause the ICE agent to try the next pair.

- For critical state transitions (e.g., “Call Ended”) losing a message can leave a room marked “active” in your database forever, leaking resources.
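The at-most-once behaviour is easy to see in a toy model. The `EphemeralBus` class below is a hypothetical in-process imitation of Pub/Sub semantics, not a Redis client; it only illustrates that nothing is stored for absent subscribers.

```python
from collections import defaultdict

class EphemeralBus:
    """Toy in-process model of Pub/Sub semantics; not a Redis client."""
    def __init__(self):
        self.subscribers = defaultdict(list)  # channel -> list of callbacks

    def subscribe(self, channel, callback):
        self.subscribers[channel].append(callback)

    def unsubscribe(self, channel, callback):
        self.subscribers[channel].remove(callback)

    def publish(self, channel, message) -> int:
        """Deliver to whoever is listening right now; nothing is stored."""
        listeners = list(self.subscribers.get(channel, []))
        for callback in listeners:
            callback(message)
        return len(listeners)  # like PUBLISH, report how many receivers got it

bus = EphemeralBus()
inbox = []
deliver = inbox.append

bus.subscribe("user:b", deliver)
bus.publish("user:b", "candidate-1")   # delivered
bus.unsubscribe("user:b", deliver)     # the node restarts
bus.publish("user:b", "call-ended")    # nobody listening: dropped
bus.subscribe("user:b", deliver)       # the node is back, but there is no replay
print(inbox)  # ['candidate-1']
```

The `call-ended` message is gone for good, which is the resource-leak scenario described above.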


RabbitMQ – The Reliability Layer

RabbitMQ implements AMQP (Advanced Message Queuing Protocol) and acts as a broker, not just a router.

Internals & Reliability

- Messages flow through exchanges to queues.

- With manual acknowledgments (ACKs), a message stays on the queue until a consumer confirms it; with durable queues and persistent messages, it also survives a broker restart.

- If a consumer crashes, its TCP connection breaks, and RabbitMQ re-queues every message that consumer had not yet acknowledged so another consumer can take it.

This at‑least‑once guarantee is non‑negotiable for control‑plane events.

Example:

Room Created → Start Cloud Recording.

If you send this via Redis and the recording service blips, the recording never starts. The call proceeds, but the compliance file is missing, which in a regulated setting (HIPAA, for example) can mean a violation.

With RabbitMQ, the Start Recording job sits in a durable queue until a recorder comes back online and processes it.
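A sketch of what the recorder's consumer might look like, assuming `message` is an `aio_pika` incoming message (its `process()` context manager acks on a clean exit and, with `requeue=True`, rejects and re-queues when the body raises). The JSON payload shape, `start_recording`, and the in-memory `processed_ids` set are assumptions made for illustration. Because at-least-once delivery can hand over the same job twice, the handler also deduplicates:

```python
import json

# Hypothetical dedupe store; a real deployment would use a shared database or Redis.
processed_ids = set()

async def start_recording(room_id: str) -> None:
    """Placeholder for the real call into the recording service (hypothetical)."""

async def handle_recording_job(message, recorder=start_recording) -> None:
    # `message` is assumed to be an aio_pika IncomingMessage.
    # process(requeue=True) acks when the block exits cleanly and
    # rejects with requeue when the block raises.
    async with message.process(requeue=True):
        job = json.loads(message.body)
        # Prefer the publisher-assigned id; fall back to a key derived from the job.
        dedupe_key = message.message_id or f"recording:{job['room_id']}"
        if dedupe_key in processed_ids:
            return  # redelivery of a job that already ran: ack and skip
        await recorder(job["room_id"])
        processed_ids.add(dedupe_key)
```

If the recorder raises, the job goes back on the queue rather than disappearing, which is the property Redis Pub/Sub cannot offer.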

The Latency Tax

Reliability is expensive. RabbitMQ writes persistent messages to disk (or to a durable store) and performs additional handshakes for ACKs, which adds latency compared with Redis Pub/Sub. In practice the added latency is usually tens of milliseconds, which is acceptable for control‑plane traffic but not for the high‑frequency ICE candidate stream.

Putting It All Together

| Traffic Type | Recommended Bus | Guarantees | Typical Latency |
|---|---|---|---|
| ICE candidates, SDP offers/answers (high‑frequency, latency‑sensitive) | Redis Pub/Sub | At‑most‑once (loss tolerable) | < 1 ms |
| Call‑control events (room creation, recording start/stop, call termination) | RabbitMQ | At‑least‑once (durable) | 10‑30 ms |
| Hybrid scenarios (e.g., fallback when Redis is unavailable) | Both (Redis primary, RabbitMQ fallback) | Depends on fallback logic | – |

By splitting the signaling plane into a fast, volatile layer (Redis) and a reliable, durable layer (RabbitMQ), you avoid split‑brain isolation while keeping call‑setup latency low and guaranteeing that critical state changes are never lost.

Happy signaling!

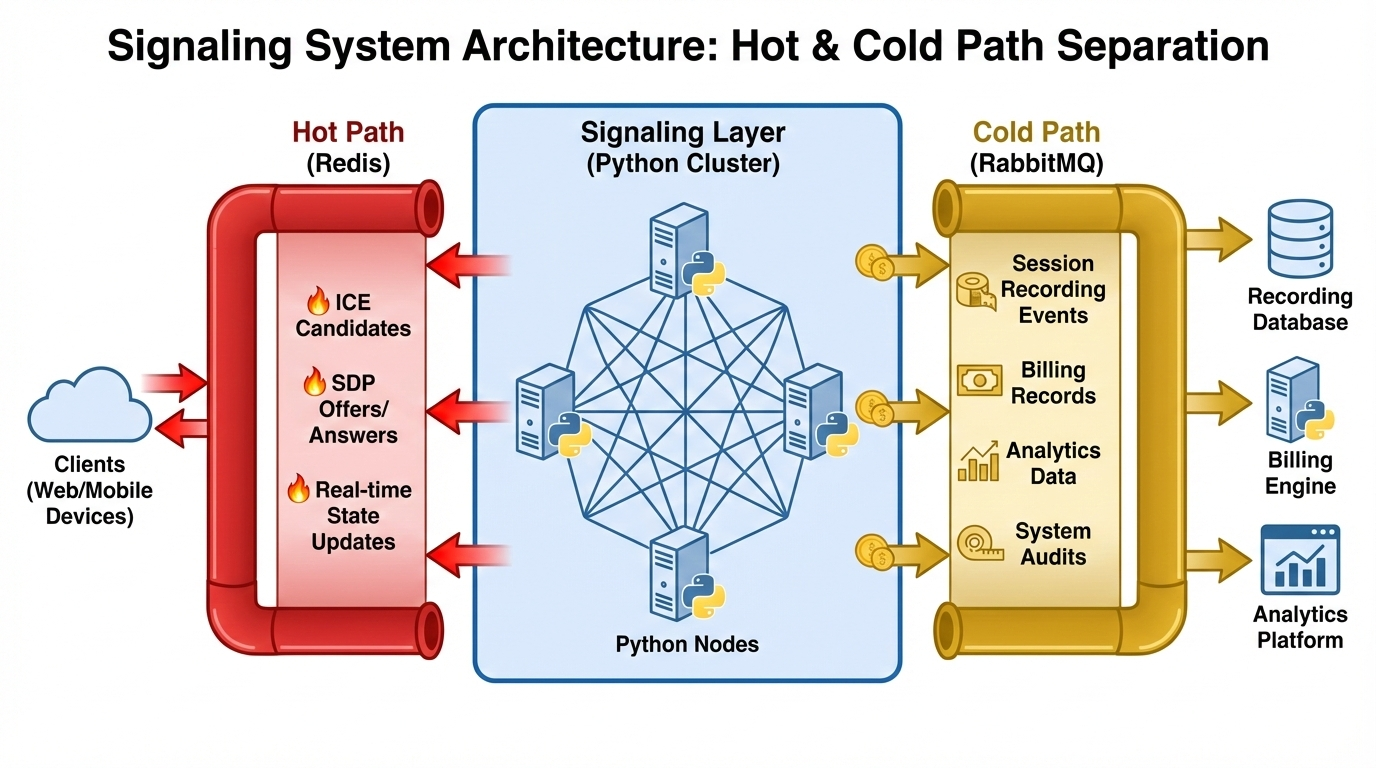

# The Hybrid Architecture: A Dual‑Bus Approach

The most robust production systems utilize a **Hybrid Architecture**.

We classify traffic into two lanes:

* **Hot Path** (Ephemeral) – low‑latency, fire‑and‑forget signals.

* **Cold Path** (Durable) – transactional events that must be persisted.
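One way to make the lane split explicit in code is a small classifier that every outgoing message passes through. The event names below are illustrative, and defaulting unknown types to the durable lane is a design assumption of this sketch, not something prescribed above:

```python
from enum import Enum

class Lane(Enum):
    HOT = "redis_pubsub"   # ephemeral, at-most-once
    COLD = "rabbitmq"      # durable, at-least-once

# Illustrative event names; a real system will have its own vocabulary.
HOT_TYPES = {"sdp.offer", "sdp.answer", "ice.candidate", "cursor.move", "typing"}

def choose_lane(event_type: str) -> Lane:
    """Route known latency-sensitive signals to the hot path, everything else to the cold path."""
    if event_type in HOT_TYPES:
        return Lane.HOT
    # Unknown types fall back to the durable lane: a slow event is cheaper than a lost one.
    return Lane.COLD

print(choose_lane("ice.candidate"))  # Lane.HOT
print(choose_lane("room.ended"))     # Lane.COLD
```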

---


### Lane 1: The Hot Path (Redis)

**Traffic:** SDP offers/answers, ICE candidates, cursor movements, typing indicators.

**Goal:** Minimal latency.

**Implementation**

1. Each user connects to a signaling node.

2. The node subscribes to a unique Redis channel `user:{uuid}`.

3. When another node needs to send data to that user, it publishes to that channel.
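The three steps above can be sketched as follows. The code assumes `redis_client` is a `redis.asyncio.Redis` instance and `pubsub` is the object returned by its `pubsub()` method; the helper names are hypothetical. On a single, non-clustered Redis instance, `PUBLISH` returns the number of subscribers that received the message, which gives a cheap cluster-wide "is this user connected anywhere?" signal:

```python
import json

def user_channel(user_id: str) -> str:
    """Channel naming convention from step 2: user:{uuid}."""
    return f"user:{user_id}"

async def on_user_connected(pubsub, user_id: str) -> None:
    # `pubsub` is assumed to be the process-wide redis.asyncio PubSub object.
    await pubsub.subscribe(user_channel(user_id))

async def on_user_disconnected(pubsub, user_id: str) -> None:
    await pubsub.unsubscribe(user_channel(user_id))

async def send_to_user(redis_client, user_id: str, payload: dict) -> bool:
    # PUBLISH returns how many subscribers received the message,
    # so 0 means no node currently holds a socket for this user.
    receivers = await redis_client.publish(user_channel(user_id), json.dumps(payload))
    return receivers > 0
```

A `False` result from `send_to_user` is the point where a "User Offline" response becomes accurate again, because it reflects every node rather than only the local one.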

**Library:** `redis.asyncio` (formerly `aioredis`).

**Pattern:** *Fire‑and‑forget*. If a message drops, the UI handles the retry or simply ignores it (e.g., a lost cursor update is irrelevant 100 ms later).

### Lane 2: The Cold Path (RabbitMQ)

**Traffic:** Room lifecycle events (create/destroy), webhook triggers, billing metering, recording jobs.

**Goal:** Transactional integrity.

**Implementation**

When a meeting ends, the signaling node publishes a `room.ended` event to a **topic** exchange in RabbitMQ. The event is routed to multiple queues:

| Queue | Purpose |
|------------------|-------------------------------------------|
| `billing_queue` | Calculates duration and charges the customer |
| `cleanup_queue` | Shuts down the media‑server (SFU) resources |
| `analytics_queue`| Aggregates quality statistics |
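A sketch of how that topology might be declared, assuming `channel` is an `aio_pika` channel. The exchange name `room.events` is made up for this example, and passing the exchange type as the string `"topic"` (rather than `aio_pika.ExchangeType.TOPIC`) is a convenience this sketch relies on so that it needs no import:

```python
QUEUES = ("billing_queue", "cleanup_queue", "analytics_queue")

async def declare_room_topology(channel, exchange_name: str = "room.events"):
    # `channel` is assumed to be an aio_pika channel; "room.events" is a made-up name.
    exchange = await channel.declare_exchange(exchange_name, "topic", durable=True)
    for name in QUEUES:
        # Durable queues survive a broker restart.
        queue = await channel.declare_queue(name, durable=True)
        # Every queue bound with this key receives its own copy of room.ended.
        await queue.bind(exchange, routing_key="room.ended")
    return exchange
```

Because each queue gets its own copy, a slow analytics consumer cannot delay billing or cleanup.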

**Library:** `aio_pika`.

**Pattern:** *Publisher confirms* + *consumer acks*. RabbitMQ delivers each billing event **at least once**; idempotency checks in the consumer are what make the processing effectively exactly-once.

Implementing Async Architectures in Python

When using an asyncio-based framework (Quart, FastAPI, etc.) you must manage connection pools carefully. Opening a new Redis connection per WebSocket will quickly exhaust file descriptors (and Redis's own `maxclients` limit) at tens of thousands of sockets.

The Multiplexed Redis Listener

Maintain one global Redis connection for publishing and one for subscribing per process. Reading from a subscription is a long-running loop, so run it in a dedicated background task that dispatches messages to the appropriate WebSocket instances.

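```python
# Conceptual architecture for multiplexed Redis → WebSocket
import asyncio
import json

active_websockets = {}  # Map user_id → websocket

def extract_target(message):
    # Channels are named user:{uuid}, so the target id is the channel suffix.
    # Assumes the client's default decode_responses=False (channel names are bytes).
    return message["channel"].decode().split(":", 1)[1]

async def redis_reader(pubsub):
    # pubsub: a redis.asyncio PubSub already subscribed to this node's user channels
    async for message in pubsub.listen():
        if message["type"] != "message":
            continue  # skip subscribe/unsubscribe confirmations
        if ws := active_websockets.get(extract_target(message)):
            await ws.send_json(json.loads(message["data"]))  # assumes JSON payloads

# On startup, from inside the running event loop
asyncio.create_task(redis_reader(global_pubsub_channel))
```

The Async AMQP Consumer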

aio_pika's robust connections (`connect_robust`) reconnect and restore channel state automatically. A critical production pattern is back-pressure: if your signaling server is overwhelmed by incoming WebSocket frames, it should not pull more messages from RabbitMQ. Set a prefetch_count so the broker delivers only as many unacknowledged messages as the server can handle, leaving the rest for other nodes (automatic load balancing).
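A sketch of such a consumer, assuming `channel` is an `aio_pika` channel and `handler` is your own coroutine that takes the raw message body. The function name, the default prefetch of 10, and the choice to log and re-queue on failure are assumptions of this example:

```python
import logging

log = logging.getLogger("signaling.consumer")

async def consume_with_backpressure(channel, queue_name: str, handler, prefetch: int = 10) -> None:
    # `channel` is assumed to be an aio_pika channel.
    # The broker keeps at most `prefetch` unacknowledged messages in flight to this consumer.
    await channel.set_qos(prefetch_count=prefetch)
    queue = await channel.declare_queue(queue_name, durable=True)
    async with queue.iterator() as messages:
        async for message in messages:
            try:
                # Ack on success; reject with requeue if the handler raises.
                async with message.process(requeue=True):
                    await handler(message.body)
            except Exception:
                # Keep consuming after a failed message instead of killing the loop.
                log.exception("handler failed; message was re-queued")
```

Re-queueing unconditionally can spin on a message that always fails, so a production consumer would normally pair this with a retry limit or a dead-letter exchange.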

Decision Matrix: When to Use What

| Feature | Redis Pub/Sub | RabbitMQ |
|---|---|---|
| Primary Metric | Latency (< 1 ms) | Reliability (durability) |
| Delivery Guarantee | At‑most‑once (lossy) | At‑least‑once (persistent) |
| Throughput | High (millions /sec) | Moderate (thousands /sec) |
| Complexity | Low (simple commands) | High (exchanges, bindings) |
| Ideal Payload | ICE candidates, mouse positions | Billing events, start/stop recording |
| Python Library | redis.asyncio | aio_pika |

Conclusion: The Nervous System of Scale

- A single signaling server is a prototype.

- A distributed cluster is a product.

Introducing a message bus decouples the socket connection from application logic. Signaling nodes become stateless “dumb pipes” that merely ferry data between the client and the “nervous system”.

Choosing between Redis and RabbitMQ is not binary. The most resilient WebRTC architectures distinguish between signals (which flow like water) and events (which must be recorded like stone). By hybridizing these technologies you build a platform that feels instant to the user while remaining audit‑proof to the business.


Channel: The Lalit Official