StrongDM's AI team build serious software without even looking at the code

Source: Hacker News

7 February 2026

Last week I hinted at a demo I had seen from a team implementing what Dan Shapiro called the Dark Factory level of AI adoption, where no human even looks at the code the coding agents are producing. That team was part of StrongDM, and they’ve just shared the first public description of how they are working in “Software Factories and the Agentic Moment”:

We built a Software Factory: non‑interactive development where specs + scenarios drive agents that write code, run harnesses, and converge without human review. […]

In kōan or mantra form

- Why am I doing this? (implied: the model should be doing this instead)

In rule form

- Code must not be written by humans

- Code must not be reviewed by humans

In practical form

- If you haven’t spent at least $1,000 on tokens today per human engineer, your software factory has room for improvement.

I think the most interesting of these, without a doubt, is “Code must not be reviewed by humans.” How could that possibly be a sensible strategy when we all know how prone LLMs are to making inhuman mistakes?

I’ve seen many developers recently acknowledge the November 2025 inflection point, where Claude Opus 4.5 and GPT 5.2 appeared to turn the corner on how reliably a coding agent could follow instructions and take on complex coding tasks. StrongDM’s AI team was founded in July 2025 based on an earlier inflection point relating to Claude 3.5 Sonnet:

The catalyst was a transition observed in late 2024: with the second revision of Claude 3.5 (October 2024), long‑horizon agentic coding workflows began to compound correctness rather than error.

By December 2024, the model’s long‑horizon coding performance was unmistakable via Cursor’s YOLO mode.

Their new team started with the rule “no hand‑coded software”—radical for July 2025, but something I’m seeing a significant number of experienced developers adopt as of January 2026.

The core problem

If you’re not writing anything by hand, how do you ensure that the code actually works?

Having the agents write tests only helps if they don’t cheat and assert true.

This feels like the most consequential question in software development right now: how can you prove that software you are producing works if both the implementation and the tests are being written for you by coding agents? (See my earlier post on proving software works.)
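The “cheat and assert true” problem is easy to picture. Here is a hypothetical Python example of my own (not StrongDM code): `apply_discount` stands in for agent-written code, the first test passes whatever the function does, and the second pins down observable behavior, including a rounding edge case and an error path.

```python
def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical agent-written code: discounted price, rounded down to whole cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents * (100 - percent) // 100


def test_discount_cheating() -> None:
    # Calls the code but never checks a result, so it passes no matter what.
    apply_discount(1000, 10)
    assert True


def test_discount_meaningful() -> None:
    # Pins down behavior: a normal case, a rounding edge case, and an error path.
    assert apply_discount(1000, 10) == 900
    assert apply_discount(999, 50) == 499  # 499.5 rounds down
    assert apply_discount(1000, 0) == 1000
    try:
        apply_discount(1000, 150)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for percent > 100")
```

If `apply_discount` were rewritten to return 0 for every input, the first test would still pass and the second would fail on its first assertion.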

StrongDM’s answer: scenario testing

StrongDM was inspired by scenario testing (Cem Kaner, 2003). As they describe it:

We repurposed the word scenario to represent an end‑to‑end “user story”, often stored outside the codebase (similar to a “holdout” set in model training), which could be intuitively understood and flexibly validated by an LLM.

Because much of the software we grow itself has an agentic component, we transitioned from boolean definitions of success (“the test suite is green”) to a probabilistic and empirical one. We use the term satisfaction to quantify this validation: of all the observed trajectories through all the scenarios, what fraction of them likely satisfy the user?

Treating scenarios as hold‑out sets—used to evaluate the software but not stored where the coding agents can see them—is fascinating. It imitates aggressive testing by an external QA team—an expensive but highly effective way of ensuring quality in traditional software.
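StrongDM doesn’t say how satisfaction is computed, so the following is only a sketch of the definition quoted above, under assumptions of my own: every scenario is run several times, each run yields a trajectory (reduced here to a list of step labels), and a judge function stands in for the LLM that decides whether a trajectory likely satisfies the user. `Trajectory`, `satisfaction` and `final_step_judge` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Trajectory:
    """One observed run through a scenario (hypothetical shape)."""
    scenario_id: str
    steps: list[str]


def satisfaction(
    trajectories: Iterable[Trajectory],
    judge: Callable[[Trajectory], bool],
) -> float:
    """Fraction of all observed trajectories, across all scenarios, the judge accepts."""
    total = satisfied = 0
    for trajectory in trajectories:
        total += 1
        if judge(trajectory):
            satisfied += 1
    if total == 0:
        raise ValueError("no trajectories observed")
    return satisfied / total


def final_step_judge(trajectory: Trajectory) -> bool:
    # Stand-in for an LLM judge: accept runs whose last step reports success.
    return bool(trajectory.steps) and trajectory.steps[-1] == "done"


if __name__ == "__main__":
    runs = [
        Trajectory("grant-jira-access", ["request", "approve", "done"]),
        Trajectory("grant-jira-access", ["request", "timeout"]),
        Trajectory("revoke-slack-access", ["revoke", "done"]),
    ]
    print(f"{satisfaction(runs, final_step_judge):.2f}")  # prints 0.67
```

Because the result is a fraction rather than a green or red suite, it can be tracked across repeated runs, which fits software whose own behavior is partly agentic and therefore not fully deterministic.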

The Digital Twin Universe (DTU)

The part of the demo that made the strongest impression on me was StrongDM’s concept of a Digital Twin Universe.

The software they were building helped manage user permissions across a suite of connected services. This in itself was notable—security software is the last thing you would expect to be built using unreviewed LLM code!

[The twins in the Digital Twin Universe] are behavioral clones of the third‑party services our software depends on. We built twins of Okta, Jira, Slack, Google Docs, Google Drive, and Google Sheets, replicating their APIs, edge cases, and observable behaviors.

With the DTU they can:

- validate at volumes and rates far exceeding production limits,

- test failure modes that would be dangerous or impossible against live services,

- run thousands of scenarios per hour without hitting rate limits, triggering abuse detection, or accumulating API costs.
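Real twins are standalone services, but the last two bullets can be illustrated in-process. The sketch below is a hypothetical in-memory stand-in for a user-directory service, loosely Okta-flavored (the class, method names and ID format are my own, not Okta’s API). It has no rate limit, so volume is bounded only by CPU, and a `fail_next` counter injects outages that would be impractical to provoke against a live service.

```python
import itertools
from dataclasses import dataclass, field


class ServiceUnavailable(Exception):
    """Simulated HTTP 503 raised by the twin."""


@dataclass
class UserDirectoryTwin:
    """Hypothetical in-memory clone of a user-directory service (loosely Okta-like)."""
    users: dict[str, dict] = field(default_factory=dict)
    fail_next: int = 0  # how many upcoming calls should fail with an injected outage
    _ids: itertools.count = field(default_factory=lambda: itertools.count(1), repr=False)

    def _maybe_fail(self) -> None:
        if self.fail_next > 0:
            self.fail_next -= 1
            raise ServiceUnavailable("injected outage")

    def create_user(self, login: str) -> str:
        self._maybe_fail()
        user_id = f"u-{next(self._ids):05d}"
        self.users[user_id] = {"login": login, "status": "ACTIVE"}
        return user_id

    def deactivate_user(self, user_id: str) -> None:
        self._maybe_fail()
        self.users[user_id]["status"] = "DEPROVISIONED"


def create_with_retry(twin: UserDirectoryTwin, login: str, attempts: int = 3) -> str:
    """Code under test: retry transient outages, give up after `attempts` tries."""
    for attempt in range(1, attempts + 1):
        try:
            return twin.create_user(login)
        except ServiceUnavailable:
            if attempt == attempts:
                raise
    raise ValueError("attempts must be at least 1")


if __name__ == "__main__":
    twin = UserDirectoryTwin()
    # Volume: no rate limit, so 10,000 calls cost nothing but CPU time.
    for i in range(10_000):
        twin.create_user(f"user{i}@example.test")
    # Failure mode: two outages in a row, then success on the third try.
    twin.fail_next = 2
    print(create_with_retry(twin, "new.hire@example.test"))  # prints u-10001
```

The fault lives in the twin, not in the code under test: `create_with_retry` is exercised exactly as it would be in production, only against a dependency that misbehaves on demand.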

How they built the twins

- Dump the full public API documentation of a target service (e.g., Okta) into an agent harness.

- Instruct the agent to generate a self‑contained Go binary that imitates that API.

- Optionally add a simplified UI on top to help complete the simulation.
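I haven’t seen StrongDM’s generated Go twins, so as a rough illustration of what “a self‑contained binary that imitates that API” means, here is a stdlib-only Python sketch that serves an in-memory imitation of two Okta endpoints, `GET` and `POST` on `/api/v1/users`. The paths follow Okta’s public Users API; the request and response bodies, the ID format and the error shape are heavily simplified assumptions, and `start_twin` and `create_user` are hypothetical helpers.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

USERS: dict[str, dict] = {}  # in-memory state; a real twin would model far more
LOCK = threading.Lock()


class TwinHandler(BaseHTTPRequestHandler):
    """Serves a simplified imitation of GET/POST /api/v1/users."""

    def _send_json(self, status: int, body: object) -> None:
        data = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def do_GET(self) -> None:
        if self.path != "/api/v1/users":
            self._send_json(404, {"errorSummary": "Not found"})
            return
        with LOCK:
            users = list(USERS.values())
        self._send_json(200, users)

    def do_POST(self) -> None:
        if self.path != "/api/v1/users":
            self._send_json(404, {"errorSummary": "Not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
            login = payload["profile"]["login"]
        except (ValueError, KeyError, TypeError):
            self._send_json(400, {"errorSummary": "Invalid request body"})
            return
        with LOCK:
            user_id = f"twin-{len(USERS) + 1:05d}"
            user = {"id": user_id, "status": "ACTIVE", "profile": {"login": login}}
            USERS[user_id] = user
        self._send_json(200, user)

    def log_message(self, format: str, *args: object) -> None:
        pass  # silence per-request logging


def start_twin(port: int = 0) -> ThreadingHTTPServer:
    """Run the twin on a background thread; port 0 asks the OS for a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), TwinHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def create_user(base_url: str, login: str) -> dict:
    """Tiny client, as the software under test might call the service."""
    request = urllib.request.Request(
        f"{base_url}/api/v1/users",
        data=json.dumps({"profile": {"login": login}}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


if __name__ == "__main__":
    server = start_twin()
    base = f"http://127.0.0.1:{server.server_address[1]}"
    print(create_user(base, "new.hire@example.test")["id"])  # prints twin-00001
    server.shutdown()
    server.server_close()
```

Pointing the software under test at the twin’s base URL instead of a real tenant is what lets scenario runs hammer it without quotas or side effects.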

Now, with independent clones of those services, free from rate limits or usage quotas, their army of simulated testers can go wild. Their scenario tests become scripts for agents to execute continuously against the new systems as they are being built.

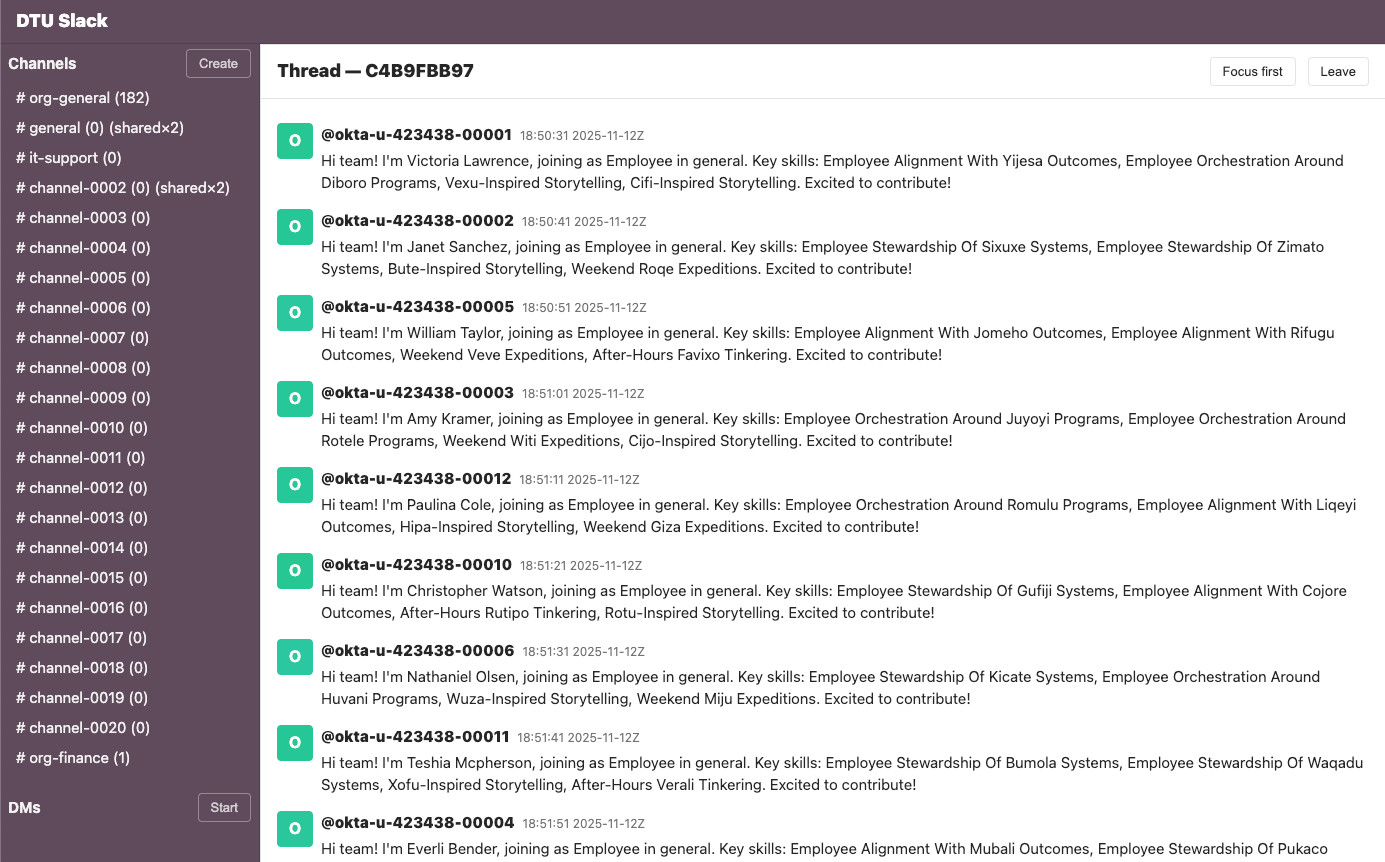

Example: Slack twin

The screenshot below shows a Slack‑like interface generated by the DTU. It illustrates how the testing process works, displaying a stream of simulated Okta users who are about to need access to different simulated systems.

[Image: Screenshot of a Slack‑like interface titled “DTU Slack” showing a thread view (Thread — C4B9FBB97) with “Focus first” and “Leave” buttons. The left sidebar lists channels including #org‑general (182), #general (0) (shared×2), #it‑support (0), #channel‑0002 (0) (shared×2), #channel‑0003 (0) through #channel‑0020 (0), #org‑finance (1), and a DMs section with a “Start” button. A “Create” button appears at the top of the sidebar. The main thread shows approximately nine automated introduction messages from users with Okta IDs (e.g., @okta-u-423438-00001, @okta-u-423438-00002, etc.), all timestamped 2025‑11‑12Z between 18:50:31 and 18:51:51. Each message follows the format “Hi team! I’m [Name], joining as Employee in general. Key skills: [fictional skill phrases]. Excited to contribute!” All users have red/orange “O” avatar icons.]
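The screenshot suggests the simulated users are produced from a template. A hypothetical generator for that kind of test data could look like the following; the `okta-u-<run>-<index>` handle pattern is only inferred from the IDs visible in the screenshot, and the names and skills are placeholders I made up.

```python
import random
from dataclasses import dataclass

# Placeholder inputs; the real generator's data sources are unknown.
FIRST_NAMES = ["Avery", "Jordan", "Priya", "Mateo", "Lin", "Sam"]
SKILLS = ["vendor negotiation", "incident triage", "SQL reporting",
          "onboarding docs", "budget forecasting"]


@dataclass(frozen=True)
class SimulatedUser:
    handle: str
    name: str
    skills: tuple[str, ...]

    def intro_message(self, channel: str = "general") -> str:
        """Render the introduction in the format seen in the screenshot."""
        return (
            f"Hi team! I'm {self.name}, joining as Employee in {channel}.\n"
            f"Key skills: {', '.join(self.skills)}.\n"
            "Excited to contribute!"
        )


def simulated_users(run_id: int, count: int, seed: int = 0) -> list[SimulatedUser]:
    """Deterministic batch of fake users; the same seed yields the same batch."""
    rng = random.Random(seed)
    return [
        SimulatedUser(
            handle=f"okta-u-{run_id}-{i:05d}",
            name=rng.choice(FIRST_NAMES),
            skills=tuple(rng.sample(SKILLS, 2)),
        )
        for i in range(1, count + 1)
    ]


if __name__ == "__main__":
    for user in simulated_users(run_id=423438, count=3):
        print(f"@{user.handle}: {user.intro_message()}")
```

Seeding the random generator keeps each batch reproducible, so a failing scenario can be rerun with exactly the same cast of users.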

Why this matters

This ability to quickly spin up a useful clone of a subset of Slack demonstrates how disruptive the new generation of coding‑agent tools can be.

Creating a high‑fidelity clone of a significant SaaS application has always been possible, but never economically feasible. Generations of engineers may have wanted a full in‑memory replica of their CRM to test against, but self‑censored the proposal to build it.

StrongDM’s techniques page is worth a look too. In addition to the Digital Twin Universe, it introduces terms such as:

- Gene Transfusion – agents extract patterns from existing systems and reuse them elsewhere.

- Semports – directly port code from one language to another.

- Pyramid Summaries – provide multiple levels of summary so an agent can enumerate short ones quickly and zoom in on more detailed information as needed.
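Pyramid Summaries are described in a single sentence, so this is only my guess at a minimal data structure for the idea: each item carries summaries ordered from shortest to most detailed, an agent lists everything at the shallowest level, then zooms into the few items it needs. `Pyramid`, `overview` and `zoom` are hypothetical names, and the example entries are invented.

```python
from dataclasses import dataclass, field


@dataclass
class Pyramid:
    """Hypothetical store of multi-level summaries, shortest first."""
    entries: dict[str, list[str]] = field(default_factory=dict)

    def add(self, key: str, levels: list[str]) -> None:
        # levels[0] is a one-line summary; the last level is the most detailed text.
        if not levels:
            raise ValueError("need at least one summary level")
        self.entries[key] = list(levels)

    def _at(self, key: str, level: int) -> str:
        levels = self.entries[key]
        # Clamp so asking for more detail than exists returns the deepest level.
        return levels[max(0, min(level, len(levels) - 1))]

    def overview(self, level: int = 0) -> dict[str, str]:
        """Cheap enumeration: every item at one (usually shallow) level."""
        return {key: self._at(key, level) for key in self.entries}

    def zoom(self, key: str, level: int) -> str:
        """Spend more context on a single item the agent has decided matters."""
        return self._at(key, level)


if __name__ == "__main__":
    p = Pyramid()
    p.add("billing/", ["Invoices and payments.",
                       "Generates monthly invoices and retries failed charges."])
    p.add("auth/", ["Login and sessions.",
                    "Validates tokens and manages session cookies."])
    print(p.overview())
    print(p.zoom("auth/", 1))
```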

StrongDM AI releases

Attractor

- Repository: github.com/strongdm/attractor

- Description: The non‑interactive coding agent at the heart of their software factory.

- Note: The repo contains no code, only three Markdown files describing the spec in meticulous detail, plus a README note that you should feed those specs into your coding agent of choice.

CXDB

- Repository: github.com/strongdm/cxdb

- Description: A more traditional release with:

  - 16,000 lines of Rust

  - 9,500 lines of Go

  - 6,700 lines of TypeScript

- Function: Their “AI Context Store” – a system for storing conversation histories and tool outputs in an immutable DAG.

- Comparison: Similar to my LLM tool’s SQLite logging mechanism but far more sophisticated. I may have to gene‑transfuse some ideas out of this one!
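I haven’t studied CXDB’s internals, so this is not how it works; it’s a sketch of the general idea of an immutable DAG for conversation turns and tool outputs, assuming content addressing. Each node’s ID is a SHA‑256 hash of its kind, content and parent IDs, so nodes can’t be edited in place and two branches of a conversation share their common history. `ContextStore` and the node layout are my own.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    kind: str                  # e.g. "user", "assistant", "tool_output"
    content: str
    parents: tuple[str, ...]   # IDs of the nodes this one follows


class ContextStore:
    """Append-only, content-addressed DAG; stored nodes are never mutated."""

    def __init__(self) -> None:
        self._nodes: dict[str, Node] = {}

    def append(self, kind: str, content: str, parents: tuple[str, ...] = ()) -> str:
        for parent in parents:
            if parent not in self._nodes:
                raise KeyError(f"unknown parent {parent}")
        node = Node(kind, content, tuple(parents))
        # Hash a canonical JSON encoding so identical nodes always get identical IDs.
        blob = json.dumps([node.kind, node.content, list(node.parents)]).encode()
        node_id = hashlib.sha256(blob).hexdigest()
        self._nodes.setdefault(node_id, node)
        return node_id

    def get(self, node_id: str) -> Node:
        return self._nodes[node_id]

    def history(self, node_id: str) -> list[Node]:
        """Follow first parents back to a root and return the chain oldest-first."""
        chain = [self._nodes[node_id]]
        while chain[-1].parents:
            chain.append(self._nodes[chain[-1].parents[0]])
        chain.reverse()
        return chain


if __name__ == "__main__":
    store = ContextStore()
    root = store.append("user", "Who can access the finance channel?")
    tool = store.append("tool_output", '["alice", "bob"]', (root,))
    answer = store.append("assistant", "Alice and Bob.", (tool,))
    for node in store.history(answer):
        print(node.kind, node.content)
```

Appending identical content under the same parents returns the existing ID rather than creating a duplicate, which is what makes shared prefixes cheap.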

A glimpse of the future?

I visited the StrongDM AI team back in October as part of a small group of invited guests.

- Team: Justin McCarthy, Jay Taylor, and Navan Chauhan (the team had formed just three months earlier).

- Demo: Working demos of their coding‑agent harness, Digital Twin Universe clones of half a dozen services, and a swarm of simulated test agents running through scenarios.

- Timing: This was about a month before the Opus 4.5 / GPT 5.2 releases that made agentic coding significantly more reliable.

It felt like a glimpse of one potential future of software development, where engineers move from building code to building and then semi‑monitoring the systems that build the code—the Dark Factory.

Wait, $1,000 / day per engineer?

I glossed over this detail in my first published version of this post, but it deserves serious attention.

- Cost implication: If these patterns truly add $20,000 per month per engineer to your budget, they become far less interesting to me.

- Business model question: At that point, the challenge is whether you can create a profitable enough line of products to afford the enormous overhead of developing software this way.

- Competitive risk: Any competitor could potentially clone your newest features with a few hours of coding‑agent work.

I hope these patterns can be put into play with a much lower spend. I’ve personally found the $200 / month Claude Max plan gives me plenty of space to experiment with different agent patterns, but I’m not running a swarm of QA testers 24/7!
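The $20,000 figure follows from the daily spend if you assume roughly 20 working days a month (my assumption; the source only gives the daily number), and it is a hundred times the cost of the plan I use:

```latex
\begin{aligned}
\$1{,}000/\text{day} \times 20\ \text{working days/month} &= \$20{,}000/\text{month per engineer} \\
\frac{\$20{,}000/\text{month}}{\$200/\text{month}} &= 100
\end{aligned}
```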

Takeaways

- There’s a lot to learn from StrongDM even for teams and individuals who aren’t going to burn thousands of dollars on token costs.

- I’m particularly invested in the question of what it takes to have agents prove that their code works without needing to review every line they produce.