Show HN: Steerling-8B, a language model that can explain any token it generates

Source: Hacker News

Author: Guide Labs Team

Published: February 23, 2026

We are releasing Steerling‑8B, the first interpretable model that can trace any token it generates to its input context, to concepts a human can understand, and to its training data.

- Training data: 1.35 trillion tokens

- Performance: Comparable to models trained on 2–7× more data

Key capabilities

- Concept steering at inference: Suppress or amplify specific concepts without retraining.

- Training‑data provenance: Retrieve the source data for any generated text chunk.

- Inference‑time alignment: Control concepts directly, replacing thousands of safety‑training examples with explicit, concept‑level steering.

Model overview

For the first time, a language model at the 8‑billion‑parameter scale can explain every token it produces in three key ways. Specifically, for any group of output tokens that Steerling generates, we can trace those tokens to:

- [Input context] – the prompt tokens

- [Concepts] – human‑understandable topics in the model’s representations

- [Training data] – the training data that drove the output

Artifacts

We are releasing the weights of a base model trained on 1.35 trillion tokens, together with companion code to interact with and explore the model.

Steerling‑8B in action

Below we show Steerling‑8B generating text from prompts in various categories. Select an example, then click any highlighted chunk of the output. The panel below updates to show:

- Input‑feature attribution: which tokens in the input prompt strongly influenced that chunk.

- Concept attribution: the ranked list of concepts that the model routed through to produce that chunk, covering both tone (e.g., analytical, clinical) and content (e.g., genetic‑alteration methodologies).

- Training‑data attribution: how the concepts in that chunk are distributed across training sources (ArXiv, Wikipedia, FLAN, etc.), showing where the model’s knowledge originates.


Model architecture

Steerling is built on a causal discrete diffusion model backbone, which lets us steer generation across multi‑token spans rather than only at the next token.

The key design choice is decomposing the model’s embeddings into three explicit pathways:

- ~33 K supervised “known” concepts – curated concepts supplied during training.

- ~100 K “discovered” concepts – patterns the model learns autonomously.

- Residual – captures any remaining information not covered by the first two pathways.

We then add training losses that ensure the model routes signal through concepts without sacrificing performance. The concepts feed into the logits through a linear path, so every prediction decomposes exactly into per‑concept contributions, and those contributions can be edited at inference time without retraining.
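To make the linear path concrete, here is a minimal toy sketch of how a logit vector could decompose into per‑concept contributions plus a residual, and how scaling one concept changes the output. All names, shapes, and values (`W`, `activations`, `steer`, a 5‑token vocabulary, 4 concepts) are illustrative assumptions, not Steerling's actual code or API.

```python
import random

random.seed(0)

VOCAB = 5        # toy vocabulary size (assumption)
N_CONCEPTS = 4   # toy stand-in for the known + discovered concepts

# Hypothetical parameters: one row of logit weights per concept.
W = [[random.gauss(0, 1) for _ in range(VOCAB)] for _ in range(N_CONCEPTS)]
# Hypothetical concept activations at one position, plus residual logits.
activations = [0.9, 0.0, 1.5, 0.3]
residual_logits = [random.gauss(0, 0.1) for _ in range(VOCAB)]


def contributions(acts):
    """Per-concept contribution to every logit: acts[k] * W[k][v]."""
    return [[a * w for w in row] for a, row in zip(acts, W)]


def logits_from(acts, residual):
    """Sum the concept contributions and add the residual pathway."""
    contrib = contributions(acts)
    return [sum(c[v] for c in contrib) + residual[v] for v in range(VOCAB)]


def steer(acts, concept, scale):
    """Copy acts with one concept scaled (0 suppresses, >1 amplifies)."""
    edited = list(acts)
    edited[concept] *= scale
    return edited


base = logits_from(activations, residual_logits)
suppressed = logits_from(steer(activations, 2, 0.0), residual_logits)

# Linearity: the logit change equals the removed concept's contribution
# (up to floating-point rounding).
removed = contributions(activations)[2]
delta = [b - s for b, s in zip(base, suppressed)]
print(all(abs(d - r) < 1e-9 for d, r in zip(delta, removed)))
```

In this toy setup, suppressing a concept removes exactly its row of contributions from the logits, which is the property that makes inference‑time edits predictable.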

For the full architecture, training objectives, and scaling analysis, see Scaling Interpretable Models to 8B.

Performance

Despite being trained with significantly less compute than comparable models, Steerling‑8B achieves competitive results across standard benchmarks.

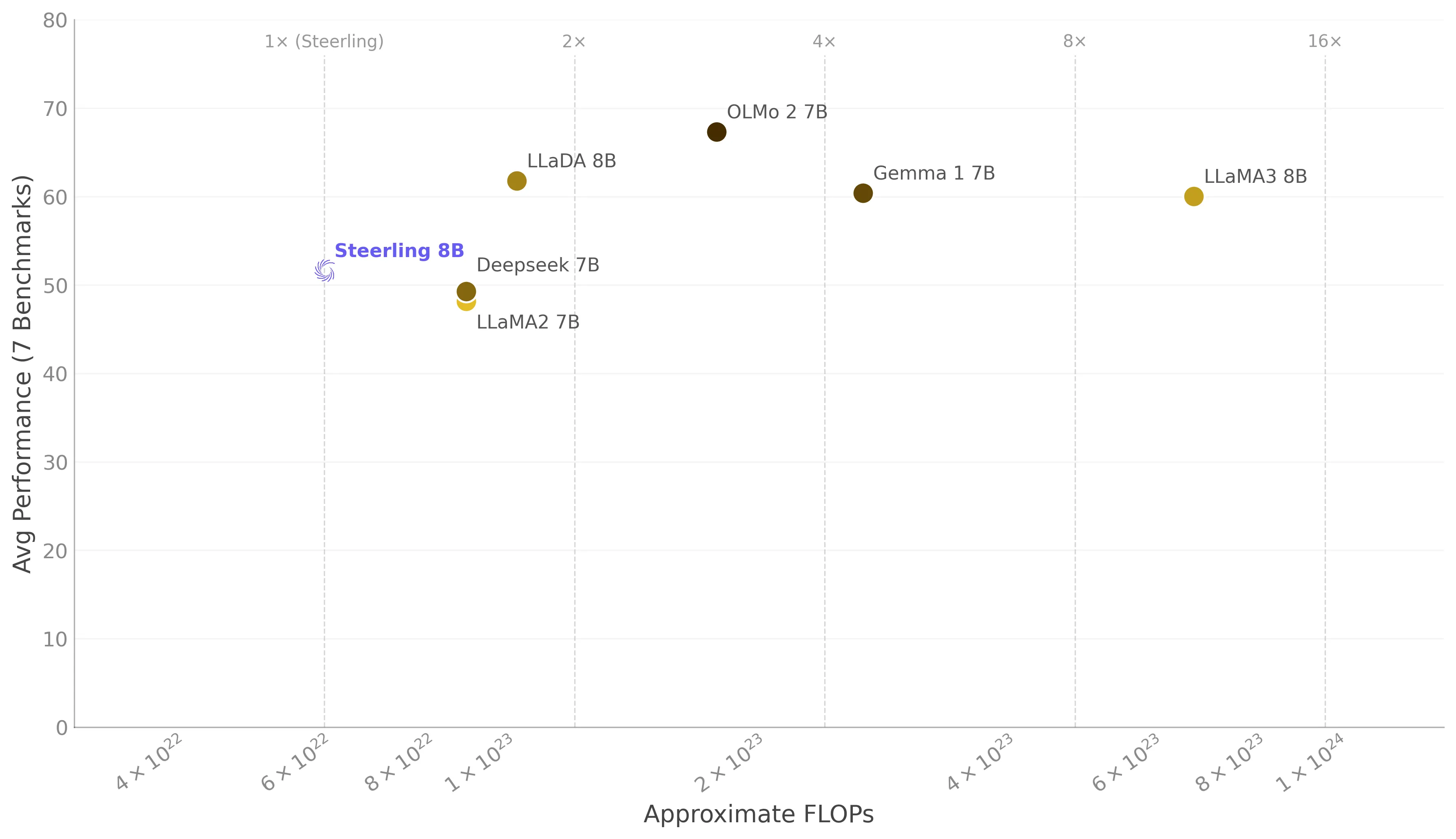

Average performance vs. training FLOPs

The scatter plot shows the average performance (across seven benchmarks) plotted against approximate training FLOPs on a log scale. Vertical lines indicate multiples of Steerling’s compute budget.

Steerling outperforms both LLaMA2‑7B and DeepSeek‑7B on overall average despite using fewer FLOPs, and remains within the range of models trained with 2–10× more compute.
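The post does not say how training FLOPs were estimated. A common rule of thumb for dense transformers is roughly 6 × parameters × training tokens. The sketch below applies it to the figures quoted here (8 billion parameters, 1.35 trillion tokens). Treating the parameter count as exactly 8e9, and assuming the rule carries over to a diffusion backbone, are both assumptions; the function name is illustrative.

```python
def approx_training_flops(n_params, n_tokens):
    """Rule-of-thumb estimate: ~6 FLOPs per parameter per training token."""
    return 6.0 * n_params * n_tokens


# Figures from the post; 8e9 is a rounded parameter count (assumption).
steerling_flops = approx_training_flops(8e9, 1.35e12)

# Compute budgets at a few multiples, like the vertical lines on the plot.
multiples = {k: k * steerling_flops for k in (2, 5, 10)}

print(f"{steerling_flops:.3e}")
for k, flops in multiples.items():
    print(f"{k}x: {flops:.3e}")
```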

Group‑average performance across task categories

The bar chart compares group‑average scores for the General and Math task categories.

Steerling’s performance spans a variety of benchmarks, from general‑purpose question answering to tasks that emphasize reasoning and mathematics.

Interpretability

In the previous update, we shared several ways to assess how interpretable a model’s representations are. Here we add another metric that gives insight into the model’s use of its concepts.

Concept‑module contribution

- On a held‑out validation set, more than 84% of the token‑level contribution comes from the concept module.

- This shows the model is not merely relying on the residual pathway to make predictions.

Why it matters:

If predictions genuinely flow through concepts, editing those concepts at inference time actually changes the model’s behavior, rather than merely nudging a side channel while the “real work” happens elsewhere.

Token‑level logit distribution of Steerling‑8B on a held‑out validation set.
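The post does not spell out how the concept‑module contribution is computed. One plausible reading, sketched here purely as an assumption, is the share of absolute logit mass on the observed token that comes from the concept pathway rather than the residual, averaged over tokens. The function name and the toy values are hypothetical.

```python
def concept_share(concept_logit, residual_logit):
    """Fraction of absolute logit mass on the target token due to concepts."""
    total = abs(concept_logit) + abs(residual_logit)
    return abs(concept_logit) / total if total else 0.0


# Hypothetical (concept, residual) logit pairs for the observed token
# at four positions; real values would come from a validation set.
pairs = [(4.0, 1.0), (3.0, 0.0), (1.0, 1.0), (6.0, 2.0)]

shares = [concept_share(c, r) for c, r in pairs]
mean_share = sum(shares) / len(shares)
print(mean_share)
```

Other definitions (signed contributions, or shares computed over the full logit vector) would give different numbers, so this is only one way to read the metric.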

Residual‑pathway ablation

A useful sanity check is to remove the residual pathway entirely:

- On several LM‑Harness tasks, dropping the residual has only a small effect on performance.

- This suggests the model’s predictive signal is largely routed through concepts rather than a generic “everything‑else” channel.

Change in model performance across a variety of benchmarks with and without the model’s residual portion.
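The ablation described above can be illustrated with a toy sketch: predict once from concept logits plus residual logits, once from concept logits alone, and compare accuracy. The examples and helper names are invented for illustration and say nothing about Steerling's real benchmark numbers.

```python
def argmax(xs):
    """Index of the largest value."""
    return max(range(len(xs)), key=xs.__getitem__)


def predict(concept_logits, residual_logits, use_residual=True):
    """Predicted token index, optionally dropping the residual pathway."""
    if use_residual:
        total = [c + r for c, r in zip(concept_logits, residual_logits)]
    else:
        total = list(concept_logits)
    return argmax(total)


# Hypothetical toy examples: (concept logits, residual logits, gold index).
examples = [
    ([2.0, 0.5, 0.1], [0.1, 0.2, 0.0], 0),
    ([0.3, 1.8, 0.2], [0.0, -0.1, 0.3], 1),
    ([1.0, 0.9, 0.2], [-0.3, 0.2, 0.0], 1),
    ([0.1, 0.2, 1.5], [0.2, 0.0, 0.1], 2),
]


def accuracy(use_residual):
    """Share of examples where the prediction matches the gold index."""
    hits = sum(predict(c, r, use_residual) == gold for c, r, gold in examples)
    return hits / len(examples)


full_acc = accuracy(True)
ablated_acc = accuracy(False)
print(full_acc, ablated_acc)
```

A small gap between the two accuracies is what "only a small effect" would look like; a large gap would mean the residual carries most of the signal.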

Concept detection

- Steerling can detect known concepts in text with 96.2% AUC on a held‑out validation dataset.
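For readers unfamiliar with the metric, AUC is the probability that a randomly chosen positive example scores higher than a randomly chosen negative one, with ties counted as half. A minimal sketch of that definition follows; the labels and scores are made‑up toy data, not Steerling outputs.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# Toy data: 1 means the concept is present in the text, 0 means absent.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.35, 0.4, 0.2, 0.1]
auc = roc_auc(labels, scores)
print(auc)
```

The pairwise loop is quadratic and only suitable for small examples; rank‑based implementations compute the same quantity more efficiently.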

All figures are from experiments on the Steerling‑8B model.

What this unlocks

In the coming weeks, we’ll release deep dives on each of these capabilities:

- Concept steering – precise control via intervention.

- Concept discovery – what did Steerling learn that we didn’t teach it? We’ll open up the discovered concept space and show surprising structure.

- Alignment without fine‑tuning – replace thousands of safety‑training examples with a handful of concept‑level interventions.

- Memorization & training‑data valuation – trace any generation back to the training data that produced it and assign value to individual data sources.

- The case for inherent interpretability – what you gain when interpretability is designed in from the start, and what you miss when it’s bolted on later.

Each post will include quantitative evaluations and deployment‑oriented case studies.