I tried using AI to build an exam system. It worked… until it didn’t.

Source: Dev.to

The Journey from Structured‑Data Bugs to an AI‑Powered Exam Platform

I didn’t start with the idea of building an exam platform. It began with a different problem: we were using AI to generate structured data for APIs. At first the responses looked fine, but in production we started seeing subtle format errors—e.g., 120.5 instead of the required 120.50. The downstream system rejected the value because it expected an exact format.

These tiny issues took a lot of time to debug and kept re‑appearing.
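One way to stop this class of bug is to never forward the model's number as text, but to parse it and re-emit it in the required format. The sketch below assumes the downstream system wants exactly two decimal places; `AmountFormat` and `toTwoDecimals` are hypothetical names for illustration, not part of the project.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

// Hypothetical helper: the class and method names are illustrative only.
final class AmountFormat {
    private AmountFormat() {}

    // Parses the raw text returned by the model and re-emits it with exactly two decimals.
    // Throws NumberFormatException if the text is not a plain decimal number.
    static String toTwoDecimals(String raw) {
        BigDecimal value = new BigDecimal(raw.trim());
        // UNNECESSARY makes setScale throw ArithmeticException instead of silently
        // rounding when the value has more than two significant decimals (e.g. "120.505").
        return value.setScale(2, RoundingMode.UNNECESSARY).toPlainString();
    }
}
```

With this in place, `AmountFormat.toTwoDecimals("120.5")` returns `"120.50"`, and a value that cannot be represented exactly in two decimals is rejected rather than quietly altered.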

Why This Matters for Exams

If AI can slip on simple numeric formatting, what happens when we ask it to generate exam questions or evaluate answers? In demos it looks impressive—instant question creation, automatic grading—but in real usage consistency becomes a problem:

- Difficulty levels vary randomly.

- Answers are not always structured the same way.

- Evaluation can feel subjective.

That’s not reliable for students or schools.

The First Attempt: Prompt Engineering

I tried the usual fix: improve the prompts. I made them longer, added more rules, and was very specific. It helped a little, but it didn’t solve the core issue—edge cases still produced slightly off output.

The real problem wasn’t the prompt; it was trusting raw AI output without any control.

The Solution: A Small Java Wrapper

Instead of “fixing AI,” I built a lightweight Java application (distributed as a JAR). Users can:

- Enter their details.

- Choose a subject and topic.

- Generate questions, run a timer, submit answers, and receive a report.

The novelty isn’t the UI; it’s what happens between the UI and the AI. Below is a walkthrough of the UI screens.

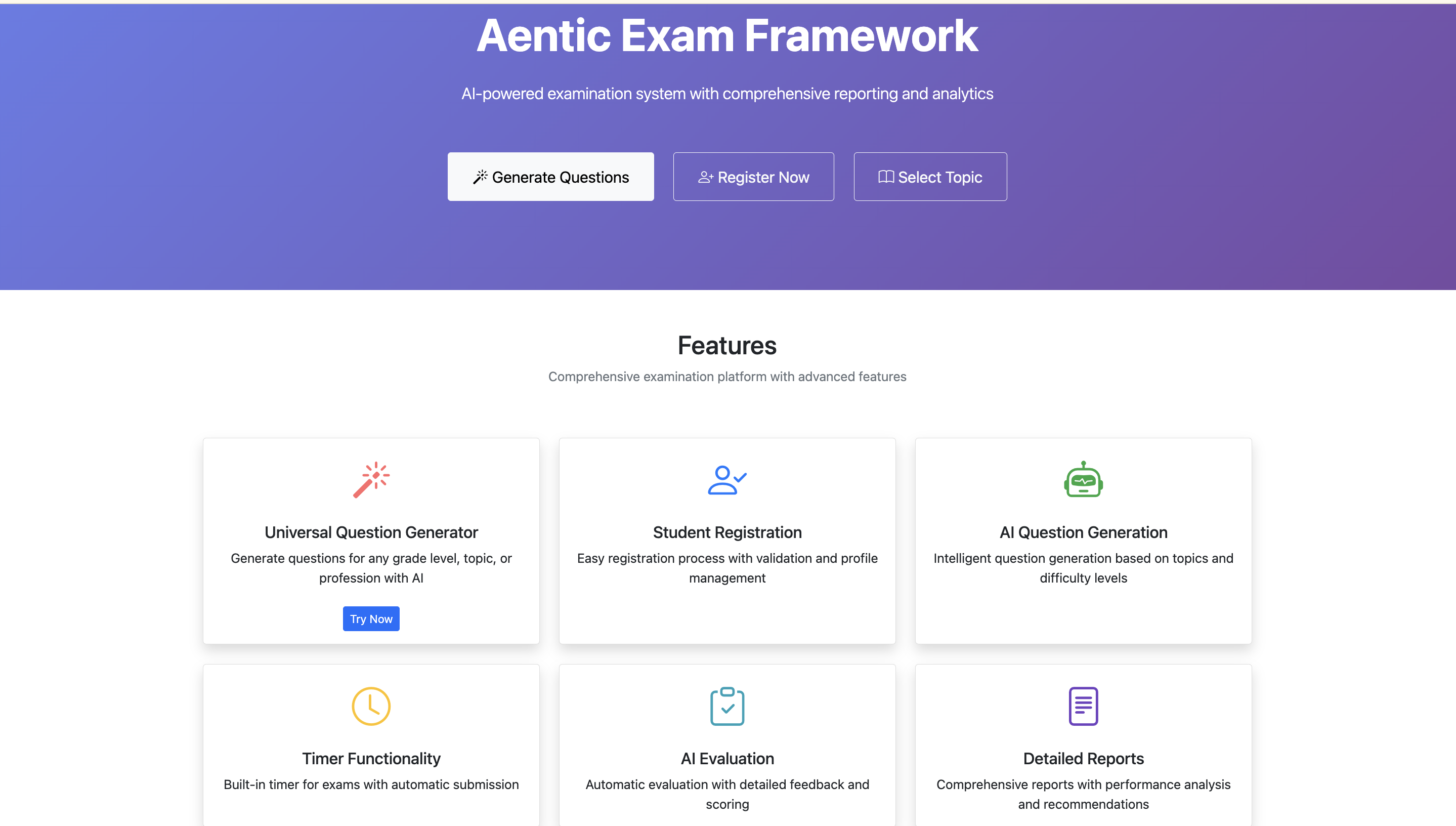

Home Page (Landing + Features)

The main entry point shows the overall idea clearly—a full‑stack AI‑powered exam platform where users can generate questions, register, and select topics.

- Not just a basic form‑based app.

- Positioned as a complete examination framework with AI question generation, evaluation, timer, and reporting already integrated.

- The feature section below highlights the core capabilities in a structured way, emphasizing that the platform handles the full exam lifecycle, not just question generation.

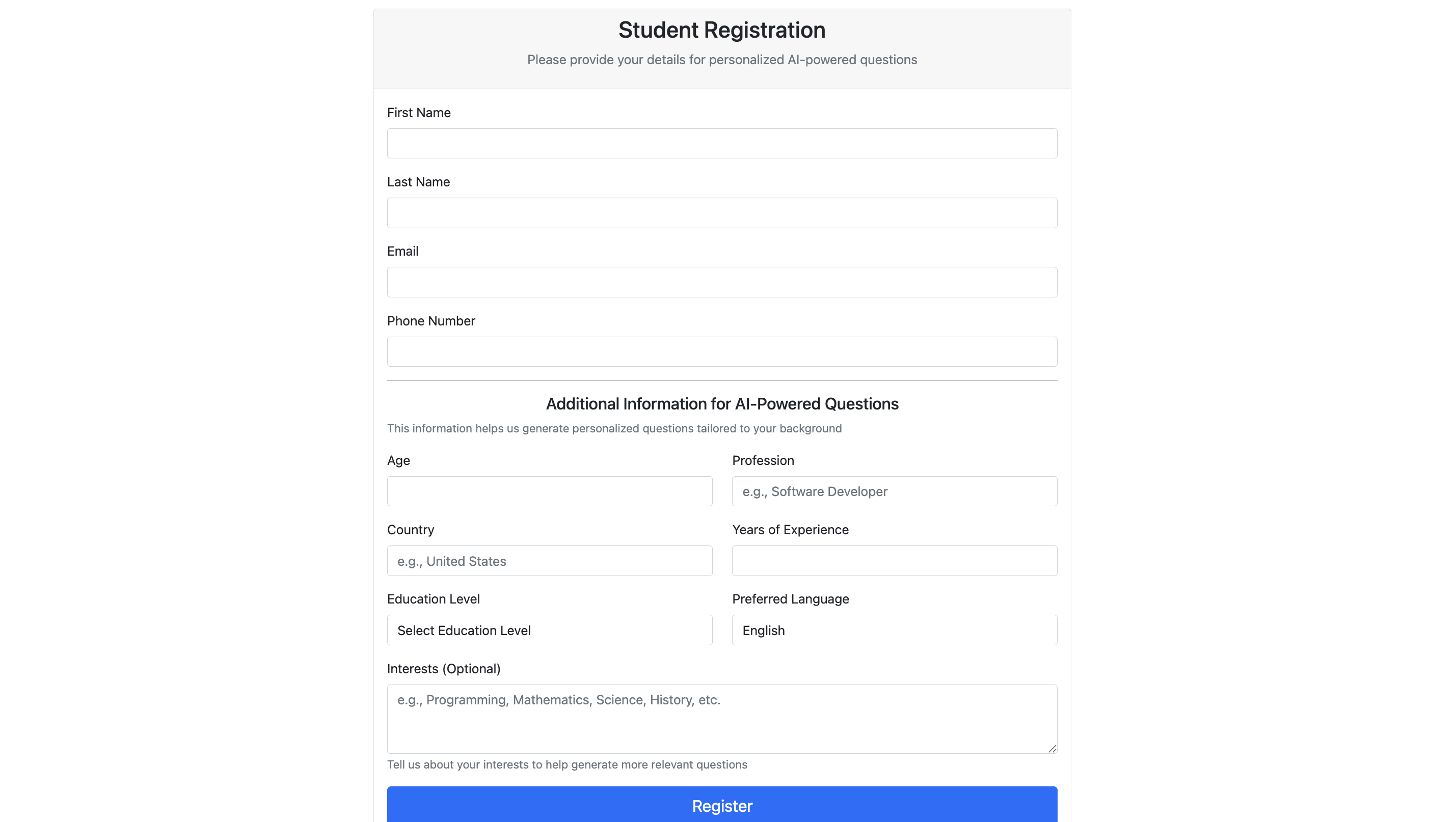

Student Registration

The registration form captures detailed student information: name, email, age, country, experience, interests, and education level.

- This context allows the system to personalize question generation.

- The design moves beyond generic questions toward adaptive exam generation.

- Simple layout, but a strong idea: collect enough context for the AI to produce relevant, meaningful questions.

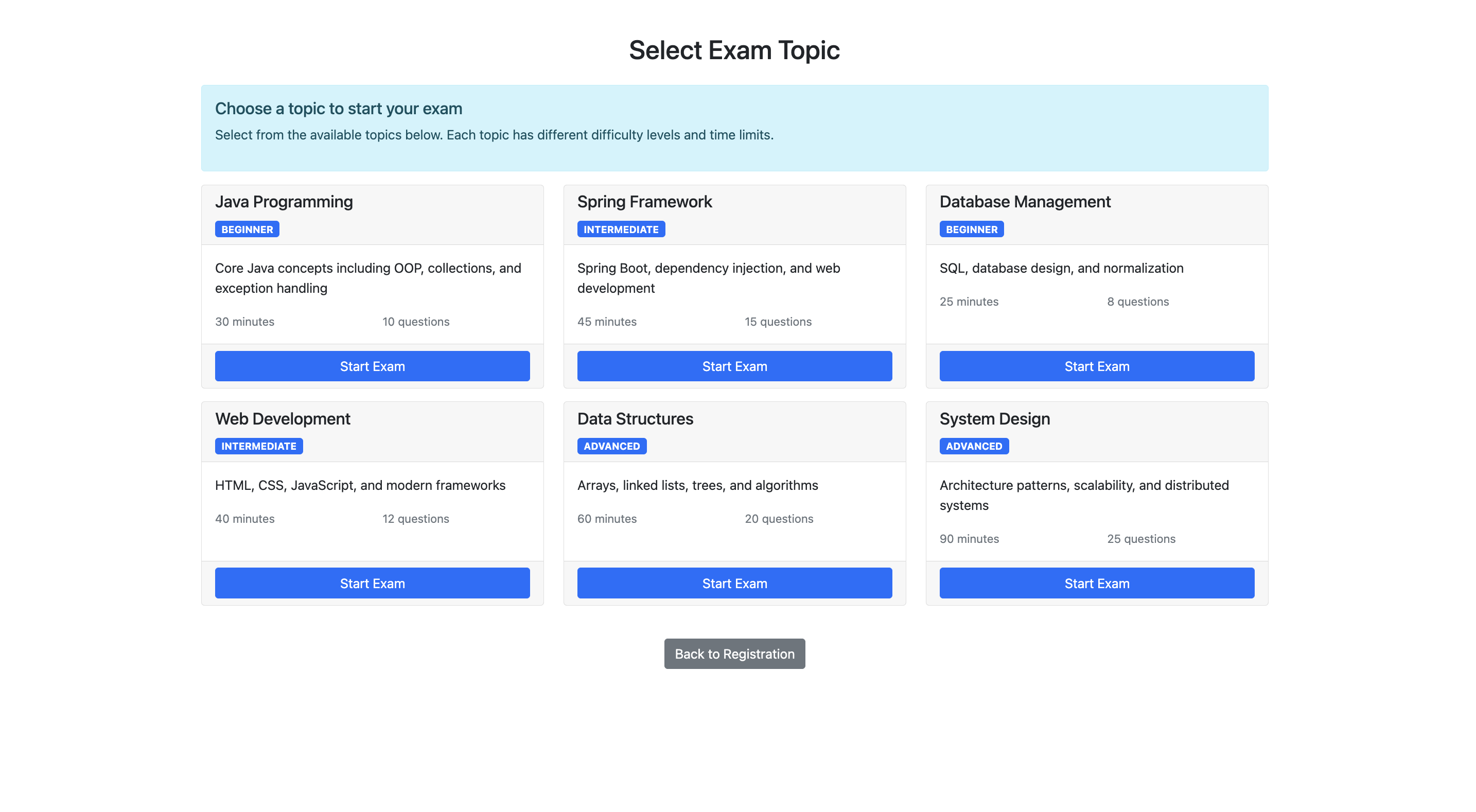

Topic Selection

Pre‑defined exam topics (Java, Spring, System Design, etc.) with clear details:

- Difficulty level

- Number of questions

- Time duration

This screen adds structure:

- Flexibility – multiple topics to choose from.

- Control – fixed duration and difficulty levels.

Reducing randomness makes the exam more predictable and fairer.

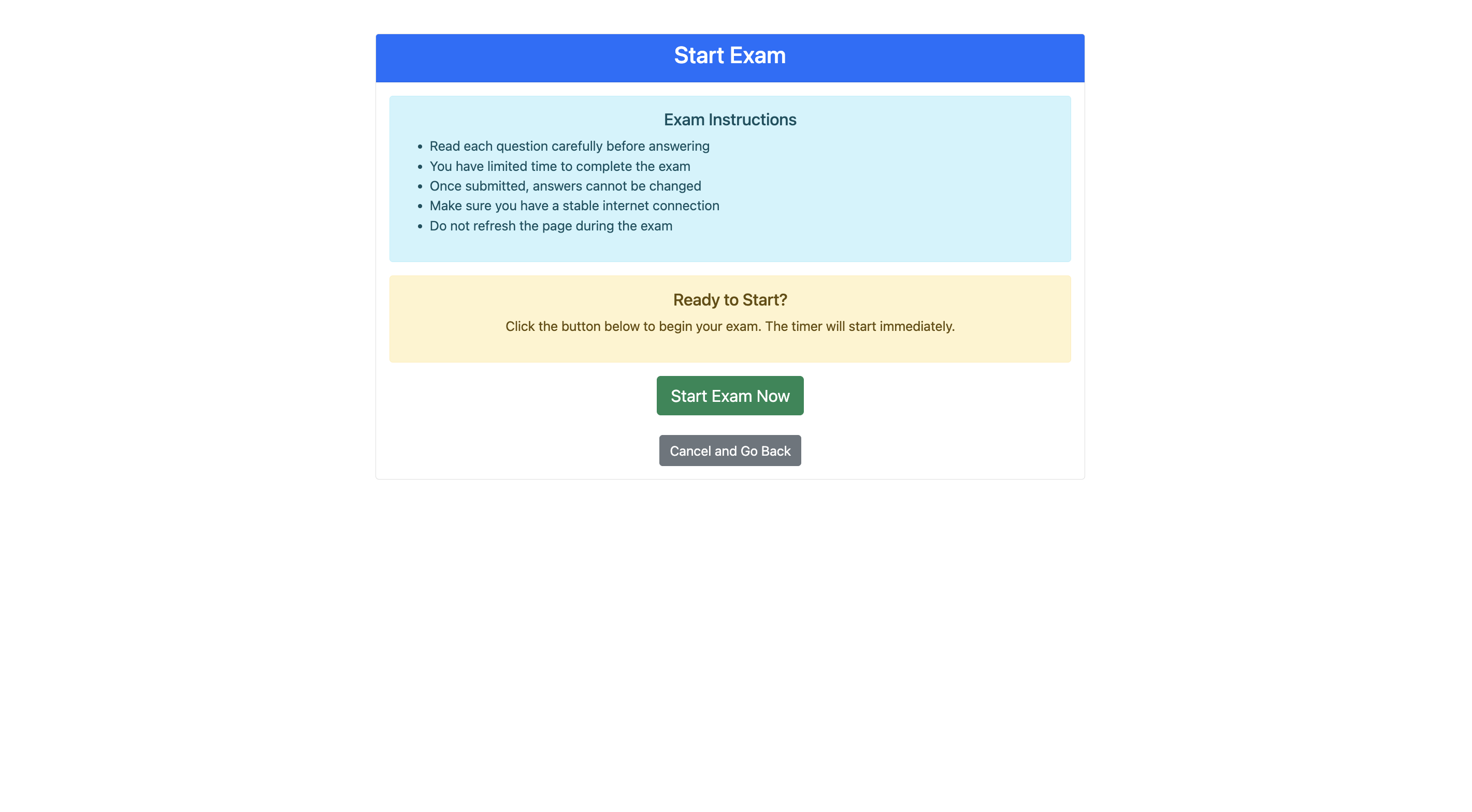

Exam Start Screen

Instructions displayed before the exam begins, including rules such as:

- Time limits.

- No page refresh.

- Answers cannot be changed once submitted.

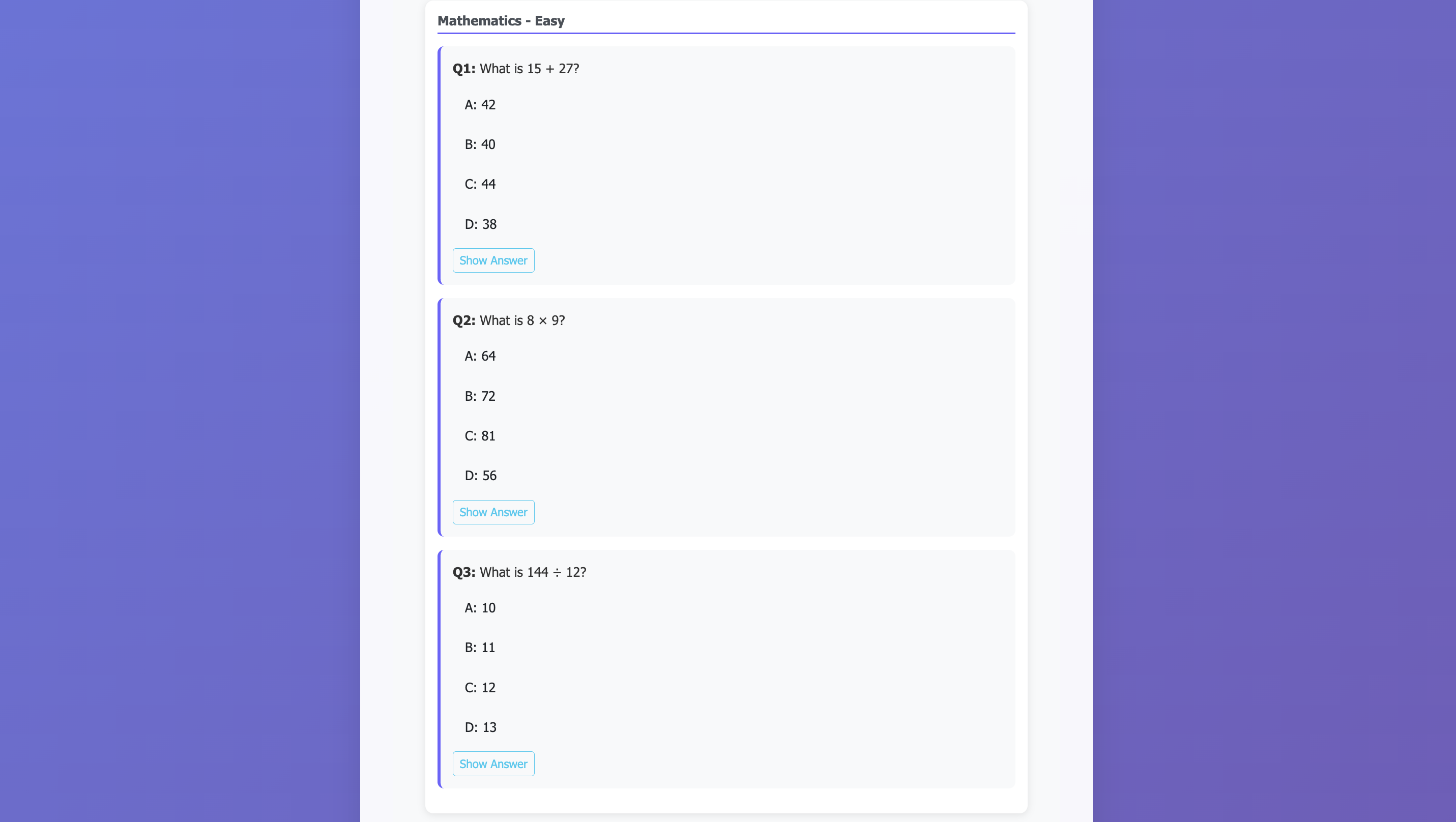

Mathematics – Easy

This screen shows basic math questions (addition, multiplication). Although it looks like a normal quiz, the questions are generated on the fly by the AI based on the selected subject (Mathematics) and difficulty (Easy).

When the user selects the topic, the system sends a prompt to the AI such as:

“Generate easy‑level math questions with two‑digit addition and single‑digit multiplication. Return each question in the format Q: and the answer in the format A:.”

The response is then parsed, displayed, and later evaluated automatically.
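The parsing step could look roughly like the following. This is a minimal sketch that assumes each question arrives on its own line prefixed with `Q:` and each answer on the next non-empty line prefixed with `A:`; the `QaParser` class and `QaPair` record are hypothetical, and the project's actual parser may differ. It uses a record, so it needs Java 16 or later.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical strict parser for "Q:" / "A:" line pairs.
final class QaParser {
    record QaPair(String question, String answer) {}

    private QaParser() {}

    // Returns the parsed pairs, or throws IllegalArgumentException when the
    // response deviates from the expected layout in any way.
    static List<QaPair> parse(String response) {
        List<QaPair> pairs = new ArrayList<>();
        String pendingQuestion = null;
        for (String line : response.split("\\R")) {
            String trimmed = line.trim();
            if (trimmed.isEmpty()) {
                continue; // blank separator lines are tolerated
            }
            if (trimmed.startsWith("Q:")) {
                if (pendingQuestion != null) {
                    throw new IllegalArgumentException("Question without answer: " + pendingQuestion);
                }
                pendingQuestion = trimmed.substring(2).trim();
            } else if (trimmed.startsWith("A:")) {
                if (pendingQuestion == null) {
                    throw new IllegalArgumentException("Answer without question: " + trimmed);
                }
                pairs.add(new QaPair(pendingQuestion, trimmed.substring(2).trim()));
                pendingQuestion = null;
            } else {
                throw new IllegalArgumentException("Unexpected line: " + trimmed);
            }
        }
        if (pendingQuestion != null) {
            throw new IllegalArgumentException("Question without answer: " + pendingQuestion);
        }
        return pairs;
    }
}
```

Failing loudly on any unexpected line is a deliberate choice here: a rejected response can be regenerated, while a half-parsed one would reach the student.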

Key Takeaways

- Control the AI, don’t trust it blindly.

- Add a deterministic layer (prompt templates, strict output parsing, validation) to guarantee consistency.

- Collect contextual data (student profile, topic settings) to make AI output more relevant and reproducible.

- Wrap the AI in a thin application that enforces rules, timing, and reporting—turning a “cool demo” into a production‑ready exam platform.

If you’re interested in the source code or want to try the JAR yourself, feel free to reach out!

Overview

The system validates every AI‑generated exam question before it is presented to the student.

The validation layer checks:

- Structure – each question must have exactly four options.

- Formatting consistency – the same layout is used for all questions.

- Correct answer extraction – the designated answer is identified and stored.

For math questions the system can also verify the answer deterministically (e.g., recompute 15 + 27 and confirm the result).
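The checks above can be sketched as follows. All names (`QuestionValidator`, `Question`, `isAcceptable`) are hypothetical, and two rules go beyond what the text states and are assumptions: the four options must be distinct, and the stored answer must be one of them. The arithmetic check only recognizes a single `+` or `*` between two whole numbers, which matches the easy math example.

```java
import java.util.List;
import java.util.OptionalLong;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical validator: structure check plus deterministic re-computation.
final class QuestionValidator {
    record Question(String text, List<String> options, String answer) {}

    // Matches simple expressions such as "15 + 27" or "6*7" inside the question text.
    private static final Pattern SIMPLE_ARITHMETIC =
            Pattern.compile("\\b(\\d{1,9})\\s*([+*])\\s*(\\d{1,9})\\b");

    private QuestionValidator() {}

    // Exactly four options; distinctness and answer membership are assumed rules.
    static boolean hasValidStructure(Question q) {
        return q.options().size() == 4
                && q.options().stream().distinct().count() == 4
                && q.options().contains(q.answer());
    }

    // Recomputes the expression if one is found; empty when there is nothing to verify.
    static OptionalLong recompute(String text) {
        Matcher m = SIMPLE_ARITHMETIC.matcher(text);
        if (!m.find()) {
            return OptionalLong.empty();
        }
        long left = Long.parseLong(m.group(1));
        long right = Long.parseLong(m.group(3));
        return OptionalLong.of(m.group(2).equals("+") ? left + right : left * right);
    }

    // A question passes when its structure is valid and, where the answer can be
    // recomputed, the stored answer equals the recomputed value.
    static boolean isAcceptable(Question q) {
        if (!hasValidStructure(q)) {
            return false;
        }
        OptionalLong expected = recompute(q.text());
        return !expected.isPresent()
                || q.answer().trim().equals(Long.toString(expected.getAsLong()));
    }
}
```

For the example in the text, a question "What is 15 + 27?" with options 40, 41, 42, 43 passes only if the stored answer is 42.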

Science – Medium

In this screen the questions are conceptual (chemical symbols, physics facts). Because the answers are not numeric, the system relies on AI knowledge but still enforces:

- Structured question format

- A single correct answer

- A valid option set

Validation Process

- Cross‑checks answer format – ensures the answer follows the expected pattern.

- Ensures only one correct answer is marked.

- Optionally re‑prompts the AI if the response is ambiguous.
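A bounded retry loop is one plausible shape for this process. The sketch below is an assumption about how re-prompting could work, not the project's code: `RetryingGenerator`, `Option`, and the `Supplier` standing in for the AI call are all hypothetical.

```java
import java.util.List;
import java.util.Optional;
import java.util.function.Supplier;

// Hypothetical re-prompt loop: keep asking until a response passes validation.
final class RetryingGenerator {
    record Option(String text, boolean correct) {}

    private RetryingGenerator() {}

    // True when exactly one option is flagged as correct.
    static boolean hasSingleCorrectAnswer(List<Option> options) {
        return options.stream().filter(Option::correct).count() == 1;
    }

    // Calls the generator (a stand-in for the AI request) up to maxAttempts times
    // and returns the first option set with exactly one correct answer.
    // Returns Optional.empty() if every attempt is ambiguous.
    static Optional<List<Option>> generate(Supplier<List<Option>> generator, int maxAttempts) {
        for (int attempt = 0; attempt < maxAttempts; attempt++) {
            List<Option> candidate = generator.get();
            if (candidate != null && hasSingleCorrectAnswer(candidate)) {
                return Optional.of(candidate);
            }
        }
        return Optional.empty();
    }
}
```

Capping the number of attempts keeps a persistently ambiguous prompt from looping forever; the caller decides what to do when the result is empty, for example skipping the question.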

Programming – Hard

These screens contain advanced questions (time‑complexity, data structures). The AI generates:

- Domain‑specific questions

- Appropriate difficulty levels

- Relevant answer choices

Reliability Measures

- Enforces known patterns (e.g., Big‑O notation format)

- Validates option consistency

- Ensures only one correct answer is selected

Rule‑based validation is also applied where possible, for example:

- Valid complexity values (O(n), O(log n), etc.)

- Known correct answers for standard problems
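A rule for valid complexity values can be as simple as normalizing the string and checking it against an allow-list. This is a sketch under assumptions: `ComplexityFormat` is a hypothetical name, and the set of allowed values is illustrative, since the text only names O(n) and O(log n).

```java
import java.util.Locale;
import java.util.Set;

// Hypothetical allow-list check for Big-O answer options.
final class ComplexityFormat {
    // Illustrative set; only O(n) and O(log n) are named in the article.
    private static final Set<String> ALLOWED = Set.of(
            "O(1)", "O(log n)", "O(n)", "O(n log n)", "O(n^2)", "O(2^n)", "O(n!)");

    private ComplexityFormat() {}

    // Normalizes case and spacing, e.g. "o( LOG  N )" becomes "O(log n)".
    static String normalize(String raw) {
        String s = raw.trim().toLowerCase(Locale.ROOT).replaceAll("\\s+", " ");
        s = s.replaceAll("\\(\\s+", "(").replaceAll("\\s+\\)", ")");
        return s.replaceAll("^o\\s*\\(", "O(");
    }

    // True when the normalized value is one of the known complexity classes.
    static boolean isValid(String raw) {
        return ALLOWED.contains(normalize(raw));
    }
}
```

An allow-list is stricter than a general pattern: a variant such as "O(nlogn)" is rejected, which can then trigger regeneration instead of showing students inconsistent notation.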

Exam Flow

- “Start Exam Now” separates setup from execution, providing clear flow control.

- Every AI response passes through the validation layer, which:

  - Checks structure

  - Fixes formatting issues

  - Ensures required fields exist

  - Guarantees output consistency

Thus, AI suggests content, but the system decides what is actually used.

Showing Answers

When a student clicks “Show Answer”:

- The answer is extracted from the AI response.

- It is validated for correctness and proper formatting.

- For numerical questions, the system re‑checks the calculation before displaying it.

This gives students confidence that the displayed answer is accurate and reliable.

Impact

Adding the validation layer turned a demo‑like prototype into a stable, usable tool:

- Outputs are now consistent across runs.

- The system behaves predictably.

- The implementation remains intentionally simple: a Java JAR with an in‑memory database that can be run locally.

Call for Collaboration

If you have experience with:

- AI‑generated data

- Exam or quiz systems

- Validation/control layers

I’d love to hear how you tackled similar challenges. Did you refine prompts, or did you introduce a control mechanism?

Project repository:

https://github.com/swapneswarsundarray/ai-assisted-exam

The project is early‑stage and open to contributions. Feel free to try it out, suggest improvements, or add real‑world input.

Takeaway

AI alone doesn’t replace a system; it becomes a component of a larger architecture.

The real value comes from the surrounding structure, validation, and control that make the whole solution reliable.