I Built QualityHub: AI-Powered Quality Intelligence for Your Releases

Source: Dev.to

🎯 The Problem

As a developer at Renault, I faced this question every day:

“Can we ship this release to production?”

We had test results, coverage metrics, SonarQube reports… but no single source of truth to answer this simple question.

So I built QualityHub – an AI‑powered platform that analyzes your quality metrics and gives you instant go/no‑go decisions.

🚀 What is QualityHub?

QualityHub is an open‑source quality intelligence platform that:

- 📊 Aggregates test results from any framework (Jest, JUnit, JaCoCo…)

- 🤖 Analyzes quality metrics with AI

- ✅ Decides if you can ship to production

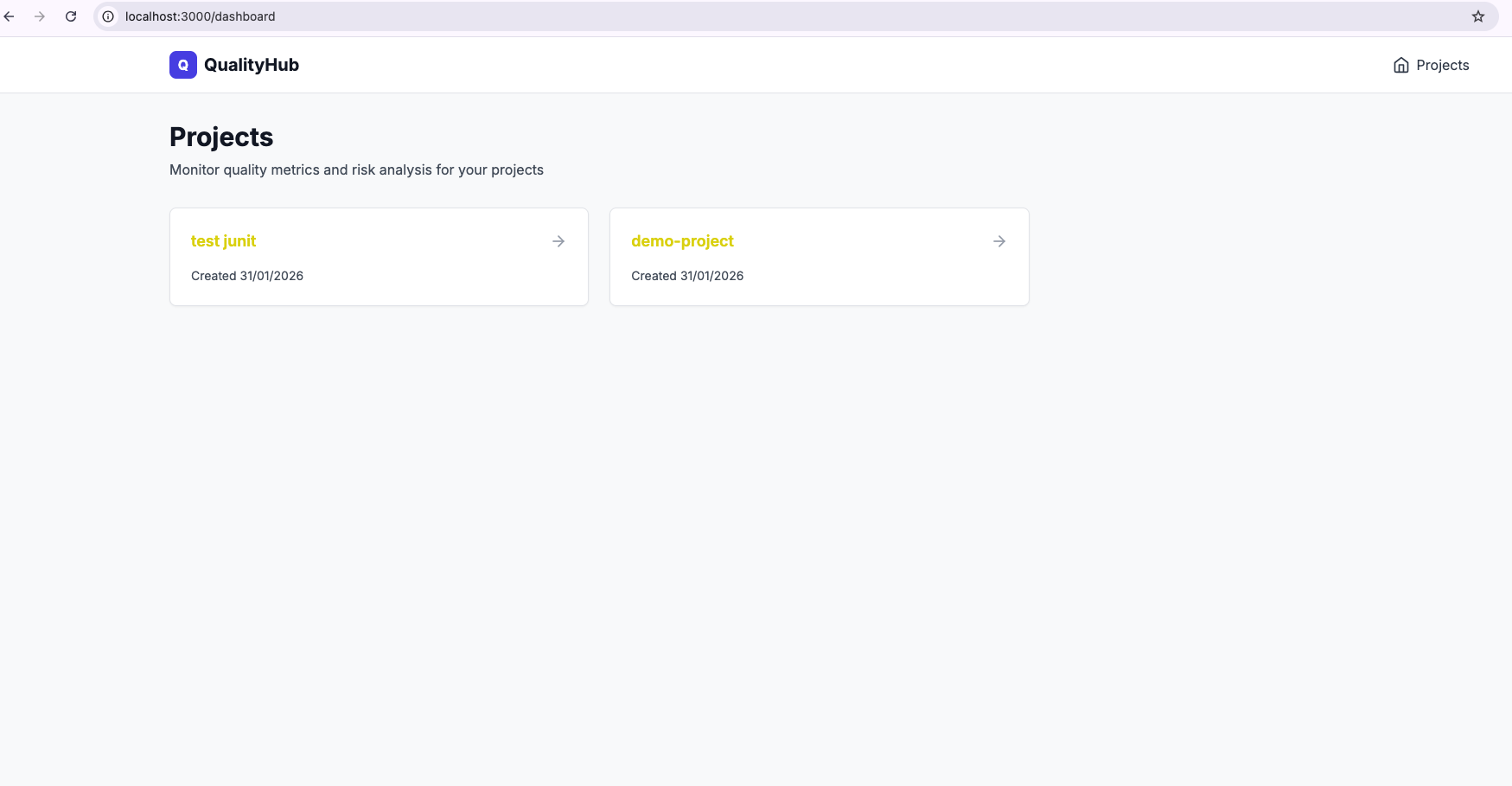

- 📈 Tracks trends over time in a beautiful dashboard

The Stack

| Component | Technology |
|---|---|
| Backend | TypeScript, Express, PostgreSQL, Redis |
| Frontend | Next.js 14, Tailwind CSS |
| CLI | TypeScript with parsers for Jest, JaCoCo, JUnit |
| Deployment | Docker Compose (self‑hostable) |
| License | MIT |

💡 How It Works

1. Universal Format – qa-result.json

Instead of forcing you to use specific tools, QualityHub uses an open standard format:

```json
{
  "version": "1.0.0",
  "project": {
    "name": "my-app",
    "version": "2.3.1",
    "commit": "a3f4d2c",
    "branch": "main",
    "timestamp": "2026-01-31T14:30:00Z"
  },
  "quality": {
    "tests": {
      "total": 1247,
      "passed": 1245,
      "failed": 2,
      "skipped": 0,
      "duration_ms": 45230,
      "flaky_tests": ["UserAuthTest.testTimeout"]
    },
    "coverage": {
      "lines": 87.3,
      "branches": 82.1,
      "functions": 91.2
    }
  }
}
```

This format works with any test framework.
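For readers who think in types, the format can be read as a TypeScript shape. This is a minimal sketch: the field names come from the sample above, while the type names and the `checkQaResult` helper are hypothetical and not part of QualityHub.

```typescript
// Field names mirror the sample qa-result.json; the type names are illustrative.
type QaTests = {
  total: number;
  passed: number;
  failed: number;
  skipped: number;
  duration_ms: number;
  flaky_tests: string[];
};

type QaCoverage = { lines: number; branches: number; functions: number };

type QaResult = {
  version: string;
  project: { name: string; version: string; commit: string; branch: string; timestamp: string };
  quality: { tests: QaTests; coverage: QaCoverage };
};

// Hypothetical sanity checks; returns a list of problems (empty means consistent).
function checkQaResult(result: QaResult): string[] {
  const problems: string[] = [];
  const tests = result.quality.tests;
  const coverage = result.quality.coverage;

  if (tests.passed + tests.failed + tests.skipped !== tests.total) {
    problems.push("passed + failed + skipped does not equal total");
  }

  const percentages: [string, number][] = [
    ["lines", coverage.lines],
    ["branches", coverage.branches],
    ["functions", coverage.functions],
  ];
  for (const [name, pct] of percentages) {
    if (pct < 0 || pct > 100) {
      problems.push("coverage." + name + " is outside 0-100");
    }
  }

  if (isNaN(Date.parse(result.project.timestamp))) {
    problems.push("project.timestamp is not a parseable date");
  }
  return problems;
}

// The sample document from above, typed.
const sample: QaResult = {
  version: "1.0.0",
  project: {
    name: "my-app",
    version: "2.3.1",
    commit: "a3f4d2c",
    branch: "main",
    timestamp: "2026-01-31T14:30:00Z",
  },
  quality: {
    tests: {
      total: 1247,
      passed: 1245,
      failed: 2,
      skipped: 0,
      duration_ms: 45230,
      flaky_tests: ["UserAuthTest.testTimeout"],
    },
    coverage: { lines: 87.3, branches: 82.1, functions: 91.2 },
  },
};

const sampleProblems = checkQaResult(sample);
```

A check like this is cheap to run before pushing a file, and it catches hand-edited results whose counts no longer add up.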

2. CLI Parsers

The CLI automatically converts your test results:

```bash
# Jest (JavaScript/TypeScript)
qualityhub parse jest ./coverage

# JaCoCo (Java)
qualityhub parse jacoco ./target/site/jacoco/jacoco.xml

# JUnit (Java/Kotlin/Python)
qualityhub parse junit ./build/test-results/test
```

3. Risk Analysis Engine

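The backend analyzes your results and calculates a Risk Score (0‑100). Higher is safer: the current rule‑based scoring starts at 100 and subtracts a penalty for each problem it finds.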

Risk factors analyzed

- Test pass rate

- Code coverage (lines, branches, functions)

- Flaky tests

- Coverage trends

- Code‑quality metrics (if available)
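The first factor is plain arithmetic. As a minimal sketch (the helper names `passRate` and `summarize` are hypothetical, and returning 0 for an empty test run is an assumption), the pass rate for the sample `qa-result.json` works out like this:

```typescript
type TestCounts = { total: number; passed: number };

// Percentage of tests that passed; 0 when no tests ran (an assumption, to avoid dividing by zero).
function passRate(tests: TestCounts): number {
  return tests.total === 0 ? 0 : (tests.passed / tests.total) * 100;
}

// Hypothetical helper that formats a one-line summary of the two headline metrics.
function summarize(tests: TestCounts, lineCoverage: number): string {
  return (
    "Test pass rate: " + passRate(tests).toFixed(1) + "%. " +
    "Coverage: " + lineCoverage.toFixed(1) + "%."
  );
}

// 1245 / 1247 = 0.99840, which rounds to 99.8%
const summary = summarize({ total: 1247, passed: 1245 }, 87.3);
```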

Sample output

```json
{
  "risk_score": 85,
  "status": "SAFE",
  "decision": "PROCEED",
  "reasoning": "Test pass rate: 99.8%. Coverage: 87.3%. No critical issues.",
  "recommendations": []
}
```

4. Beautiful Dashboard

Track metrics over time, see trends, and make informed decisions.
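What counts as a "trend" is not spelled out here, so the following is only one possible reading: compare the newest coverage value in a window with the oldest. The `coverageTrend` function, its types, and the 1.0-point tolerance are all illustrative assumptions, not QualityHub's actual logic.

```typescript
type CoveragePoint = { timestamp: string; lines: number };

type Trend = "rising" | "falling" | "flat";

// Compares the newest point with the oldest one in the window.
// The default 1.0-point tolerance is an arbitrary illustrative threshold.
function coverageTrend(points: CoveragePoint[], tolerance: number = 1.0): Trend {
  if (points.length < 2) return "flat";

  // Sort a copy chronologically so callers can pass results in any order.
  const sorted = points
    .slice()
    .sort((a, b) => Date.parse(a.timestamp) - Date.parse(b.timestamp));

  const delta = sorted[sorted.length - 1].lines - sorted[0].lines;
  if (delta > tolerance) return "rising";
  if (delta < -tolerance) return "falling";
  return "flat";
}

const trend = coverageTrend([
  { timestamp: "2026-01-20T09:00:00Z", lines: 85.0 },
  { timestamp: "2026-01-10T09:00:00Z", lines: 88.5 },
  { timestamp: "2026-01-31T14:30:00Z", lines: 87.3 },
]);
```

An endpoint comparison like this is easy to explain on a dashboard, but it ignores what happened in between; a moving average or a fitted slope would be more robust to a single noisy run.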

🔧 Quick Start

Self‑Hosted (5 minutes)

```bash
# Clone repo
git clone https://github.com/ybentlili/qualityhub.git
cd qualityhub

# Start everything with Docker
docker-compose up -d

# ✅ Backend: http://localhost:8080
# ✅ Frontend: http://localhost:3000
```

Use the CLI

```bash
# Install
npm install -g qualityhub-cli

# Initialize
qualityhub init

# Parse your test results
qualityhub parse jest ./coverage

# Push to QualityHub
qualityhub push qa-result.json
```

Done! Your metrics appear in the dashboard instantly.
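If you would rather push from your own script, the architecture diagram in this post shows the CLI sending results to `POST /api/v1/results`. The sketch below only builds such a request. The endpoint path and the default backend port come from this article; sending the raw `qa-result.json` as the body, the `Content-Type` header, and the `buildPushRequest` name are assumptions, and any authentication the real API may require is not covered.

```typescript
type PushRequest = {
  url: string;
  method: "POST";
  headers: { [name: string]: string };
  body: string;
};

// Builds the HTTP request a client could send to a QualityHub backend.
// Assumption: the backend accepts the qa-result document as a JSON body.
function buildPushRequest(baseUrl: string, qaResult: object): PushRequest {
  const trimmed = baseUrl.replace(/\/+$/, ""); // avoid a double slash in the URL
  return {
    url: trimmed + "/api/v1/results",
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(qaResult),
  };
}

const pushRequest = buildPushRequest("http://localhost:8080/", {
  version: "1.0.0",
  project: { name: "my-app", commit: "a3f4d2c" },
});
```

The resulting object can be handed to any HTTP client, for example `fetch(pushRequest.url, pushRequest)` in a runtime that provides `fetch`.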

🎨 Why I Built This

The Pain Points

- Fragmented tools – Jest for tests, JaCoCo for coverage, SonarQube for quality… each with its own UI and format.

- No single answer – “Can we ship?” required checking several tools and making a gut decision.

- No history – Hard to track quality trends over time.

- Manual process – No automation, no CI/CD integration.

The Solution

QualityHub aggregates everything into one dashboard and turns the metrics into a go/no‑go decision for you. The MVP does this with rule‑based scoring; AI‑powered analysis is planned for v1.1.

🏗️ Architecture

```text
┌─────────────┐
│     CLI     │  ← Parse test results
└──────┬──────┘
       │ POST /api/v1/results
       ↓
┌─────────────────────────────┐
│        Backend (API)        │
│  • Express + TypeScript     │
│  • PostgreSQL + Redis       │
│  • Risk Analysis Engine     │
└──────────────┬──────────────┘
               │
               ↓
┌─────────────────────────────┐
│    Frontend (Dashboard)     │
│  • Next.js 14               │
│  • Real‑time metrics        │
└─────────────────────────────┘
```

📊 Technical Deep Dive

1. The Parser Architecture

Each parser extends a base class:

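```typescript
export abstract class BaseParser {
  // Each parser turns a tool-specific report into the qa-result.json shape.
  // QAResult is an assumed name for that result type.
  abstract parse(filePath: string): Promise<QAResult>;

  // Builds the fields shared by every parser's output.
  // projectInfo and detectCIProvider() are class members not shown in this excerpt.
  protected buildBaseResult(adapterName: string) {
    return {
      version: '1.0.0',
      project: {
        name: this.projectInfo.name,
        commit: process.env.GIT_COMMIT || 'unknown',
        // Auto‑detect CI/CD environment
        timestamp: new Date().toISOString(),
      },
      metadata: {
        ci_provider: this.detectCIProvider(),
        adapters: [adapterName],
      },
    };
  }
}
```

This makes adding new parsers trivial. Want pytest support? Extend BaseParser and implement parse().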
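To give a feel for how small a new parser can be, here is a self-contained sketch that reads JUnit-style XML, a format pytest can also emit via `--junitxml`. It is not QualityHub's code: `SketchParser` is a stand-in for `BaseParser`, it takes the report content as a string instead of a file path so it runs without file I/O, and the regex-based attribute reading is a simplification that a real parser would replace with an XML library.

```typescript
type TestSummary = {
  total: number;
  passed: number;
  failed: number;
  skipped: number;
  duration_ms: number;
};

// Stand-in for the article's BaseParser so this sketch compiles on its own.
abstract class SketchParser {
  abstract parseContent(content: string): TestSummary;
}

// Hypothetical parser for JUnit-style XML reports.
class JUnitXmlSketchParser extends SketchParser {
  parseContent(xml: string): TestSummary {
    const summary: TestSummary = { total: 0, passed: 0, failed: 0, skipped: 0, duration_ms: 0 };

    // Match every <testsuite ...> opening tag; <testsuites> is skipped because
    // the tag name must be followed by whitespace.
    const suiteTags: string[] = xml.match(/<testsuite\s[^>]*>/g) || [];

    for (const tag of suiteTags) {
      // Read a numeric attribute from the tag, defaulting to 0 when absent.
      const attr = (name: string): number => {
        const m = tag.match(new RegExp("\\s" + name + '="([^"]*)"'));
        return m ? Number(m[1]) : 0;
      };
      summary.total += attr("tests");
      summary.failed += attr("failures") + attr("errors");
      summary.skipped += attr("skipped");
      summary.duration_ms += Math.round(attr("time") * 1000); // seconds to ms
    }

    summary.passed = summary.total - summary.failed - summary.skipped;
    return summary;
  }
}

const junitReport = [
  '<testsuites>',
  '  <testsuite name="auth" tests="3" failures="1" errors="0" skipped="1" time="0.5"></testsuite>',
  '  <testsuite name="api" tests="2" failures="0" errors="0" skipped="0" time="1.25"></testsuite>',
  '</testsuites>',
].join("\n");

const junitSummary = new JUnitXmlSketchParser().parseContent(junitReport);
```

The output maps directly onto the `quality.tests` block of `qa-result.json`, which is the only contract a new parser has to satisfy.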

2. Risk Scoring Algorithm (MVP)

The current version uses rule‑based scoring:

```typescript
let score = 100;

// Test failures
if (tests.failed > 0) {
  score -= tests.failed * 5;
}

// Coverage thresholds
if (coverage.lines < 80) {
  score -= (80 - coverage.lines) * 0.5;
}
if (coverage.branches < 70) {
  score -= (70 - coverage.branches) * 0.5;
}
if (coverage.functions < 75) {
  score -= (75 - coverage.functions) * 0.5;
}

// Flaky tests
if (flakyTests.length > 0) {
  score -= flakyTests.length * 3;
}

// Ensure 0‑100 range
score = Math.max(0, Math.min(100, score));
```

Future: Replace with an AI‑powered model that learns from historical releases.
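Wrapping the rules above in a function makes them easy to try on real numbers. The penalties and thresholds below are copied from the snippet; the `riskScore` name and the `ScoreInput` type are illustrative, not the backend's actual signature.

```typescript
type ScoreInput = {
  tests: { failed: number };
  coverage: { lines: number; branches: number; functions: number };
  flakyTests: string[];
};

// Rule-based score: start at 100, subtract penalties, clamp to 0-100.
function riskScore(input: ScoreInput): number {
  const { tests, coverage, flakyTests } = input;
  let score = 100;

  score -= tests.failed * 5; // 5 points per failing test

  // Half a point for every percentage point below each coverage threshold
  if (coverage.lines < 80) score -= (80 - coverage.lines) * 0.5;
  if (coverage.branches < 70) score -= (70 - coverage.branches) * 0.5;
  if (coverage.functions < 75) score -= (75 - coverage.functions) * 0.5;

  score -= flakyTests.length * 3; // 3 points per flaky test

  return Math.max(0, Math.min(100, score));
}

// Numbers from the sample qa-result.json: 2 failures, 1 flaky test,
// all coverage values above their thresholds.
// 100 - (2 * 5) - (1 * 3) = 87
const sampleScore = riskScore({
  tests: { failed: 2 },
  coverage: { lines: 87.3, branches: 82.1, functions: 91.2 },
  flakyTests: ["UserAuthTest.testTimeout"],
});
```

Note how heavily failures weigh: twenty failing tests are enough to drive the score to 0 regardless of coverage, while even very low coverage costs at most a few dozen points.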

3. AI‑powered analysis (Claude API) for contextual insights (planned for v1.1)

4. Database Schema

Simple and efficient:

```sql
CREATE TABLE projects (
  id UUID PRIMARY KEY,
  name VARCHAR(255) UNIQUE NOT NULL,
  created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

CREATE TABLE qa_results (
  id UUID PRIMARY KEY,
  project_id UUID REFERENCES projects(id),
  version VARCHAR(50),
  commit VARCHAR(255),
  branch VARCHAR(255),
  timestamp TIMESTAMP,
  metrics JSONB, -- Flexible JSON storage
  created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

CREATE TABLE risk_analyses (
  id UUID PRIMARY KEY,
  qa_result_id UUID REFERENCES qa_results(id),
  risk_score INTEGER,
  status VARCHAR(50),
  reasoning TEXT,
  risks JSONB,
  recommendations JSONB,
  decision VARCHAR(50)
);
```

JSONB allows flexible metric storage without schema migrations.
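To see what that flexibility means in practice, here is a sketch of how a result could be mapped onto the `qa_results` table: the fixed columns get individual parameters, and the whole `quality` block is serialized into the single `metrics` column. The `buildInsertQaResult` function and its types are hypothetical. The `{ text, values }` shape matches the query-config form that node-postgres accepts, though this article does not say which driver the backend uses.

```typescript
type QaResultRow = {
  id: string;        // UUID generated by the caller
  projectId: string; // UUID of the owning project
  version: string;
  commit: string;
  branch: string;
  timestamp: string; // ISO 8601
  metrics: object;   // stored as-is in the JSONB column
};

type ParameterizedQuery = { text: string; values: string[] };

// Builds a parameterized INSERT; nothing is interpolated into the SQL text.
function buildInsertQaResult(row: QaResultRow): ParameterizedQuery {
  return {
    text:
      "INSERT INTO qa_results (id, project_id, version, commit, branch, timestamp, metrics) " +
      "VALUES ($1, $2, $3, $4, $5, $6, $7::jsonb)",
    values: [
      row.id,
      row.projectId,
      row.version,
      row.commit,
      row.branch,
      row.timestamp,
      JSON.stringify(row.metrics), // new metric keys need no schema change
    ],
  };
}

const insertQuery = buildInsertQaResult({
  id: "11111111-1111-4111-8111-111111111111",
  projectId: "22222222-2222-4222-8222-222222222222",
  version: "2.3.1",
  commit: "a3f4d2c",
  branch: "main",
  timestamp: "2026-01-31T14:30:00Z",
  metrics: {
    tests: { total: 1247, passed: 1245, failed: 2 },
    coverage: { lines: 87.3, branches: 82.1, functions: 91.2 },
  },
});
```

Adding, say, a mutation-testing score later would only change the object passed as `metrics`; the table definition and this function stay the same.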

🚀 What’s Next?

v1.1 (Planned)

- 🤖 AI‑Powered Analysis with Claude API

- 📊 Trend Detection (coverage dropping over time)

- 🔔 Slack/Email Notifications

- 🔌 GitHub App (comments on PRs)

v1.2 (Future)

- 📈 Advanced Analytics (benchmarking, predictions)

- 🔐 SSO & RBAC for enterprise

- 🌍 Multi‑language support

- 🎨 Custom dashboards

💡 Lessons Learned

Open Standards Win

Making qa-result.json an open standard was key. Now anyone can build parsers or integrations.

Developer Experience Matters

The CLI must be dead simple:

```bash
qualityhub parse jest ./coverage  # Just works
```

No config files, no setup. It just works.

Self‑Hosting is a Feature

Many companies can’t send their metrics to external SaaS. Docker Compose makes self‑hosting trivial.

🤝 Contributing

QualityHub is 100% open‑source (MIT License).

Want to contribute?

- 🧪 Add parsers (pytest, XCTest, Rust…)

- 🎨 Improve the dashboard

- 🐛 Fix bugs

- 📚 Write docs

Check out the Contributing Guide.

🔗 Links

- GitHub: https://github.com/ybentlili/qualityhub

- CLI:

- npm:

🎯 Try It Now

```bash
# Self‑host in 5 minutes
git clone https://github.com/ybentlili/qualityhub.git
cd qualityhub
docker-compose up -d

# Or just the CLI
npm install -g qualityhub-cli
qualityhub parse jest ./coverage
```

💬 What do you think?

Would you use this? What features would you like to see?

Drop a ⭐ on GitHub if you find this useful!

Built with ❤️ in TypeScript