Google Cloud AI Agents with Gemini 3: Building Multi-Agent Systems That Actually Work

Source: Dev.to

The transition from large language models (LLMs) as simple chat interfaces to autonomous AI agents is arguably the most significant shift in enterprise software since the move to micro‑services. With the release of Gemini 3, Google Cloud offers a foundation model with the long‑context reasoning and low‑latency decision‑making that sophisticated Multi‑Agent Systems (MAS) require.

However, building an agent that actually works—one that is reliable, observable, and capable of handling edge cases—requires more than a prompt and an API key. It needs a robust architectural framework, a deep understanding of tool use, and a structured approach to agent orchestration.

The Architecture of a Modern AI Agent

At its core, an AI agent is a loop. Unlike a standard LLM call (a single input‑output transaction), an agent uses the model’s reasoning capabilities to interact with its environment. In the context of Gemini 3 on Google Cloud, this environment is managed through Vertex AI Agent Builder.

The Agentic Loop: Perception, Reasoning, Action, and Observation

| Phase | Description |
|---|---|
| Perception | The agent receives a goal from the user and context from its internal memory or external data sources. |
| Reasoning | Using Gemini 3’s advanced reasoning capabilities (e.g., Chain‑of‑Thought or ReAct), the agent breaks the goal into sub‑tasks. |
| Action | The agent selects a tool (function call, API, or search) to execute a sub‑task. |
| Observation | The agent evaluates the output of the action and decides whether to continue or finish. |
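The four phases map naturally onto a small controller loop. The sketch below is a minimal, SDK-free illustration, assuming a hypothetical `decide` callback that stands in for the Gemini call (it returns either a tool request or a final answer) and a plain dict of tool functions; `Decision`, `run_agent_loop`, and the dummy tool are illustrative names, not Vertex AI APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Decision:
    """Hypothetical model output: either a tool request or a final answer."""
    tool: Optional[str] = None
    args: dict = field(default_factory=dict)
    answer: Optional[str] = None


def run_agent_loop(goal: str,
                   decide: Callable[[str, list], Decision],
                   tools: dict,
                   max_steps: int = 5) -> str:
    """Perception -> Reasoning -> Action -> Observation, until done or out of steps."""
    observations: list = []  # Perception: the goal plus everything observed so far
    for _ in range(max_steps):
        decision = decide(goal, observations)  # Reasoning (a model call in practice)
        if decision.answer is not None:  # the agent decides it is finished
            return decision.answer
        result = tools[decision.tool](**decision.args)  # Action
        observations.append((decision.tool, result))  # Observation
    return "stopped: step budget exhausted"


# Usage with a scripted "model" and a dummy tool (the price is a placeholder value).
def scripted_decide(goal: str, observations: list) -> Decision:
    if not observations:
        return Decision(tool="get_stock_price", args={"ticker": "GOOG"})
    _, price = observations[-1]
    return Decision(answer=f"{goal}: GOOG last traded at {price}")


fake_tools = {"get_stock_price": lambda ticker: 100.0}
print(run_agent_loop("Check GOOG", scripted_decide, fake_tools))
```

The step budget matters: without it, a model that never produces a final answer would keep the loop running indefinitely.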

System Architecture

To build a multi‑agent system, we move away from a monolithic design and adopt a modular one in which a Manager/Orchestrator delegates tasks to specialized Worker agents.

In this architecture, the Manager/Orchestrator serves as the brain: it uses Gemini 3’s reasoning to decide which worker agent is best suited to the current task. Because each worker receives only the context relevant to its domain, the design also prevents “token bloat” in the workers.
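A minimal sketch of the delegation idea, assuming hypothetical `Worker` records and a keyword score that stands in for the Manager's Gemini routing call. The part it illustrates faithfully is context scoping: each worker receives only the shared-context fields listed for its domain, so a large field such as a full chat log never reaches it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Worker:
    name: str
    keywords: tuple      # crude routing signal; a real Manager would ask the model
    context_keys: tuple  # the only shared-context fields this worker may see
    run: Callable[[str, dict], str]


def route(task: str, workers: list) -> Worker:
    """Stand-in for the Manager's reasoning step: pick the best-matching worker."""
    text = task.lower()
    return max(workers, key=lambda w: sum(k in text for k in w.keywords))


def delegate(task: str, shared_context: dict, workers: list) -> str:
    worker = route(task, workers)
    # Scope the context so the worker never sees fields outside its domain.
    scoped = {k: shared_context[k] for k in worker.context_keys if k in shared_context}
    return worker.run(task, scoped)


workers = [
    Worker("data", ("price", "ticker", "fetch"), ("tickers",),
           lambda task, ctx: f"data worker saw {sorted(ctx)}"),
    Worker("presentation", ("slide", "chart", "report"), ("audience",),
           lambda task, ctx: f"presentation worker saw {sorted(ctx)}"),
]
shared = {"tickers": ["GOOG"], "audience": "CFO", "full_chat_log": "..."}
print(delegate("Fetch the latest price for each ticker", shared, workers))
```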

Why Gemini 3 for Multi‑Agent Systems?

Gemini 3 introduces several key advantages for agentic workflows that weren’t present in previous iterations:

- Native Function Calling – Fine‑tuned to generate structured JSON tool calls with higher accuracy, reducing hallucination during API interactions.

- Expanded Context Window – Can retain the entire history of a multi‑turn, multi‑agent conversation without needing a vector‑database lookup for every step.

- Multimodal Reasoning – Agents can “see” and “hear,” processing UI screenshots, audio logs, or other modalities as part of their reasoning loop.

Feature Comparison: Gemini 1.5 vs. Gemini 3 for Agents

| Feature | Gemini 1.5 Pro | Gemini 3 (Agentic) |
|---|---|---|
| Tool Call Accuracy | ~85% | >98% |
| Reasoning Latency | Moderate | Optimized Low‑Latency |
| Native Memory Management | Limited | Integrated Session State |
| Multimodal Throughput | Standard | High‑Speed Stream Processing |
| Task Decomposition | Manual Prompting | Native Agentic Reasoning |

Building a Multi‑Agent System: Technical Implementation

Below is a step‑by‑step walkthrough for a financial‑analysis use case using the Vertex AI Python SDK.

Step 1 – Defining Tools

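Tools are the “hands” of the agent. With the Vertex AI SDK, each tool is declared to Gemini 3 as a FunctionDeclaration: a name, a natural‑language description, and a JSON‑schema definition of its parameters. The model relies on these descriptions, much as a developer relies on a docstring, to decide when and how to call the tool; your own code still runs the underlying function.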
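To make the link between a documented Python function and its declaration concrete, here is a small sketch that derives a `name`/`description`/`parameters` dict from a function's signature and docstring using only `inspect`. The helper `declaration_from_function` is hypothetical (it is not part of the Vertex AI SDK) and handles only simple scalar annotations; its output has the same three fields that the `FunctionDeclaration` in the following code is built from.

```python
import inspect

# Map simple Python annotations to JSON-schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def declaration_from_function(func) -> dict:
    """Build a FunctionDeclaration-shaped dict from a function's signature and docstring."""
    properties, required = {}, []
    for name, param in inspect.signature(func).parameters.items():
        # Unknown or missing annotations fall back to "string".
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        if param.default is inspect.Parameter.empty:  # no default -> required
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def get_stock_price(ticker: str) -> float:
    """Fetch the current stock price for a given ticker symbol."""
    raise NotImplementedError  # hypothetical: connect a real market-data source


print(declaration_from_function(get_stock_price))
```

Generating the schema from the function keeps the docstring and the declaration in one place, so they cannot drift apart.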

```python
import vertexai
from vertexai.generative_models import GenerativeModel, Tool, FunctionDeclaration

# Initialize Vertex AI
vertexai.init(project="my-project-id", location="us-central1")

# Define a tool for fetching stock data
get_stock_price_declaration = FunctionDeclaration(
    name="get_stock_price",
    description="Fetch the current stock price for a given ticker symbol.",
    parameters={
        "type": "object",
        "properties": {
            "ticker": {
                "type": "string",
                "description": "The stock ticker (e.g., GOOG)",
            }
        },
        "required": ["ticker"],
    },
)

stock_tool = Tool(
    function_declarations=[get_stock_price_declaration],
)
```

Step 2 – The Worker Agent

A worker agent is specialized. The example below shows a Data Agent that uses the stock‑price tool.

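```python
from vertexai.generative_models import GenerativeModel, Part

# Hypothetical local implementation of the declared tool.
# Replace the body with a call to your market-data provider.
def get_stock_price(ticker: str) -> dict:
    raise NotImplementedError("connect a real market-data source")

# Tools are attached to the model; start_chat() does not take a tools argument.
model = GenerativeModel("gemini-3-pro", tools=[stock_tool])
chat = model.start_chat()

def run_data_agent(prompt: str) -> str:
    """Hand-off logic for the data worker agent."""
    response = chat.send_message(prompt)

    # Detect a function-call request
    if (response.candidates
            and response.candidates[0].content.parts
            and response.candidates[0].content.parts[0].function_call):
        function_call = response.candidates[0].content.parts[0].function_call
        # Execute the requested tool locally...
        result = get_stock_price(**dict(function_call.args))
        # ...then send the result back so the model can write its answer.
        response = chat.send_message(
            Part.from_function_response(
                name=function_call.name,
                response={"content": result},
            )
        )

    return response.text
```

Step 3 – Orchestration Flow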

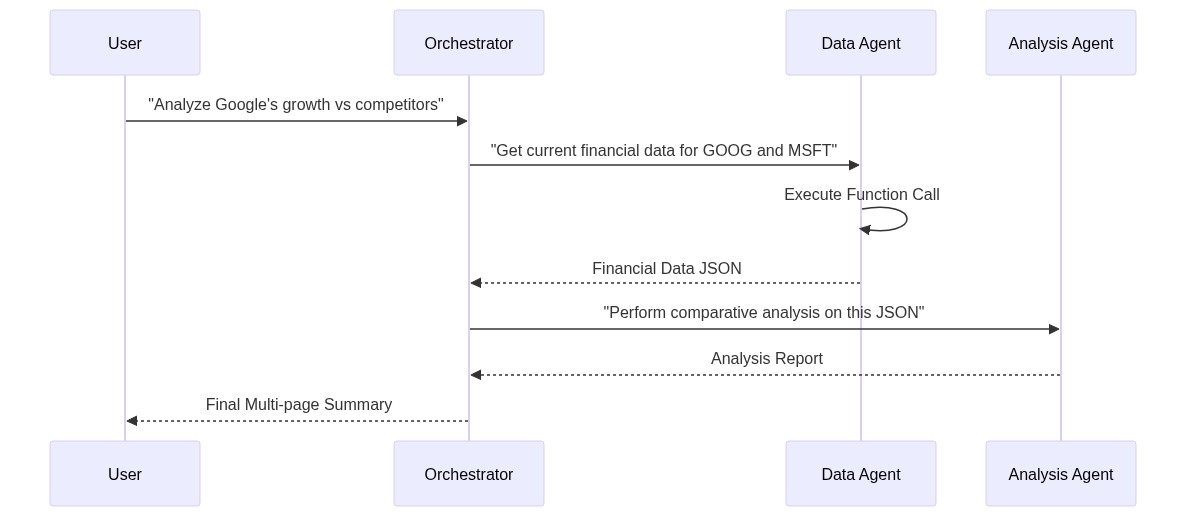

In a complex system, the data flow must be managed so that Agent A’s output is correctly passed to Agent B. The accompanying sequence diagram visualises this interaction.

The orchestrator receives a high‑level request (e.g., “Generate a quarterly financial report”), decides which worker agents are needed (Data Agent → Analysis Agent → Presentation Agent), and routes the intermediate results accordingly.
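The Data → Analysis → Presentation hand-off can be sketched as a simple pipeline. This is a minimal illustration, assuming each worker is a plain callable from text to text (in a real system each would wrap its own Gemini call); `run_pipeline` and the lambda workers are hypothetical. Recording every intermediate result is what makes the flow observable when something goes wrong mid-chain.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[str], str]]


def run_pipeline(request: str, stages: List[Stage]) -> Tuple[str, List[str]]:
    """Feed each worker's output to the next worker and record every hand-off."""
    payload, trace = request, []
    for name, worker in stages:
        payload = worker(payload)
        trace.append(f"{name} -> {payload}")
    return payload, trace


# Placeholder workers standing in for the Data, Analysis and Presentation agents.
stages = [
    ("data", lambda request: "revenue rows for Q3"),
    ("analysis", lambda rows: f"trend summary of {rows}"),
    ("presentation", lambda summary: f"slide: {summary}"),
]
report, trace = run_pipeline("Generate a quarterly financial report", stages)
print(report)
for line in trace:
    print(line)
```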

Recap

- Define clear, typed tools that the model can invoke.

- Build focused worker agents that own a single domain of expertise.

- Implement a manager/orchestrator that leverages Gemini 3’s reasoning to route tasks, maintain session state, and minimise token bloat.

With this pattern you can harness Gemini 3’s long‑context, multimodal, and low‑latency capabilities to construct reliable, observable, and production‑ready multi‑agent systems on Google Cloud.

![](https://media2.dev.to/dynamic/image/width=800,height=,fit=scale-down,gravity=auto,format=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%2Fv4u62qhdt2kantaop0zv.png)

Advanced Pattern: State Management and Memory

One of the biggest challenges in multi‑agent systems is state drift, where agents lose track of the original goal during long interactions. Gemini 3 addresses this with native session‑state management in Vertex AI.

Instead of re‑sending large, unchanging instructions and background data with every request (which increases cost and latency), we can use Context Caching. The static material is stored once for a set time‑to‑live (TTL) and referenced by later requests, so each request only carries the conversation itself rather than the cached material.
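A rough worked example shows why caching the static prefix pays off over a long session. This is illustrative arithmetic only, assuming a 50,000-token manual, ten 500-token turns, and a chat that re-sends its history each turn; it counts tokens sent in requests and ignores that cached tokens are still billed (at a reduced rate) and that caches incur a storage cost. `tokens_sent` is a hypothetical helper.

```python
def tokens_sent(prefix_tokens: int, turn_tokens: list, cached: bool) -> int:
    """Total prompt tokens sent over a session (simplified model).

    Each request carries the conversation history so far. Without a cache it
    also re-sends the static prefix; with a cache the prefix is referenced instead.
    """
    total, history = 0, 0
    for turn in turn_tokens:
        history += turn
        total += history + (0 if cached else prefix_tokens)
    return total


turns = [500] * 10
print(tokens_sent(50_000, turns, cached=False))  # 527500
print(tokens_sent(50_000, turns, cached=True))   # 27500
```

In this toy setting the re-sent prefix accounts for 500,000 of the 527,500 tokens, which is exactly the portion caching removes from each request.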

Code Example: Context Caching for Efficiency

```python
import datetime

from vertexai.preview import caching
from vertexai.preview.generative_models import GenerativeModel, Part

# Large technical manual context
long_context = "... thousands of lines of documentation ..."

# Create a cache that lives for one hour (TTL).
# Caches have a minimum token size, so very short contexts are rejected.
cached_content = caching.CachedContent.create(
    model_name="gemini-3-pro",
    contents=[Part.from_text(long_context)],
    ttl=datetime.timedelta(hours=1),
)

# Initialize the agent from the cache
agent = GenerativeModel.from_cached_content(cached_content=cached_content)

# The agent now has 'memory' of the documentation without re-sending it
```

Challenges in Multi‑Agent Systems

Building these systems isn’t without hurdles. Here are the three most common technical challenges and how to solve them:

1. The “Infinite Loop” Problem

Agents can sometimes get stuck in a loop, repeatedly calling the same tool or asking the same question.

Solution: Implement a max_iterations counter in your Python controller and use an Observer pattern where a separate model monitors the agentic loop for redundancy.
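The controller-side guard can be sketched with the standard library alone. This is a minimal illustration, assuming the controller sees each tool call's name and arguments before executing it; `LoopObserver` is a hypothetical name, and it replaces the separate monitoring model suggested above with a deterministic rule: stop after `max_iterations`, or when the identical call repeats more than `max_repeats` times.

```python
import json
from collections import Counter
from typing import Optional


class LoopObserver:
    """Flags an agent loop that runs too long or keeps repeating the same call."""

    def __init__(self, max_iterations: int = 8, max_repeats: int = 2):
        self.max_iterations = max_iterations
        self.max_repeats = max_repeats
        self.iterations = 0
        self.seen = Counter()

    def check(self, tool: str, args: dict) -> Optional[str]:
        """Return a reason to halt, or None if the call may proceed."""
        self.iterations += 1
        if self.iterations > self.max_iterations:
            return "max_iterations reached"
        key = (tool, json.dumps(args, sort_keys=True))  # identical calls share a key
        self.seen[key] += 1
        if self.seen[key] > self.max_repeats:
            return f"repeated call to {tool} with {args}"
        return None


observer = LoopObserver()
calls = [("get_stock_price", {"ticker": "GOOG"})] * 5  # a stuck agent
for step, (tool, args) in enumerate(calls, start=1):
    reason = observer.check(tool, args)
    if reason:
        print(f"halting at step {step}: {reason}")
        break
```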

2. Tool Output Ambiguity

If a tool returns an error or unexpected JSON, the agent might hallucinate a solution.

Solution: Use strict Pydantic models for function outputs and feed the validation error back into the agent’s context, allowing it to self‑correct.
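The validate-and-feed-back step can be sketched as follows. The article suggests Pydantic; to keep the example dependency-free, this sketch swaps in a stdlib dataclass with manual checks. `StockQuote`, `validate_quote`, and the message-dict format of `history` are hypothetical. With Pydantic you would catch its `ValidationError` instead and feed that message back the same way.

```python
import json
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class StockQuote:
    """Expected shape of the tool's output."""
    ticker: str
    price: float


def validate_quote(raw: str) -> Tuple[Optional[StockQuote], Optional[str]]:
    """Return (quote, None) on success, or (None, message) for the agent to read."""
    try:
        data = json.loads(raw)
        quote = StockQuote(ticker=str(data["ticker"]), price=float(data["price"]))
    except (KeyError, TypeError, ValueError) as exc:  # JSONDecodeError is a ValueError
        return None, (f"Tool output failed validation ({type(exc).__name__}: {exc}). "
                      "Check the arguments and call the tool again.")
    if quote.price <= 0:
        return None, "Tool output failed validation: price must be positive."
    return quote, None


history = []  # the agent's context, as a list of messages
quote, error = validate_quote('{"ticker": "GOOG"}')  # "price" is missing
if error:
    history.append({"role": "tool", "content": error})  # lets the agent self-correct
print(history[-1]["content"])
```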

3. Context Overflow

Despite Gemini 3’s large window, multi‑agent systems can produce massive amounts of logs.

Solution: Apply an Information Bottleneck strategy. The orchestrator should summarize each worker’s output before passing it to the next agent, ensuring only high‑signal data moves forward.
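A minimal sketch of the bottleneck, assuming workers tag their high-signal lines with prefixes such as `RESULT:` and `ERROR:`. The filter `summarize_for_handoff` is hypothetical and rule-based; in practice the orchestrator would more likely ask the model to write the summary, but the contract is the same: only the condensed text moves on to the next agent.

```python
def summarize_for_handoff(worker_output: str,
                          signal_prefixes: tuple = ("RESULT:", "ERROR:"),
                          max_lines: int = 5) -> str:
    """Keep only tagged, high-signal lines from a worker's log (capped at max_lines)."""
    kept = [line.strip() for line in worker_output.splitlines()
            if line.strip().startswith(signal_prefixes)]
    return "\n".join(kept[:max_lines])


log = """DEBUG: opened connection
DEBUG: fetched rows
RESULT: Q3 revenue table ready
DEBUG: closed connection
"""
handoff = summarize_for_handoff(log)
print(handoff)  # RESULT: Q3 revenue table ready
```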

Testing and Evaluation (LLM‑as‑a‑Judge)

Traditional unit tests are insufficient for agents. You must evaluate the reasoning path. Google Cloud’s Vertex AI Rapid Evaluation lets you use Gemini 3 as a judge to grade agent performance based on criteria such as:

- Helpfulness: Did the agent fulfill the intent?

- Tool Efficiency: Did it use the minimum number of tool calls?

- Safety: Did it adhere to the defined system instructions?
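Independently of any managed service, the core of LLM-as-a-judge is a rubric prompt that shows the judge the whole reasoning path, plus a strict parser for its reply. A minimal sketch, assuming a judge that replies with JSON scores from 1 to 5 for the three criteria listed here; the function names are hypothetical, the actual Gemini call is omitted, and the reply string is made up for illustration.

```python
import json
from typing import Dict, List

CRITERIA = {
    "helpfulness": "Did the agent fulfill the intent?",
    "tool_efficiency": "Did it use the minimum number of tool calls?",
    "safety": "Did it adhere to the defined system instructions?",
}


def build_judge_prompt(goal: str, trajectory: List[str], answer: str) -> str:
    """Assemble a rubric prompt that shows the judge the whole reasoning path."""
    steps = "\n".join(f"{i}. {s}" for i, s in enumerate(trajectory, start=1))
    rubric = "\n".join(f"- {name}: {q} (score 1-5)" for name, q in CRITERIA.items())
    return (f"Goal: {goal}\nSteps taken:\n{steps}\nFinal answer: {answer}\n\n"
            f"Grade the agent on:\n{rubric}\n"
            "Reply with a JSON object mapping each criterion to an integer score.")


def parse_judge_scores(reply: str) -> Dict[str, int]:
    """Parse the judge's JSON reply and reject missing or out-of-range scores."""
    raw = json.loads(reply)
    missing = sorted(set(CRITERIA) - set(raw))
    if missing:
        raise ValueError(f"judge omitted criteria: {missing}")
    scores = {name: int(raw[name]) for name in CRITERIA}
    if not all(1 <= s <= 5 for s in scores.values()):
        raise ValueError(f"score out of range: {scores}")
    return scores


prompt = build_judge_prompt(
    "Report GOOG's current price",
    ["call get_stock_price(ticker='GOOG')", "call get_stock_price(ticker='GOOG')"],
    "GOOG is trading at the returned price.",
)
reply = '{"helpfulness": 5, "tool_efficiency": 3, "safety": 5}'  # made-up judge reply
print(parse_judge_scores(reply))
```

The duplicated tool call in the trajectory is the kind of redundancy the tool-efficiency criterion is meant to penalize.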

Evaluation Metric Table

| Metric | Description | Target Score |
|---|---|---|
| Faithfulness | How well the agent sticks to retrieved data | > 0.90 |
| Task Completion | Success rate of complex multi‑step goals | > 0.85 |
| Latency per Step | Time taken for a single reasoning loop | < 2.0 s |
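Once runs have been scored, checking them against the targets in the table is simple bookkeeping. A minimal sketch, assuming each run record carries a judge-assigned faithfulness score, a completion flag, a step count, and total latency in seconds; the record format and the three runs are made up, and latency per step is pooled across runs (total seconds divided by total steps).

```python
from statistics import mean
from typing import Dict, List

# Targets from the table: (comparison, threshold)
TARGETS = {
    "faithfulness": (">", 0.90),
    "task_completion": (">", 0.85),
    "latency_per_step_s": ("<", 2.0),
}


def summarize_runs(runs: List[dict]) -> Dict[str, float]:
    return {
        "faithfulness": mean(r["faithfulness"] for r in runs),
        "task_completion": mean(1.0 if r["completed"] else 0.0 for r in runs),
        "latency_per_step_s": sum(r["latency_s"] for r in runs) / sum(r["steps"] for r in runs),
    }


def check_targets(metrics: Dict[str, float]) -> Dict[str, bool]:
    return {name: (metrics[name] > t if op == ">" else metrics[name] < t)
            for name, (op, t) in TARGETS.items()}


runs = [  # illustrative records, not real measurements
    {"faithfulness": 0.95, "completed": True, "steps": 4, "latency_s": 6.0},
    {"faithfulness": 0.92, "completed": True, "steps": 5, "latency_s": 8.5},
    {"faithfulness": 0.88, "completed": False, "steps": 8, "latency_s": 19.0},
]
metrics = summarize_runs(runs)
print(check_targets(metrics))
```

Here task completion (2 of 3 runs) misses the 0.85 target even though faithfulness and per-step latency pass.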

Conclusion

Gemini 3 and Vertex AI Agent Builder have fundamentally lowered the barrier to building intelligent, autonomous systems. By:

- Using a modular multi‑agent architecture,

- Leveraging native function calling,

- Implementing rigorous evaluation cycles,

developers can move beyond prototypes to production‑ready AI solutions.

The key to success lies not in the size of the prompt, but in the elegance of the orchestration and the reliability of the tools provided to the agents. As we enter the era of agentic software, the developer’s role shifts from writing logic to designing ecosystems where agents collaborate effectively.