Beyond RAG: Building a Logic-Grounded AI Host with a Dual-Layer Architecture

Source: Dev.to

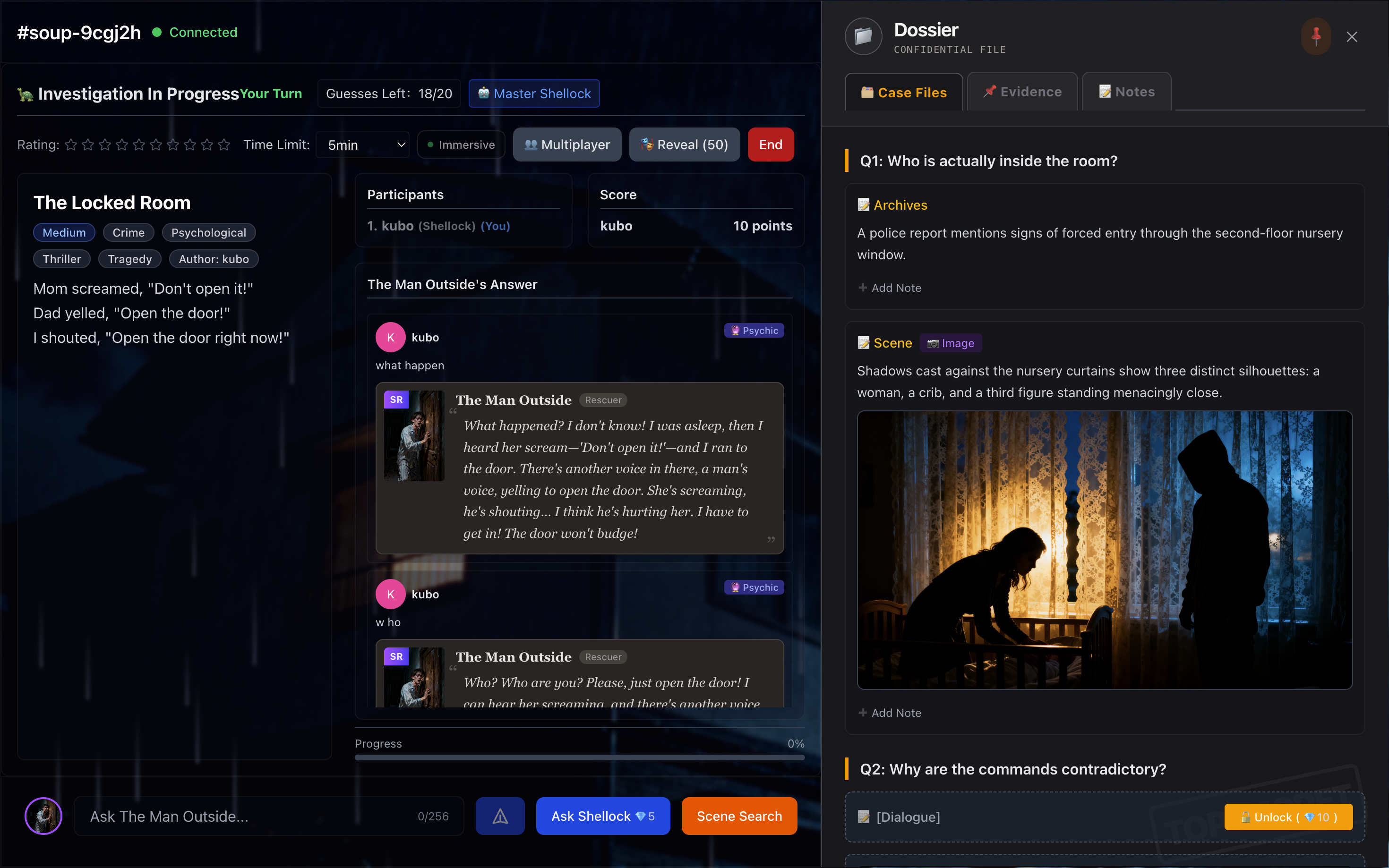

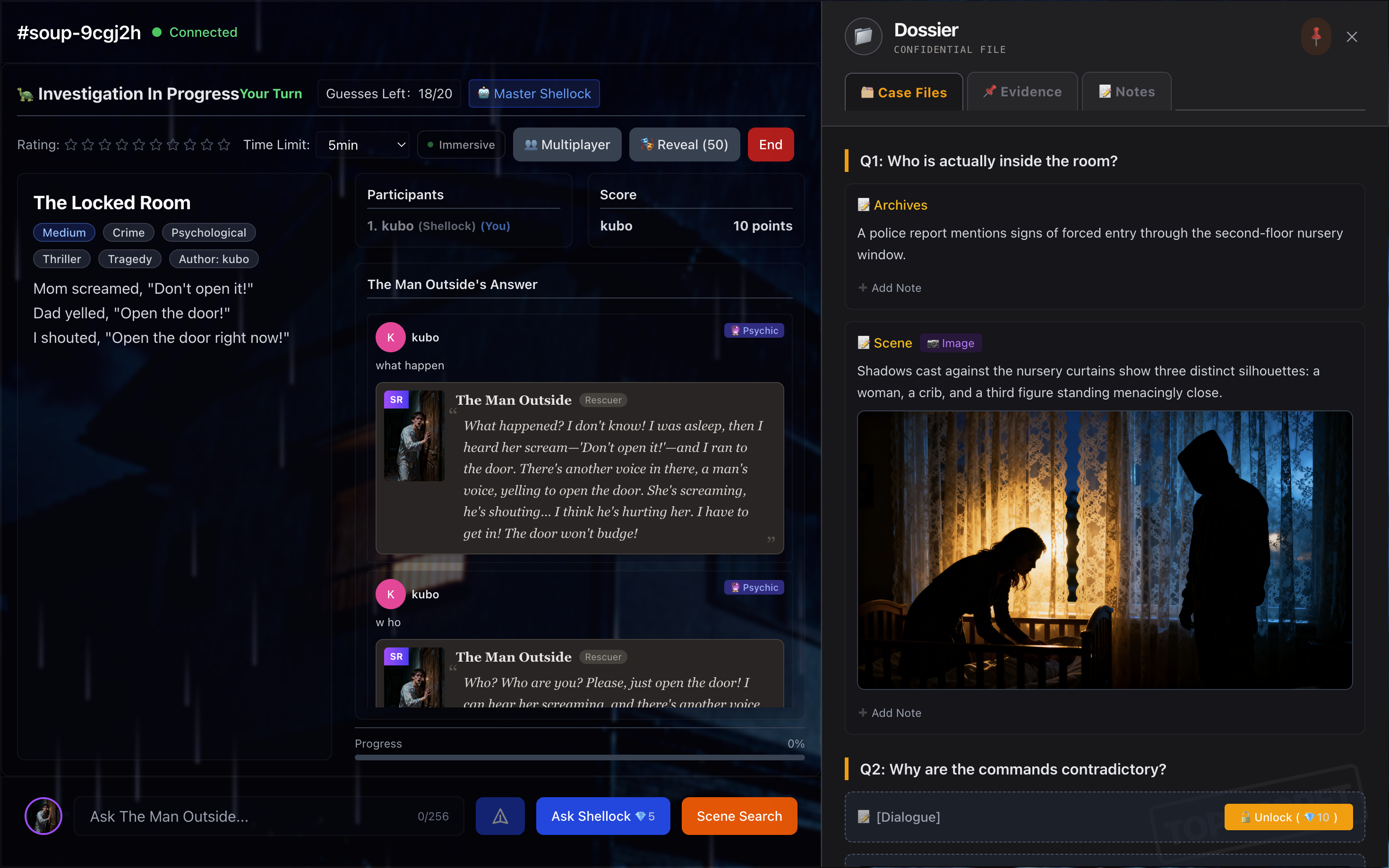

More Than Just a Chatbot: How I Engineered an “Immersive” AI Host for Lateral‑Thinking Puzzles

When we talk about AI apps today, we often get stuck in the binary of RAG (Retrieval‑Augmented Generation) or Prompt Engineering. While building TurtleNoir (my multiplayer lateral‑thinking‑puzzle game), I realized that real‑time inference alone wasn’t enough.

Below is the story of how I gave the AI Host a “soul.”

What Are Lateral‑Thinking Puzzles?

For those unfamiliar, the game (often called “Turtle Soup” in Asia) works like this:

- Players are presented with a strange scenario (the Surface).

- The hidden truth is the Soup.

It requires at least two people:

| Role | Description |
|---|---|
| Host | Knows the truth and answers the players. |
| Players | Ask Yes/No questions to deduce the backstory. |

Example – A captain drinks a bowl of turtle soup, cries, and then commits suicide. Why?

Players ask Yes/No questions; the Host must answer Yes / No / Irrelevant.

From Burning $5 in 30 Minutes to Sustainable Costs

- Early prototype – built for my girlfriend using the free Gemini API. It worked for two people.

- Viral moment – a TikTok influencer featured the site; hundreds of users flooded in.

- Free tier broke – switched to an API‑key pool, but rate limits were still too tight.

- OpenRouter migration – costs hit $5 in 30 minutes before being cut by about 90 %, making the platform sustainable.

The Dilemma: From “Glitchy AI” to “Omniscient Host”

The charm of the game lies in information asymmetry – the Host knows everything but can only answer Yes/No.

First attempt (single‑prompt)

You are the Host. Here is the story... Here is the truth... Answer the player.

- With Chain‑of‑Thought – the Host was too slow; token cost doubled.

- Without Chain‑of‑Thought – the AI missed subtle narrative tricks; it couldn’t build a rigorous logical defence within ~500 ms.

Realisation: Asking one model to understand a complex puzzle, maintain logical consistency, and role‑play simultaneously is asking too much.

Solution: Decouple “Thinking” from “Speaking.”

Why RAG Failed in Practice

Before landing on the final architecture I tried a classic RAG (vector‑search) approach:

- Store every Player Question + AI Answer in a vector DB.

- If a new question is semantically similar to a past one, serve the cached answer.

What went wrong?

Lateral‑thinking puzzles demand logical precision, not just semantic similarity.

| Question A | Question B | Embedding similarity | Problem |
|---|---|---|---|
| “Did he kill himself?” | “Was he killed?” | High (both about death) | RAG would return the same cached answer, breaking game logic. |

Lesson: Probability‑based similarity can’t guarantee logical correctness. Structured reasoning is required.
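The failure mode above can be reproduced in a few lines. This is a minimal sketch, not the original implementation: `toy_embed` is a hypothetical stand-in for a real embedding model (it only counts coarse topics), and `SemanticCache` and the 0.9 threshold are assumptions made for illustration. In the captain example, "Did he kill himself?" is a Yes, while "Was he killed?" should be a No.

```python
import math
import re

# Hypothetical stand-in for a real embedding model: it counts how many tokens
# fall under each coarse topic, so topic dominates and logical direction is lost.
TOPICS = {
    "death": ("kill", "die", "dead", "suicide"),
    "person": ("he", "she", "captain"),
    "food": ("soup", "drink", "eat", "turtle"),
}


def toy_embed(text: str) -> list[float]:
    tokens = re.findall(r"[a-z]+", text.lower())
    # str.startswith accepts a tuple of prefixes.
    return [
        float(sum(1 for tok in tokens if tok.startswith(stems)))
        for stems in TOPICS.values()
    ]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Serve a stored answer when a new question is 'similar enough'."""

    def __init__(self, threshold: float = 0.9):
        self.threshold = threshold
        self.entries: list[tuple[list[float], str]] = []

    def put(self, question: str, answer: str) -> None:
        self.entries.append((toy_embed(question), answer))

    def get(self, question: str):
        query = toy_embed(question)
        best = max(self.entries, key=lambda e: cosine(query, e[0]), default=None)
        if best is not None and cosine(query, best[0]) >= self.threshold:
            return best[1]
        return None


cache = SemanticCache()
cache.put("Did he kill himself?", "Yes")
collision = cache.get("Was he killed?")      # cache hit: returns the stored "Yes"
miss = cache.get("Did he drink the soup?")   # different topic: no cache hit
```

The second lookup is a cache hit because both questions land on the same "death + person" vector, so the player asking "Was he killed?" receives the answer meant for the opposite question. Raising the threshold does not help when the two vectors are effectively identical.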

The Solution: A Dual‑Layer AI Architecture

Layer 1 – The Architect (offline deep thinking)

Runs only when a new puzzle is added.

- Uses a high‑compute model (e.g., Claude 3 Opus, GPT‑4 Turbo).

- Spends ~30 s deconstructing the story.

- Generates a Logic Profile – a comprehensive JSON containing:
  - Core trick
  - Physical evidence
  - Causal relationships
  - Epistemic blind‑spots for each role
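Since the Host later trusts this profile blindly, it may be worth validating the Architect's output before storing it. The sketch below is one possible shape, assuming the field names used in this article's example profile; `LogicProfile`, `parse_logic_profile`, and the ordering check are hypothetical and not taken from TurtleNoir's code.

```python
import json
from dataclasses import dataclass

REQUIRED_KEYS = (
    "puzzle_id",
    "core_trick",
    "evidence",
    "causal_chain",
    "epistemic_blind_spots",
)


@dataclass(frozen=True)
class LogicProfile:
    puzzle_id: str
    core_trick: str
    evidence: list[str]
    causal_chain: list[dict]
    epistemic_blind_spots: dict[str, list[str]]


def parse_logic_profile(raw: str) -> LogicProfile:
    """Parse Architect output and reject profiles with missing fields."""
    data = json.loads(raw)
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"Logic Profile is missing fields: {missing}")
    # Assumption: causal steps are numbered and should arrive in order.
    steps = [item["step"] for item in data["causal_chain"]]
    if steps != sorted(steps):
        raise ValueError("causal_chain steps must be in ascending order")
    return LogicProfile(**{key: data[key] for key in REQUIRED_KEYS})


sample = json.dumps({
    "puzzle_id": "turtle_soup_001",
    "core_trick": "misdirection via smell",
    "evidence": ["broken bottle", "wet footprints"],
    "causal_chain": [{"step": 1, "description": "..."}],
    "epistemic_blind_spots": {"witness": ["did not hear the scream"]},
})
profile = parse_logic_profile(sample)
```

Rejecting a malformed profile at this stage costs one more offline Architect run, which is far cheaper than a Host that answers from an incomplete profile during a live game.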

{
  "puzzle_id": "turtle_soup_001",
  "core_trick": "misdirection via smell",
  "evidence": ["broken bottle", "wet footprints"],
  "causal_chain": [
    {"step": 1, "description": "..."},
    {"step": 2, "description": "..."}
  ],
  "epistemic_blind_spots": {
    "dead_character": ["cannot see attacker’s face"],
    "witness": ["did not hear the scream"]
  }
}

Layer 2 – The Host (real‑time response)

Runs for every player interaction.

- Loads the pre‑computed Logic Profile.

- Uses a cheap, fast model (e.g., DeepSeek V3.2) to:
  - Interpret the player’s Yes/No question.
  - Consult the Logic Profile for the correct answer.
  - Role‑play according to the defined blind‑spots.

Because the heavy logical lifting is done offline, the Host can answer instantly and cheaply.
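A rough sketch of what the Host's request path could look like. Everything here is an assumption for illustration: the prompt wording, the function names, and `call_model`, which stands for whatever client sends chat messages to the fast model and returns its text reply. Falling back to "Irrelevant" on an unparseable reply is likewise a design choice, not something the article specifies.

```python
import json
from typing import Callable

VERDICTS = ("Yes", "No", "Irrelevant")


def build_host_messages(profile: dict, question: str) -> list[dict]:
    """Put the pre-computed Logic Profile in the system prompt as ground truth."""
    system = (
        "You are the Host of a lateral-thinking puzzle. "
        "Answer ONLY with Yes, No, or Irrelevant, using this Logic Profile "
        "as ground truth:\n" + json.dumps(profile, ensure_ascii=False)
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


def normalize_verdict(reply: str) -> str:
    """Map free-form model text onto one of the three legal answers."""
    words = reply.strip().split()
    first = words[0].strip(".,!:;").lower() if words else ""
    for verdict in VERDICTS:
        if first == verdict.lower():
            return verdict
    return "Irrelevant"  # assumption: safest fallback when the reply is unclear


def answer(profile: dict, question: str,
           call_model: Callable[[list[dict]], str]) -> str:
    # call_model is injected so the sketch stays independent of any provider SDK.
    return normalize_verdict(call_model(build_host_messages(profile, question)))
```

Because the system prompt is identical for every question on the same puzzle, this layout also suits provider-side prompt caching where that is available.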

How Pre‑Caching Unlocked Advanced Features

1. Seance Mode & “Epistemic Blind Spots”

In TurtleNoir, players can summon characters or objects and converse with them.

The challenge is perspective control: a dead character shouldn’t know who killed them if they were attacked from behind.

- The Architect pre‑defines each role’s knowledge limits in the Logic Profile.

- The Host enforces those limits during dialogue, producing a Rashomon‑style narrative where each perspective is fragmented rather than omniscient.
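One way to enforce those limits is to build a per-role prompt that contains only that role's blind spots. This is a sketch under assumptions: `seance_prompt` and the prompt wording are hypothetical, and the profile layout follows the `epistemic_blind_spots` field from the example profile.

```python
def seance_prompt(profile: dict, role: str) -> str:
    """Build a role-play prompt limited to what this role may know."""
    blind_spots = profile["epistemic_blind_spots"].get(role)
    if blind_spots is None:
        # Refuse to role-play a character whose limits were never defined.
        raise KeyError(f"no knowledge limits defined for role {role!r}")
    limits = "\n".join(f"- {item}" for item in blind_spots)
    return (
        f"You are speaking as '{role}'. Stay in character.\n"
        "You do NOT know anything covered by these limits, and must say so "
        "if asked:\n" + limits
    )


profile = {
    "epistemic_blind_spots": {
        "dead_character": ["cannot see attacker's face"],
        "witness": ["did not hear the scream"],
    }
}
prompt = seance_prompt(profile, "dead_character")
```

Each summoned character sees only its own limits, so one role's prompt never carries another role's knowledge gaps.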

2. Dynamic Hint System

Because the Logic Profile already contains the causal chain, the Host can offer tiered hints (e.g., “You’re on the right track” → “Consider the smell”) without re‑computing the puzzle logic.
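A tiered hint lookup might look like the sketch below. The tier layout (encouragement, then the core trick, then one causal step per level) is an assumption, as are `hint_for` and the step descriptions in the sample profile, which are placeholders rather than real puzzle content.

```python
def hint_for(profile: dict, level: int) -> str:
    """Return a progressively more revealing hint without calling any model."""
    if level <= 0:
        return "You're on the right track."
    chain = sorted(profile.get("causal_chain", []), key=lambda s: s["step"])
    if level == 1 or not chain:
        return f"Consider this angle: {profile['core_trick']}."
    # Levels 2, 3, ... reveal causal steps one at a time, capped at the last step.
    index = min(level - 2, len(chain) - 1)
    return f"Step {chain[index]['step']}: {chain[index]['description']}"


profile = {
    "core_trick": "misdirection via smell",
    "causal_chain": [
        {"step": 2, "description": "second event"},  # placeholder text
        {"step": 1, "description": "first event"},   # placeholder text
    ],
}
hints = [hint_for(profile, level) for level in range(4)]
```

Every hint is a plain dictionary lookup, so requesting one adds no model call and no token cost.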

3. Multiplayer Synchronisation

All players share the same Logic Profile, ensuring consistent answers across concurrent sessions while keeping latency low.
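As an illustration of the sharing idea, the sketch below loads each profile once per process and hands every session the same read-only object. `PROFILE_STORE` is a hypothetical in-memory stand-in for wherever the Architect's output actually lives, and `get_profile` is an assumed name.

```python
import json
from functools import lru_cache
from types import MappingProxyType

# Hypothetical stand-in for the real profile storage (database, file, etc.).
PROFILE_STORE = {
    "turtle_soup_001": '{"puzzle_id": "turtle_soup_001", '
                       '"core_trick": "misdirection via smell"}',
}
LOAD_COUNT = {"turtle_soup_001": 0}  # only here to show the load happens once


@lru_cache(maxsize=128)
def get_profile(puzzle_id: str) -> MappingProxyType:
    LOAD_COUNT[puzzle_id] = LOAD_COUNT.get(puzzle_id, 0) + 1
    # The proxy blocks top-level writes, so one session cannot alter
    # what the other sessions see.
    return MappingProxyType(json.loads(PROFILE_STORE[puzzle_id]))


session_a = get_profile("turtle_soup_001")
session_b = get_profile("turtle_soup_001")
```

Note that `lru_cache` is per process: a deployment with several worker processes would hold one copy per worker, which is still consistent as long as stored profiles are never modified after the Architect writes them.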

Takeaways

| Insight | Why It Matters |
|---|---|
| Separate reasoning from language generation | Cuts cost, improves speed, and guarantees logical consistency. |
| Cache heavy context once | Reduces token usage dramatically for multi‑turn games. |
| Never rely on pure semantic similarity for logic puzzles | Structured reasoning (JSON profiles) is essential. |
| Pre‑define epistemic limits | Enables richer, perspective‑aware role‑play. |

TL;DR

- Build a heavy offline “Architect” that turns each puzzle into a structured Logic Profile.

- Deploy a lightweight “Host” that reads the profile and answers Yes/No in real time.

- Use context caching to avoid re‑sending large story texts.

- The result: a cheap, fast, and truly immersive AI Host that can handle hundreds of concurrent players without breaking the game’s logical core.

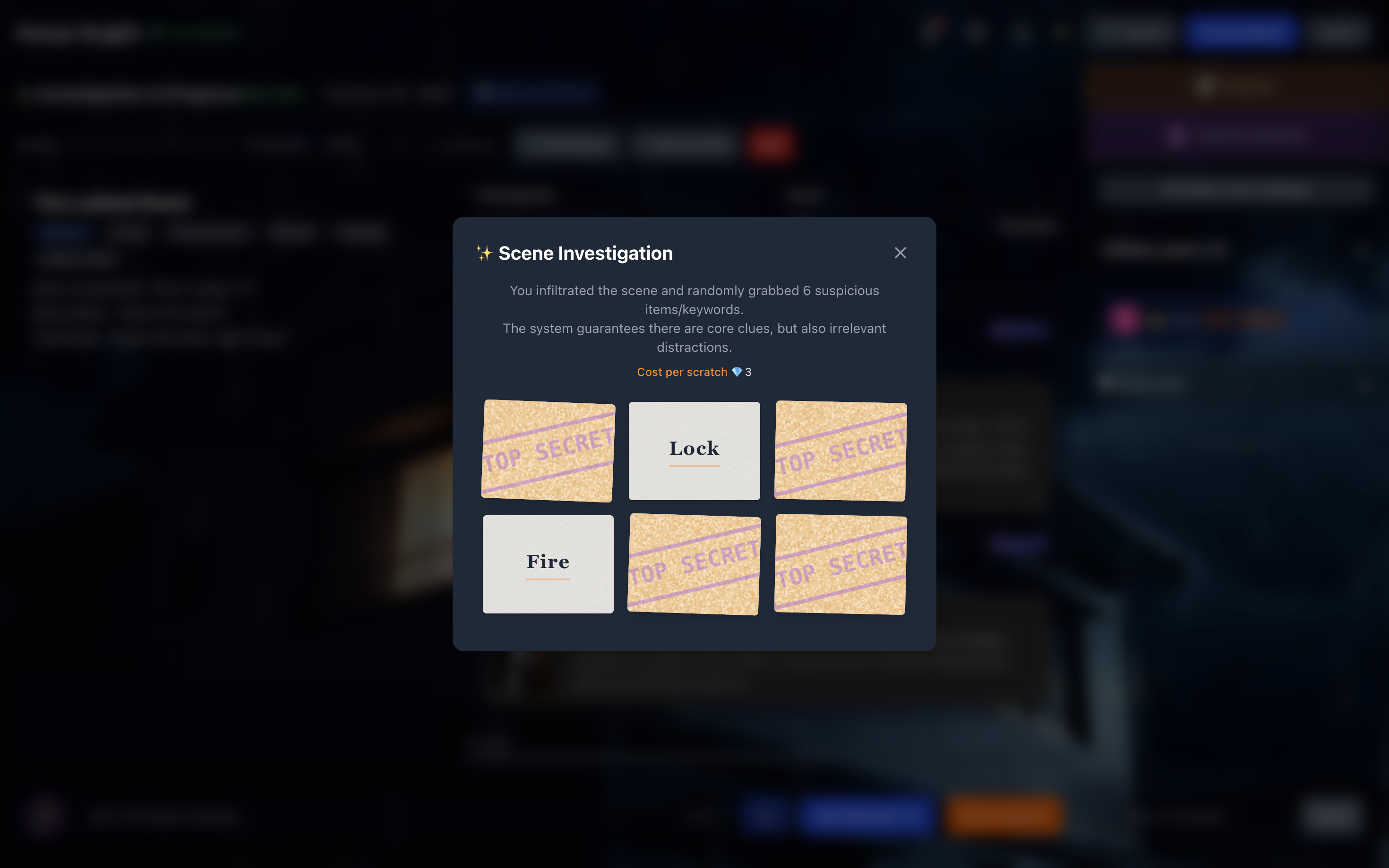

4. Evidence Collection: Organized Randomness

Players can scratch off “Evidence Bags.” These aren’t random hallucinations – the Architect pre‑generates the contents:

- 1 Core Evidence – points to the key truth

- 3 Circumstantial Clues – assist reasoning

- 2 Red Herrings – designed to mislead

Real‑time AI struggles to invent good red herrings on the fly. Offline generation ensures quality design.
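The 1 / 3 / 2 composition above can be enforced when the bag is assembled. In this sketch, `build_evidence_bag`, the `kind` labels, and seeding the shuffle with the puzzle ID are assumptions; the two clue texts from the example profile are reused and the remaining items are made-up placeholders.

```python
import random


def build_evidence_bag(core: list[str], circumstantial: list[str],
                       red_herrings: list[str], seed: str) -> list[dict]:
    """Combine pre-generated evidence and shuffle it reproducibly."""
    if len(core) != 1 or len(circumstantial) != 3 or len(red_herrings) != 2:
        raise ValueError("expected 1 core, 3 circumstantial and 2 red-herring items")
    bag = (
        [{"text": t, "kind": "core"} for t in core]
        + [{"text": t, "kind": "circumstantial"} for t in circumstantial]
        + [{"text": t, "kind": "red_herring"} for t in red_herrings]
    )
    # A string seed gives the same order on every run and every server,
    # so all players in a room see the same bags.
    random.Random(seed).shuffle(bag)
    return bag


bag = build_evidence_bag(
    core=["broken bottle"],
    circumstantial=["wet footprints", "placeholder clue A", "placeholder clue B"],
    red_herrings=["placeholder herring A", "placeholder herring B"],
    seed="turtle_soup_001",
)
```

The `kind` field would stay on the server; the client only needs the text, otherwise the red herrings are trivially exposed.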

Immersion Beyond Text

As an indie dev I also polished the frontend to match the backend logic:

- The Detective Workbench – a “Case File” tab collects unlocked clues; players can tag evidence as Critical, Doubtful, or Excluded.

- Dynamic Visuals – using the character descriptions from the Architect, I employ image‑generation models to create consistent character portraits without spoiling the gore or the twist.

Conclusion: Tech Serves the Experience

Building TurtleNoir taught me that AI‑native apps risk becoming simple “Chatbot Wrappers” if they lack architectural depth.

By separating the Architect (Offline Reasoning) from the Host (Online Response) and using the Logic Profile as the bridge, we mimic the workflow of a human game designer. This lets the AI handle a game that relies heavily on strict logic and information gaps.

Technology is just the tool. The goal is that late‑night moment when you and your friends stay focused on the screen, piecing together a key character’s testimony and feeling fully immersed in the story.

One More Thing

The app is a PWA (Progressive Web App)—no download needed, perfect for mobile.

👉 Play the English Demo:

(Or check out the Chinese version: )

I’d love to hear how other indie devs are structuring their AI apps in the comments!