Against the Clock: How Data 360 Launched the Informatica Help Agent in 24 Days

Source: Salesforce Engineering

By Irina Malkova and Alexander Smith.

In our Engineering Energizers Q&A series, we highlight the engineering minds driving innovation across Salesforce. Today we spotlight Irina Malkova, Vice President of Product and Success Data, who helped deliver the data foundation behind the Informatica Help Agent in just 24 days.

Explore how the team met an ambitious deadline by refining project focus, converting 100,000 unstructured documents into searchable intelligence via Data 360, and applying established architectural frameworks to enable reliable retrieval for live agents.

What is your team’s mission as it relates to building the Data 360 foundation for the Informatica Help Agent?

The team builds trusted AI‑ready context. In this case, a knowledge base that empowers the Informatica agent to reliably answer customer questions and reduce support cases.

We support all agents that augment the Customer Success business motion, including those on help.salesforce.com and slack.com/help. Our strategy balances:

- Enabling helpful, tailored answers for each agent.

- Building a durable data foundation that can power future agents—reducing time‑to‑launch and ensuring consistent, trusted results across all experiences.

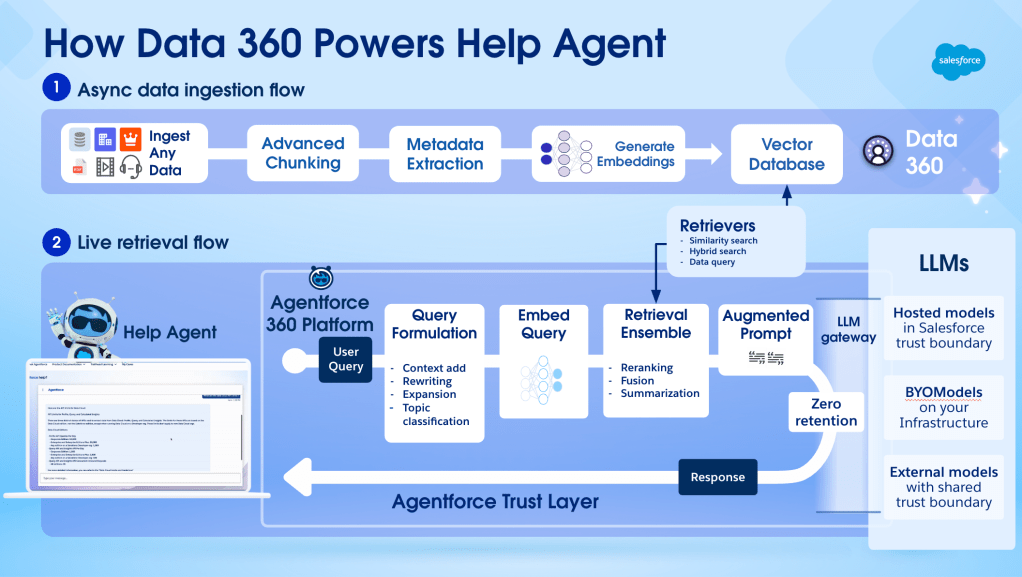

Data 360 is how the team unifies, standardizes, indexes, and activates unstructured knowledge. Data preparation is a notoriously difficult step in building AI, but Data 360 eliminates the need for custom pipelines, accelerates time‑to‑launch, and enables reuse—making tight deadlines possible.

Retrieval precision and accuracy defined the success of the Informatica Help Agent. By focusing on AI data readiness as a core engineering task, the team delivers correct answers and scales the system without losing trust.

What delivery constraints shaped the 24‑day launch of the Informatica Help Agent after acquisition?

We were challenged to enable the Informatica Agent in 30 days after the acquisition completed on November 18, 2025. The ambitious post‑acquisition timeline required strict discipline and architectural creativity. The team focused on delivering a production‑grade, high‑quality foundation rather than addressing every complex detail in the initial release.

To avoid friction that threatened the deadline, we leveraged clever architectural approaches. For instance, Informatica’s knowledge base had complex versioning, with many near‑duplicate articles differing only slightly across product versions. We managed product versioning through prompting and configuration rather than changing system logic, keeping the primary effort on ingestion and retrieval fundamentals.

Execution relied on reusing established Data 360 patterns while protecting the engineering team from distractions. By following a precise plan and sequencing tasks carefully, we completed the entire system in 24 days—ahead of the 30‑day deadline.

What data‑quality challenges emerged when preparing Informatica’s unstructured knowledge for AI consumption?

Informatica documentation was written for human readers rather than artificial intelligence. Raw HTML files contained headers, footers, and navigation menus that interfered with retrieval quality. To become AI‑ready, the knowledge needed a cleanup—but manual cleaning was impossible at this scale.

We used Data 360 patterns to normalize content and remove noise while preserving original meaning. This process transformed HTML into consistent chunks for better embedding and retrieval.

Preparing this volume of content would have taken weeks without Data 360. By using native ingestion and search features, we finished data preparation in days and moved quickly to performance optimization. Thanks to the cleanup, we had a solid performance baseline to start with—because context determines the quality of an agent’s response.

What ingestion and storage challenges shaped aggregating 100,000 Informatica documents into Data 360?

The Informatica knowledge base came from different systems with unique structures and metadata. The ingestion process had to handle these differences while remaining reliable at large scale.

- Website content – Much of the knowledge was available through a content‑management system and hosted on the Informatica website. We used the new Data 360 “sitemaps” feature, which crawls the site and creates conforming Data 360 knowledge.

- Unique content – For documents not reachable via a sitemap, Python workflows extracted the data, while Data 360 handled ingestion and storage.

The first ingestion of developer documentation finished in about three hours; subsequent updates ran faster as the pipelines stabilized.

We managed limitations in filtering and refresh timing through preprocessing and configuration. Despite these constraints, Data 360 pipelines supported hundreds of thousands of documents, creating a production‑ready knowledge base within the required timeline.

What retrieval‑accuracy and performance considerations guided your chunking and indexing strategy?

Accuracy remains vital because documentation varies by product version, audience, and depth. Our strategy focused on:

- Chunk size – Selecting a chunk length that balances semantic completeness with embedding efficiency (≈ 200‑300 tokens).

- Metadata enrichment – Attaching version, product, and audience tags to each chunk to enable precise filtering at query time.

- Hybrid indexing – Combining dense vector indexes for semantic similarity with traditional lexical indexes for exact term matching, ensuring both recall and precision.

- Evaluation loop – Running automated relevance tests (e.g., MRR, nDCG) on a curated QA set after each ingestion batch, allowing rapid tuning of chunk boundaries and ranking parameters.

By iterating on these levers, we achieved > 90 % retrieval precision on the internal benchmark while keeping query latency under 200 ms, meeting the performance expectations for a live support agent.

Architectural Insights

Mismatched content risks eroding trust even when responses appear relevant. To solve this, the team reused proven chunking strategies that worked for Customer Success and added filters and metadata tags during ingestion.

These tags enable more precise retrieval and simplify evaluation by narrowing results to the most relevant context. Real‑world usage validated this approach following the launch. The Informatica Help Agent achieved an 80 % resolution rate with only 5 % human escalation. This success demonstrates that retrieval accuracy and performance hold under live traffic without sacrificing quality.

What architectural decisions enabled reuse instead of rebuilding prior help‑agent data work?

Confidence in existing Data 360 patterns drove the decision to reuse systems and move quickly without adding unnecessary complexity. Rather than rebuilding from scratch, the team extended established configurations for ingestion, chunking, indexing, and retrieval to Informatica content.

Although Informatica data behaves differently than Salesforce‑authored content, necessary adjustments remained localized. Because pipelines and infrastructure follow a standard design, tuning did not require systemic changes or a ground‑up redesign.

This strategy avoided a rebuild that would have required a much larger team and months of extra work. In practice, reusing proven patterns in Data 360 delivered equivalent outcomes in a fraction of the usual time. The process maintained enterprise quality while establishing a scalable foundation for future agent expansions.

Learn more

- Stay connected — join our Talent Community!

- Check out our Technology and Product teams to learn how you can get involved.