Adding AI to sinus surgery system saw malfunctions rocket from eight to 100 incidents, according to new investigation — skull-puncturing errors are the stuff of nightmares

Source: Tom’s Hardware

Image credit: Acclarent

The introduction of AI to the operating theater doesn’t always work out as expected. Reuters has published a detailed report with examples taken from lawsuits involving three “AI‑enhanced” medical machines. In the most excruciating example of an AI‑assisted medical device going wrong, the enhanced system is blamed for causing major medical emergencies, resulting in blood spraying around the operating room and victims suffering strokes.

Before we go into detail, it must be noted that the FDA reports seen by Reuters aren’t complete, so they don’t definitively indicate that AI was the root cause behind the increase in mishaps. However, lawsuits argue that AI contributed to the injuries that occurred after the medical machinery “enhancements” arrived.

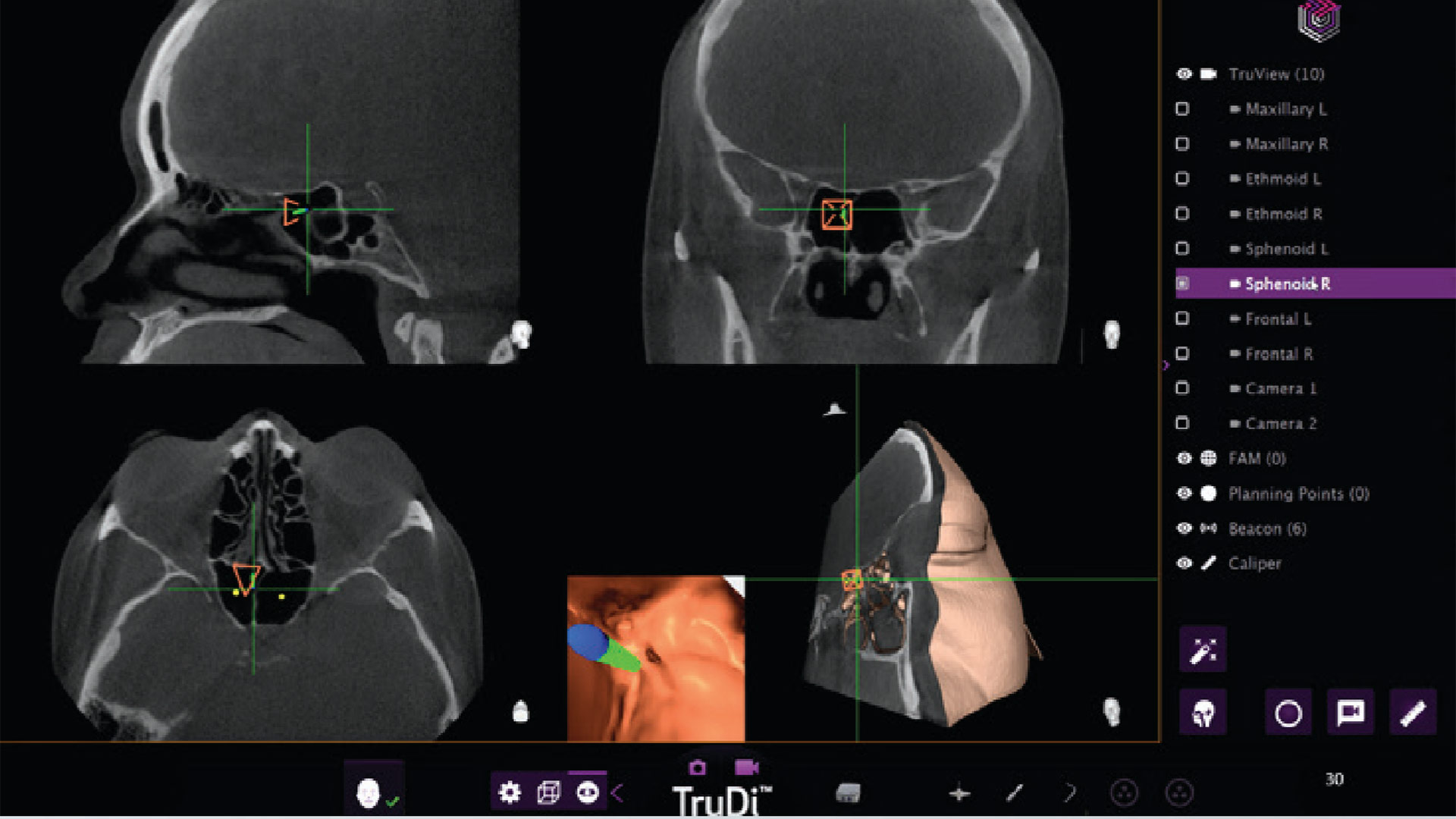

TruDi Navigation System from Acclarent

TruDi from Acclarent is used by clinicians to treat chronic sinusitis. It is designed to simplify surgical planning and provide real‑time feedback during delicate procedures such as sinus operations.

After three years on the market and a reported eight malfunctions, the device was “enhanced” by AI algorithms and has since been involved in at least 100 malfunctions and adverse events, according to the Reuters report.

Problems attributed to TruDi AI have included cerebrospinal fluid leaks, puncture of the base of the skull, major arterial damage, and strokes.

Case: Erin Ralph

Ralph’s lawsuit alleges that the TruDi system misdirected the surgeon, causing instruments to be placed near the carotid artery. This allegedly led to a blood clot and stroke. Ralph required five days in intensive care, experienced brain swelling, and had a portion of his skull removed as part of remedial treatment. One year later, he remains in physical therapy for ongoing issues.

Case: Donna Fernihough

Fernihough’s surgery reportedly resulted in “bleeding all over” the operating room after her carotid artery was damaged, leading to a stroke. Her lawyers describe the AI‑enhanced system as “inconsistent, inaccurate, and unreliable.” They claim Acclarent “lowered its safety standards to rush the new technology to market,” setting a goal of only 80% accuracy before integrating the AI into the TruDi Navigation System.

Issues with the eSonio Detect System and the Medtronic LINQ Implantable Cardiac Monitor

- eSonio Detect – The fetal image analyzer is accused of using a faulty algorithm that misidentifies fetal structures and body parts. No reports of patient harm have been documented.

- Medtronic LINQ – The implantable cardiac monitor, which incorporates AI assistance, is alleged to have failed to recognize abnormal rhythms or pauses in patients. Again, no incidents of patient harm are known.

Beyond the strain on FDA resources, the screening process for approving AI devices may need reworking. Reuters indicates that the FDA currently leans heavily on a device’s prior reputation, effectively “positioning new devices as updates on existing ones.” This approach may let manufacturers bring AI‑enhanced machines to market more quickly, but it raises concerns about how thoroughly they are vetted when human health is at stake.